Top 5 Hugging Face Alternatives (2026)


Hugging Face Inference Endpoints is the paid production side of Hugging Face. You pick a model from the Hub, provision a dedicated NVIDIA GPU, and get a managed TGI deployment in a few clicks.

But you pay for that GPU whether it serves traffic or not, the hourly rate climbs fast at the H100 tier, the serving stack is built around TGI, and closed-source frontier models aren't available from the same product. For plenty of workloads, an alternative with different trade-offs is a better fit.

In this article, you'll learn about five Hugging Face Inference Endpoints alternatives, how they compare, and which one might be the best fit for your project.

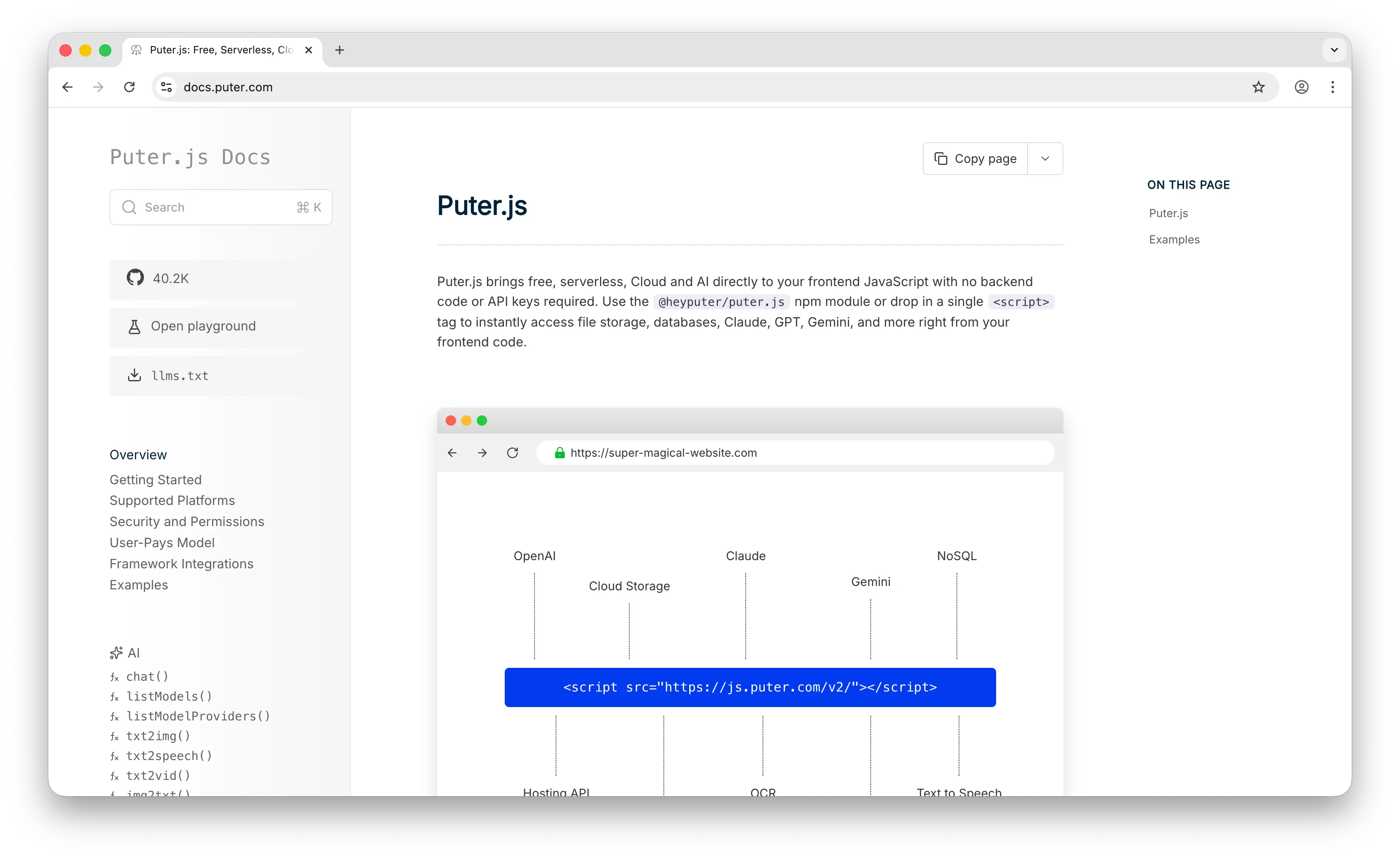

1. Puter.js

Puter.js is a JavaScript library that bundles AI, database, cloud storage, authentication, and more into a single package. It supports over 400 AI models, and counting, from providers including OpenAI, Anthropic, Google, and Meta.

What Makes It Different

Puter.js's defining feature is the User-Pays Model: each end user of your app brings their own Puter account, and that account is billed for the AI usage they generate. The developer integrates the SDK and ships the app with no API keys, no GPU bill at the end of the month, no backend, and no infrastructure to operate. Hugging Face Inference Endpoints sits at the opposite end of that spectrum: you provision a GPU, you pay for every hour it runs, and the bill is yours whether or not anyone hit your endpoint that day.

The model coverage also stretches well beyond chat. Image generation, image analysis, video generation, video analysis, OCR, speech-to-text, text-to-speech, and voice changing all live behind the same client library and the same User-Pays billing. Hugging Face supports those workloads through the Hub too, but each one usually means a separate endpoint, a separate model loaded into memory, and a separate line on your monthly invoice.
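To make the billing difference concrete, here is a minimal cost sketch. It assumes one dedicated endpoint per modality and made-up hourly rates (the endpoint names and prices are hypothetical, not Hugging Face quotes). The point it shows is that the developer's bill depends on how long replicas run, not on how many requests they serve.

```typescript
// Hypothetical cost model: a dedicated endpoint bills per running replica-hour,
// so request volume never enters the bill. Rates below are made up for illustration.
interface Endpoint {
  name: string;
  hourlyRate: number; // USD per replica-hour (hypothetical)
  replicas: number;
}

// Total bill when every endpoint stays up for `hoursUp` hours.
function dedicatedMonthlyBill(endpoints: Endpoint[], hoursUp: number): number {
  return endpoints.reduce((sum, e) => sum + e.hourlyRate * e.replicas * hoursUp, 0);
}

// Effective price per request: falls as traffic grows, explodes when traffic is light.
function costPerRequest(bill: number, requests: number): number {
  return requests === 0 ? Infinity : bill / requests;
}

// One endpoint per modality (chat, images, speech), each with its own replica.
const endpoints: Endpoint[] = [
  { name: "chat-llm", hourlyRate: 2, replicas: 1 },
  { name: "image-gen", hourlyRate: 1, replicas: 1 },
  { name: "speech-to-text", hourlyRate: 0.5, replicas: 1 },
];

const bill = dedicatedMonthlyBill(endpoints, 720); // 720 hours = 30 days, always on
console.log(bill);                          // 2520
console.log(costPerRequest(bill, 100_000)); // 0.0252
console.log(costPerRequest(bill, 100));     // 25.2
```

Under the User-Pays model, the matching line on the developer's own invoice stays at $0, because each user's Puter account covers the usage that user generates.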

Key Differences from Hugging Face Inference Endpoints

What Puter.js doesn't do is host your own custom-trained or privately fine-tuned model on dedicated hardware. The catalog is limited to the 400+ pretrained models Puter has integrated, so if your workflow centers on serving a model you trained yourself and published to the Hub, Inference Endpoints still owns that use case. Embeddings aren't currently supported either. Observability and SLA tooling are lighter than what enterprise teams running production endpoints may rely on, and the User-Pays model fits browser-side apps more naturally than backend-heavy server workloads.

Comparison Table

| Feature | Puter.js | Hugging Face Inference Endpoints |
|---|---|---|
| Pricing model | User-pays (free for devs) | Hourly per replica (billed by the minute) |
| Developer cost | $0 regardless of scale | $0.03 to $80+/hr per replica |
| Idle GPU cost | None | Pay while running unless paused |
| API key required | No | Yes |
| Backend required | No | Yes |
| Frontend SDK | Yes (native) | No |
| Pre-built model catalog | 400+ models, one API | All Hub models, one per endpoint |
| Custom model deployment | No | Yes |
| Open-source models | Yes | Yes |
| Closed-source models (GPT, Claude, etc.) | Yes | Via Inference Providers (separate product) |
| Image generation | Yes | Requires deploying an image model |
| Video generation | Yes | Requires deploying a video model |
| Audio (TTS/STT) | Yes | Requires deploying an audio model |
| Embeddings | No | Yes |
| Fine-tuning | No | Via separate HF tools |
| Setup time | Single script tag | A few minutes to deploy |
| Best for | Frontend/web app devs who want zero-cost AI integration | Teams deploying specific models from the Hub on dedicated GPUs |

2. Together AI

Together AI is an inference and training platform built around open-source models. It overlaps with Hugging Face Inference Endpoints in the obvious way (both serve open-source models in production), but Together's pitch leans on inference performance, billing flexibility, and a deeper ML toolkit that extends into fine-tuning, batch jobs, and reserved GPU clusters.

What Makes It Different

Together AI offers both serverless pay-per-token inference and dedicated endpoints, which means you can pick the billing model that fits your traffic shape. Hugging Face Inference Endpoints only does hourly per-replica billing, so a low-traffic API still pays full price for an idle GPU. With Together's serverless tier, you pay only for tokens processed.

Its inference engine is also research-driven, using techniques like speculative decoding, quantization, and FP8 kernels; Together claims up to 3.5x faster inference than standard deployments. Hugging Face Inference Endpoints typically runs TGI (Text Generation Inference) under the hood, which is solid but not as aggressively optimized. Together AI also offers batch inference at a 50% discount, GPU clusters for custom workloads, and both LoRA and full fine-tuning on every major Llama, Mistral, and Qwen size, including Llama's 405B flagship.
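Whether serverless or dedicated billing is cheaper depends on sustained volume. The sketch below uses hypothetical prices ($0.50 per million tokens on a serverless tier and $4/hr for a dedicated replica; neither is a Together AI quote) to find the break-even point, pick the cheaper tier for a given load, and apply the 50% batch discount.

```typescript
// Hypothetical prices for illustration only; real per-model rates differ.
const PRICE_PER_MILLION_TOKENS = 0.5; // USD, serverless per-token tier (assumed)
const DEDICATED_HOURLY_RATE = 4;      // USD per dedicated replica-hour (assumed)
const BATCH_DISCOUNT = 0.5;           // the 50% batch discount described above

function serverlessCost(tokens: number, pricePerMillion: number): number {
  return (tokens / 1_000_000) * pricePerMillion;
}

// Hourly token volume at which an always-on replica costs the same as serverless.
function breakEvenTokensPerHour(hourlyRate: number, pricePerMillion: number): number {
  return (hourlyRate / pricePerMillion) * 1_000_000;
}

// Cheaper billing model for a steady hourly load, using the assumed prices above.
function cheaperTier(tokensPerHour: number): "serverless" | "dedicated" {
  const serverlessPerHour = serverlessCost(tokensPerHour, PRICE_PER_MILLION_TOKENS);
  return serverlessPerHour <= DEDICATED_HOURLY_RATE ? "serverless" : "dedicated";
}

// Batch jobs pay the serverless price minus the discount.
function batchCost(tokens: number, pricePerMillion: number, discount: number): number {
  return serverlessCost(tokens, pricePerMillion) * (1 - discount);
}

console.log(breakEvenTokensPerHour(DEDICATED_HOURLY_RATE, PRICE_PER_MILLION_TOKENS)); // 8000000
console.log(cheaperTier(200_000));    // serverless
console.log(cheaperTier(20_000_000)); // dedicated
console.log(batchCost(10_000_000, PRICE_PER_MILLION_TOKENS, BATCH_DISCOUNT)); // 2.5
```

The break-even figure assumes a single replica can actually sustain that throughput; model size, GPU type, and batching decide whether it can.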

Key Differences from Hugging Face Inference Endpoints

Together AI focuses almost exclusively on open-source models and does not offer closed-source models like GPT or Claude directly. It also lacks the one-click "deploy any model from the Hub" experience; you choose from its curated catalog of 200+ models. For deploying truly arbitrary custom architectures or fine-tunes from a model registry, Hugging Face's tighter Hub integration is still smoother. Pricing markup on Together varies by model and isn't transparently documented.

Comparison Table

| Feature | Together AI | Hugging Face Inference Endpoints |
|---|---|---|
| Pricing model | Per-token (serverless) + hourly (dedicated) | Hourly per replica only |
| Idle GPU cost | None on serverless tier | Pay while running unless paused |
| Serverless inference | Yes | No (separate Inference Providers product) |
| Dedicated endpoints | Yes | Yes |
| Pre-built model catalog | 200+ open-source models | All HF Hub models |
| Custom model deployment | Limited (no one-click Hub deploy) | Native Hub integration |
| Inference engine | Custom (FlashAttention, FP8, speculative decoding) | TGI |
| Inference speed | Claims up to 3.5x faster | Standard TGI |
| Fine-tuning | LoRA + full | Via separate HF tools |
| Batch inference | Yes (50% discount) | No |
| GPU clusters | Yes | No |
| Open-source models | Extensive | Yes |
| Closed-source models | No | Via Inference Providers |
| Image generation | Yes | Requires deploying a model |
| Audio models | Yes | Requires deploying a model |
| Embeddings | Yes | Yes |
| Reranking models | Yes | Requires deploying a model |
| Free tier | $25 in credits | None ($0.03/hr minimum) |
| Best for | Teams needing serverless pricing, fine-tuning, and faster inference on open-source models | Teams wanting native Hub integration with managed deployment |

3. OpenRouter

OpenRouter is a unified API gateway that provides access to 400+ models from 60+ providers through a single OpenAI-compatible endpoint.

What Makes It Different

OpenRouter is fundamentally a routing layer, not a hosting platform. Instead of provisioning a dedicated GPU like Hugging Face Inference Endpoints does, OpenRouter sends your request to whichever provider (including Hugging Face itself) can serve the model best at that moment. There's no infrastructure on your side, and no infrastructure being held warm on theirs.

It supports automatic fallback when a provider goes down; routing variants such as :nitro (throughput), :floor (price), and :exacto (tool-calling reliability); and billing only for successful runs. Pricing passes through from the underlying providers, plus a 5.5% fee on credit purchases. For developers who chose Hugging Face Inference Endpoints just to get "one API for many models," OpenRouter is structurally a better fit: one API, hundreds of models, and zero idle GPU costs.
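Because OpenRouter exposes an OpenAI-compatible endpoint, moving to it is mostly a matter of changing the request body. Here is a minimal sketch that builds a chat request with an ordered fallback list (the `models` array) and an optional provider sort preference. The model slugs are examples only, and the field names reflect our reading of OpenRouter's API reference, so confirm them there before relying on this.

```typescript
// Minimal sketch of an OpenRouter chat request with ordered fallbacks.
// Field names are assumptions to verify against OpenRouter's API reference.
type ProviderSort = "price" | "throughput" | "latency";

interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface OpenRouterRequest {
  models: string[];                  // tried in order if a model can't be served
  messages: ChatMessage[];
  provider?: { sort: ProviderSort }; // optional provider ordering preference
}

const OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions";

function buildRequest(prompt: string, models: string[], sortBy?: ProviderSort): OpenRouterRequest {
  if (models.length === 0) {
    throw new Error("at least one model is required");
  }
  const body: OpenRouterRequest = {
    models,
    messages: [{ role: "user", content: prompt }],
  };
  if (sortBy !== undefined) {
    body.provider = { sort: sortBy };
  }
  return body;
}

// The API key travels as a bearer token; keep it server-side.
function buildHeaders(apiKey: string): Record<string, string> {
  return { Authorization: `Bearer ${apiKey}`, "Content-Type": "application/json" };
}

// Example: prefer one model, fall back to another, and sort providers by price.
const exampleBody = buildRequest(
  "Summarize this changelog in two sentences.",
  ["meta-llama/llama-3.1-70b-instruct", "mistralai/mistral-large"],
  "price",
);
console.log(OPENROUTER_URL, JSON.stringify(exampleBody));
```

You would POST this body as JSON to the chat completions URL with the headers from `buildHeaders`; no GPU, container, or endpoint has to exist on your side first.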

Key Differences from Hugging Face Inference Endpoints

OpenRouter doesn't host models itself, so you can't deploy custom or fine-tuned models the way you can on Inference Endpoints. There's no Cog or container support, no private model hosting, and no fine-tuning. It's pure access to public models from existing providers. Audio (TTS/STT) and video generation are limited compared to other alternatives. If your use case is specifically "deploy this fine-tuned Llama variant on my own dedicated GPU," OpenRouter doesn't solve that problem.

Comparison Table

| Feature | OpenRouter | Hugging Face Inference Endpoints |
|---|---|---|
| Pricing model | Per-token (passthrough + 5.5% credit fee) | Hourly per replica |
| Idle cost | None | Pay while running |
| Infrastructure on your side | None | Managed (HF handles GPU) |
| Multi-provider routing | Yes (60+ providers) | No |
| Auto-failover | Yes | No |
| Smart routing (price/speed/quality) | Yes | No |
| Pre-built model catalog | 400+ models | All HF Hub |
| Custom model deployment | No | Yes |
| Open-source models | Yes | Yes |
| Closed-source models | Extensive | Via Inference Providers |
| Image generation | Yes | Requires deploying a model |
| Video generation | Experimental | Requires deploying a model |
| Audio (TTS/STT) | Limited (recently added) | Requires deploying a model |
| Embeddings | | Yes |
| Fine-tuning | No | Via separate HF tools |
| Bring Your Own Key | Yes | No |
| Cold starts | None | Yes, during initialization |
| Best for | Teams wanting one API for all major LLMs without managing any infrastructure | Teams deploying specific or fine-tuned models from the Hub |

4. Replicate

Replicate runs AI models on a per-second compute pricing model and is best known for its deep catalog of image, video, and audio generation models. Cloudflare acquired the company in November 2025, but Replicate continues to operate under its own brand.

What Makes It Different

Replicate bills per second of compute time rather than per hour. If a public model takes 8 seconds to generate an image, you pay for 8 seconds. Hugging Face Inference Endpoints, by contrast, bills by the minute for a continuously running replica, so light or bursty traffic ends up paying for a lot of idle time.
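A quick worked comparison shows how much idle time a bursty image workload pays for. It assumes a hypothetical $0.001 per GPU-second for per-second billing and $1.50/hr for an always-on replica billed by the minute; neither figure is a published Replicate or Hugging Face price.

```typescript
// Hypothetical rates, not published Replicate or Hugging Face prices.
const PER_SECOND_RATE = 0.001;   // USD per GPU-second, charged only while a prediction runs
const REPLICA_HOURLY_RATE = 1.5; // USD per hour for a replica that stays up

// Per-second billing: pay only for the seconds predictions actually run.
// Assumes no per-call minimum and no rounding, which real platforms may apply.
function perSecondCost(calls: number, secondsPerCall: number): number {
  return calls * secondsPerCall * PER_SECOND_RATE;
}

// Per-minute billing of a running replica: every started minute counts, busy or idle.
function replicaCost(secondsUp: number): number {
  const billedMinutes = Math.ceil(secondsUp / 60);
  return (billedMinutes / 60) * REPLICA_HOURLY_RATE;
}

// Bursty workload: 200 image generations a day at 8 seconds each (1,600 GPU-seconds).
const burstyDaily = perSecondCost(200, 8);
// A replica serving the same traffic stays up for all 86,400 seconds of the day.
const alwaysOnDaily = replicaCost(86_400);
console.log(burstyDaily.toFixed(2), alwaysOnDaily.toFixed(2)); // 1.60 36.00
```

At these assumed rates, per-second billing stays cheaper until the GPU is busy for more than 36,000 seconds (10 hours) a day; beyond that, an always-on replica wins.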

Replicate also has one of the largest model catalogs in the industry, with 50,000+ production-ready models. Many are community-contributed via Cog, Replicate's open-source packaging format, which is roughly analogous to the role TGI and the HF Hub play together. Replicate has particularly strong image, video, and audio generation support, more polished than Hugging Face's experience for those modalities.

Key Differences from Hugging Face Inference Endpoints

Replicate's chat/LLM catalog is growing but not as extensive as what you can find on the HF Hub, and it's not the best choice if your primary workload is LLM inference at scale (Together AI, Groq, or direct API providers usually win there). The platform is built around running models on compute, not around a model registry the way the Hugging Face Hub is, so the developer experience for browsing, comparing, and downloading model weights is different. Cold starts on infrequently used models can add a few seconds to the first request.

Comparison Table

| Feature | Replicate | Hugging Face Inference Endpoints |
|---|---|---|
| Pricing model | Per-second of compute time | Hourly per replica (per-minute billing) |
| Billing granularity | Per-second | Per-minute |
| Idle cost on public models | None (pay only on calls) | Pay while running |
| Pre-built model catalog | 50,000+ | All HF Hub |
| Custom model deployment | Yes, via Cog | Yes, via the Hub |
| Model publishing (by anyone) | Yes | Yes (to the Hub) |
| Chat/LLM models | Growing, not as extensive | Extensive |
| Image generation | Excellent | Requires deploying a model |
| Video generation | Excellent | Requires deploying a model |
| Audio models | Yes | Requires deploying a model |
| Open-source models | Yes | Yes |
| Closed-source models | Some (via API partnerships) | Via Inference Providers |
| Fine-tuning | Yes | Via separate HF tools |
| Embeddings | Limited | Yes |
| Cold start | Yes (first call after idle) | Yes (initialization) |
| Ecosystem | Cloudflare (post-acquisition) | Hugging Face Hub native |
| Best for | Media generation (image, video, audio) and community models | LLM and text model deployments with custom fine-tunes from the Hub |

5. RunPod

RunPod is a GPU cloud platform offering both serverless inference and on-demand GPU pods at significantly lower prices than hyperscalers.

What Makes It Different

Where Hugging Face Inference Endpoints is a managed serving product, RunPod is raw GPU infrastructure. You bring a Docker container (or use one of their templates for vLLM, TGI, Stable Diffusion WebUI, etc.) and they give you the GPU. The trade-off is operational: more work on your side, much lower bills.

The prices are not in the same league. RunPod's Community Cloud offers an A100 80GB at around $0.89/hr, versus roughly $4 to $6/hr on Hugging Face for comparable hardware. An H100 runs $2 to $3/hr on RunPod, while a dedicated H100 on Hugging Face costs around $6.40 to $8/hr. RunPod also offers per-second serverless billing, advertised sub-200ms cold starts, zero egress fees, and 30+ GPU SKUs from B200s down to RTX 4090s, split between Community Cloud (cheaper, spot-style) and Secure Cloud (production-grade reliability).
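Multiplying the approximate hourly figures above by an assumed 730-hour month (8,760 hours divided by 12) shows what one always-on GPU costs on each platform. The rates come from this section; the month length is the only added assumption.

```typescript
// Approximate hourly rates quoted in this section (USD); 730 hours is an assumed average month.
const HOURS_PER_MONTH = 730;

interface RateRange {
  low: number;
  high: number;
}

type Gpu = "A100 80GB" | "H100";

// For the A100, RunPod's low end is Community Cloud and the high end is Secure Cloud.
const hourlyRates: Record<Gpu, { runpod: RateRange; huggingFace: RateRange }> = {
  "A100 80GB": { runpod: { low: 0.89, high: 1.89 }, huggingFace: { low: 4, high: 6 } },
  H100: { runpod: { low: 2, high: 3 }, huggingFace: { low: 6.4, high: 8 } },
};

// Cost of keeping one GPU running for a full month at each end of the range.
function monthly(rate: RateRange): RateRange {
  return { low: rate.low * HOURS_PER_MONTH, high: rate.high * HOURS_PER_MONTH };
}

for (const [gpu, rates] of Object.entries(hourlyRates)) {
  const rp = monthly(rates.runpod);
  const hf = monthly(rates.huggingFace);
  console.log(
    `${gpu}: RunPod $${rp.low.toFixed(0)}-$${rp.high.toFixed(0)}/mo, ` +
      `Hugging Face $${hf.low.toFixed(0)}-$${hf.high.toFixed(0)}/mo`,
  );
}
```

That works out to roughly $650 to $1,380 a month for an A100 80GB on RunPod against $2,920 to $4,380 on Hugging Face, and $1,460 to $2,190 for an H100 against $4,672 to $5,840.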

Key Differences from Hugging Face Inference Endpoints

You operate the serving stack yourself. Hugging Face Inference Endpoints handles model loading, TGI configuration, health checks, automatic restarts, and HTTPS termination. On RunPod, you set up vLLM or TGI, manage container images, handle scaling logic, and configure your own API layer. It's more powerful and dramatically cheaper at scale, but it's not a "one-click deploy from a model card" experience. For teams that haven't done production ML serving before, the learning curve is real.
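As one small example of the glue code you take on: when a self-managed vLLM or TGI container restarts, early requests can fail while the model is still loading. Below is a minimal, hypothetical retry helper with exponential backoff. The function name, defaults, and injected `sleep` parameter are illustrative choices, not part of any RunPod API.

```typescript
// Hypothetical helper: retry a request to a self-hosted endpoint with exponential backoff,
// e.g. while a restarted container is still loading model weights.
type Sleep = (ms: number) => Promise<void>;

const realSleep: Sleep = (ms) => new Promise<void>((resolve) => setTimeout(resolve, ms));

async function withRetry<T>(
  attempt: () => Promise<T>,
  maxAttempts = 4,
  baseDelayMs = 500,
  sleep: Sleep = realSleep,
): Promise<T> {
  let lastError: unknown;
  for (let i = 0; i < maxAttempts; i++) {
    try {
      return await attempt();
    } catch (err) {
      lastError = err;
      if (i < maxAttempts - 1) {
        // Wait baseDelayMs, then 2x, 4x, ... before the next attempt.
        await sleep(baseDelayMs * 2 ** i);
      }
    }
  }
  // Every attempt failed: surface the last error to the caller.
  throw lastError;
}
```

In production, `attempt` would wrap the HTTP call to your endpoint; injecting `sleep` keeps the helper testable without real delays. Health checks, autoscaling, and HTTPS termination are further pieces of the same job that Inference Endpoints otherwise handles for you.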

Comparison Table

| Feature | RunPod | Hugging Face Inference Endpoints |
|---|---|---|
| Pricing model | Per-second (serverless) or per-minute (pods) | Per-minute (hourly rate) |
| A100 80GB hourly rate (USD) | ~$0.89 (Community) / ~$1.89 (Secure) | ~$4-6 |
| H100 hourly rate (USD) | ~$2-3 | ~$6.40-8 |
| Egress fees | None | Varies by cloud provider |
| Cold start | Sub-200ms (serverless) | Several seconds |
| Management overhead | High (you operate the stack) | Low (fully managed) |
| Serving engine | Bring your own (TGI, vLLM, SGLang, etc.) | TGI (managed) |
| Pre-built model catalog | None (you bring the model) | All HF Hub |
| Custom model deployment | Yes, any Docker container | Yes, Hub-integrated |
| Scale-to-zero | Yes (serverless) | Paused state |
| GPU variety | 30+ SKUs (B200, H100, A100, 4090, etc.) | Limited selection |
| Spot/community pricing | Yes | No |
| Open-source models | Any | Yes |
| Closed-source models | N/A (run your own) | Via Inference Providers |
| Fine-tuning | Yes, on GPU pods | Via separate HF tools |
| Setup time | Hours (first time) | A few minutes |
| Best for | Cost-conscious teams who'll operate the serving stack themselves | Teams wanting fully managed HF Hub deployment with minimal ops |

Which Should You Choose?

Choose Puter.js if you're building a web app and want to add AI features without any backend, API keys, or GPU bills. The user-pays model is ideal for developers who don't want to worry about covering user costs as their app scales.

Choose Together AI if you need a serverless pay-per-token billing option, want to fine-tune open-source models, or need faster inference than vanilla TGI. It's the most powerful all-around option for ML-heavy teams working with open-source models.

Choose OpenRouter if your reason for using Inference Endpoints was just "one API for many models" rather than custom model hosting. OpenRouter solves the access problem without any infrastructure on either side and with automatic fallback across providers.

Choose Replicate if your focus is media generation (images, video, audio) or you need access to community-published models. Its per-second compute pricing works well for bursty, GPU-intensive workloads.

Choose RunPod if your Hugging Face bill is growing and you have the engineering capacity to operate your own serving stack. The same model running on TGI or vLLM costs a fraction on RunPod compared to managed Inference Endpoints.

Stick with Hugging Face Inference Endpoints if you need tight, native integration with the Hub, want one-click deployment of any open-source or private fine-tuned model on dedicated hardware, and value the convenience of a fully managed serving stack over per-hour cost optimization.

Conclusion

The top 5 Hugging Face alternatives are Puter.js, Together AI, OpenRouter, Replicate, and RunPod. Each takes a different approach to the problem Inference Endpoints solves, from Puter.js's zero-cost frontend integration, to Together AI's serverless and fine-tuning tools, to RunPod's raw GPU pricing. Whichever platform you choose, the best option is the one that matches your traffic shape, your operational appetite, and how your users will interact with AI in your app.

