Top 5 RunPod Alternatives (2026)

On this page

RunPod is a developer-friendly GPU cloud offering per-second billing, custom container deploys, and the RunPod Hub for one-click deploys of popular open-source models as your own dedicated endpoints. But even with click-deploy, you're still paying per-second for your own GPU workers, and plenty of alternatives take very different approaches—from shared per-token inference to fully abstracted, user-pays models.

In this article, you'll learn about five RunPod alternatives, how they compare, and which one might fit your project.

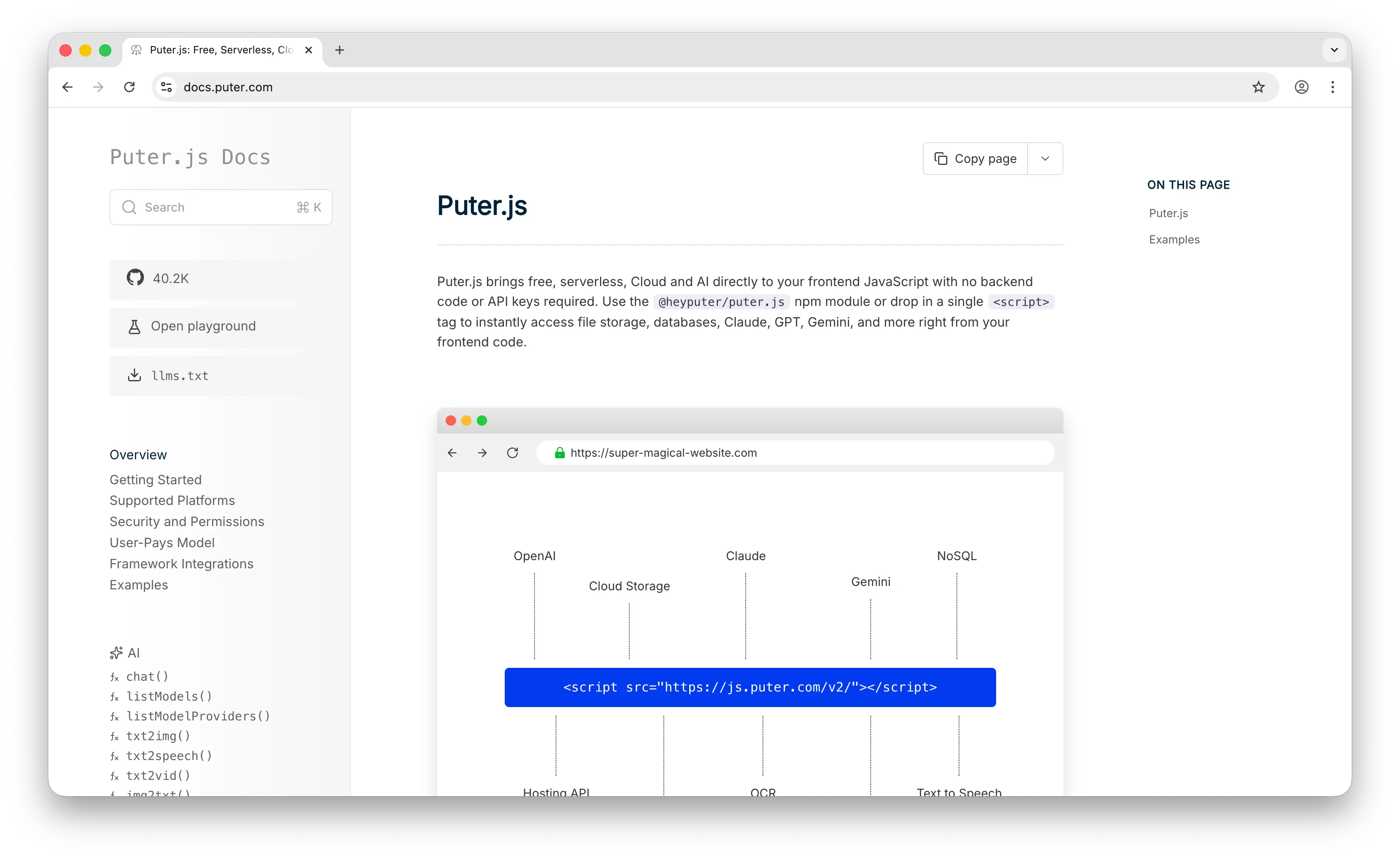

1. Puter.js

Puter.js is a JavaScript library that bundles AI, database, cloud storage, authentication, and more into a single package. It has extensive support for AI models, over 400 and growing, from providers like OpenAI, Anthropic, Google, Meta, and others.

What Makes It Different

Puter.js takes a fundamentally different approach to the question "who pays for AI?" With RunPod, you—the developer—rent the GPU, run the model, and absorb every cent of compute cost regardless of whether your app has 1 user or 100,000. Even when you click-deploy a Llama model from RunPod Hub, you're still paying for the dedicated GPU workers running it. Puter.js inverts this with the User-Pays Model: each end user covers their own AI usage through their Puter account, and the developer pays nothing, ever, regardless of scale.

The other inversion is at the access layer. RunPod hands you a dedicated endpoint—either a Hub-deployed model or your own custom container—that you provision, scale, and pay for. Puter.js hands you a single line of frontend JavaScript that calls a shared, managed pool of hundreds of pre-deployed models. There's no endpoint to provision, no cold start to engineer around, and no idle worker burning your credits. It also covers modalities that RunPod can technically handle but that you'd typically have to deploy and maintain yourself: text-to-image, image analysis, text-to-video, video analysis, OCR, speech-to-text, text-to-speech, and voice changing all ship in the box.

Key Differences from RunPod

Puter.js is not a GPU cloud. You can't run a custom fine-tuned model on a specific H100 SKU, you can't bring your own Docker container, and you don't get low-level control over the inference runtime. If you need to train a model from scratch, run a niche research workload, or squeeze every last cent out of GPU economics with spot pricing, RunPod (or Together AI) is the right tool. Puter.js is built for application developers who want AI in their app, not ML engineers who want a server.

Comparison Table

| Feature | Puter.js | RunPod |

|---|---|---|

| API key required | No | Yes |

| Pricing model | User-pays (free for devs) | Per-second GPU compute |

| Setup time | One <script> tag |

Click-deploy or custom container |

| Infrastructure management | None (shared pool) | Yes (your own endpoint) |

| Pre-built AI models | 400+ shared, ready-to-use | Available via RunPod Hub (deploy your own endpoint) |

| Chat models (GPT, Claude, Gemini, etc.) |  Extensive (incl. closed-source) Extensive (incl. closed-source) |

Open-source only (deploy via Hub) |

| Image generation |  |

Via Hub (your endpoint) |

| Video generation |  |

Via Hub (your endpoint) |

| Audio (TTS/STT) |  |

Via Hub (your endpoint) |

| OCR |  |

Via Hub (your endpoint) |

| Cold starts | Managed (none for the dev) | Sub-200ms with Flash Boot, slower otherwise |

| Custom Docker containers |  |

|

| Custom GPU selection |  |

(30+ options) (30+ options) |

| Fine-tuning own models |  |

|

| Frontend-native |  |

(backend required) (backend required) |

| Best for | Web app devs who want zero-cost AI integration | ML engineers needing full GPU control |

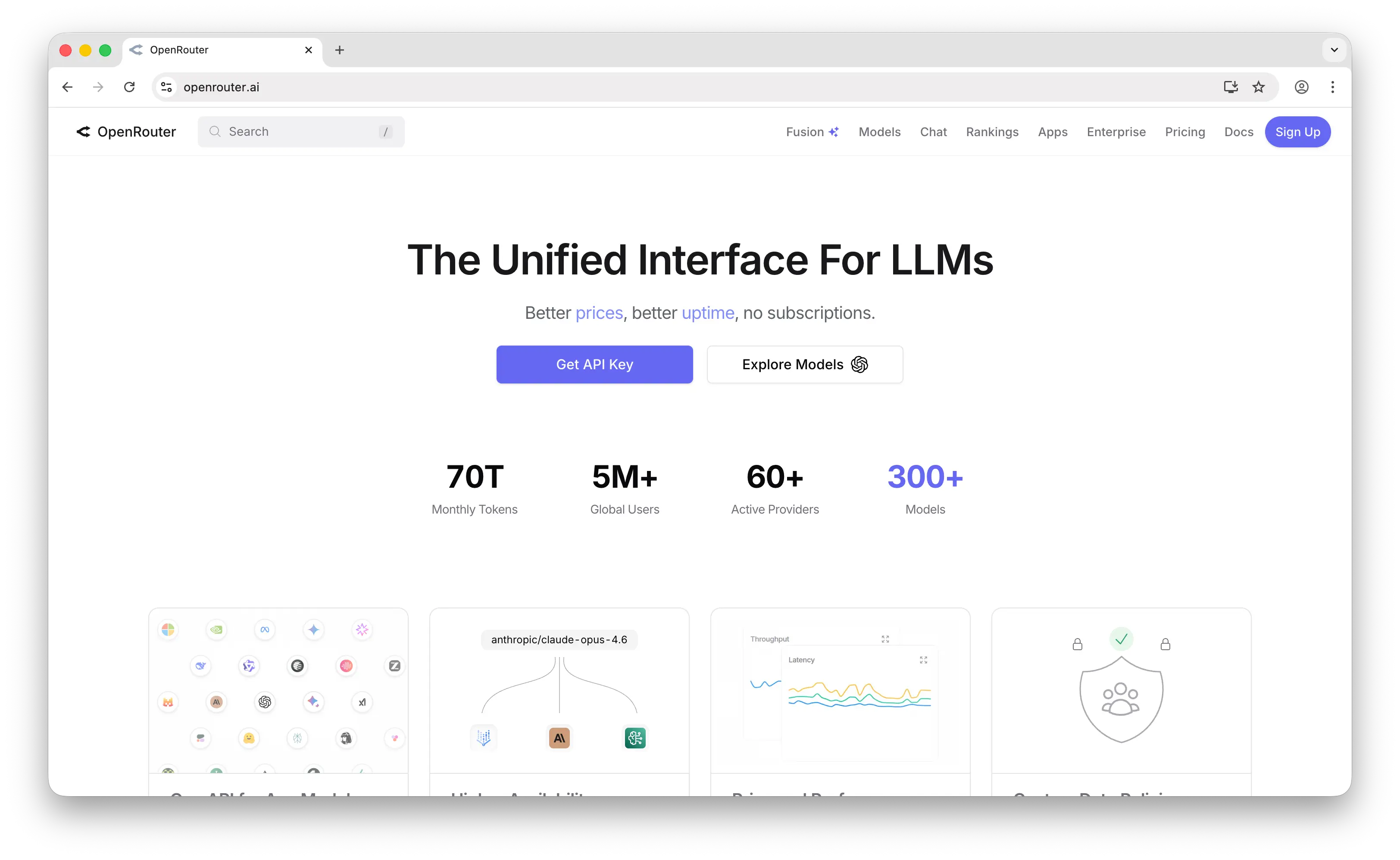

2. OpenRouter

OpenRouter is a unified API gateway that provides access to 300+ models from 60+ providers through a single OpenAI-compatible endpoint, with automatic fallback when a provider goes down.

What Makes It Different

OpenRouter takes the opposite approach to RunPod. Instead of giving you a dedicated endpoint to call, it gives you one API key and a single shared endpoint that routes to whichever provider has the model you need, billed per token. You never see hardware. You don't think about workers, GPU types, container images, or autoscaling rules. You change one parameter to switch from Claude to GPT-5 to DeepSeek.

It's also one of the few options on this list that offers strong closed-source model support (GPT, Claude, Gemini, Grok), which on RunPod would simply be impossible since those models aren't open-weight—even with the RunPod Hub, you can only deploy open-source models. Pricing is per-token at provider list prices with a 5.5% credit purchase fee, plus a free tier with 25+ models capped at around 20 requests per minute.

Key Differences from RunPod

OpenRouter is inference-only and LLM-focused. There's no GPU rental, no fine-tuning pipeline, no container deploys, no training runs. The pricing model is also fundamentally different: per-token shared inference (you pay only for tokens consumed) vs. RunPod's per-second dedicated workers (you pay for the GPU regardless of how many tokens flow through it). For low-volume LLM workloads OpenRouter is dramatically cheaper and simpler. For sustained high-volume workloads or anything that isn't "call an LLM and get text back," RunPod's economics start to win.

Comparison Table

| Feature | OpenRouter | RunPod |

|---|---|---|

| Pricing model | Per-token + 5.5% credit fee | Per-second GPU compute |

| Service type | Shared API gateway | Dedicated endpoints + GPU cloud |

| Setup time | API key + 1 endpoint | Click-deploy from Hub or custom container |

| Models available | 300+ from 60+ providers | Open-source via Hub or any model you containerize |

| Closed-source models (GPT, Claude, etc.) |  Extensive Extensive |

(open-weights only) (open-weights only) |

| Open-source models |  |

(via Hub or custom) (via Hub or custom) |

| Automatic fallback/routing |  |

|

| Cold starts | None (shared pool) | Sub-200ms with Flash Boot, slower otherwise |

| Custom Docker containers |  |

|

| Custom GPU control |  |

|

| Image generation |  |

Via Hub (your endpoint) |

| Video generation | Experimental | Via Hub (your endpoint) |

| Audio (TTS/STT) |  (newly added) (newly added) |

Via Hub (your endpoint) |

| Fine-tuning |  |

|

| Free tier | 25+ free models, 20 req/min | None (free trial credits only) |

| Best for | LLM apps wanting multi-provider routing | Teams needing dedicated endpoints + custom workloads |

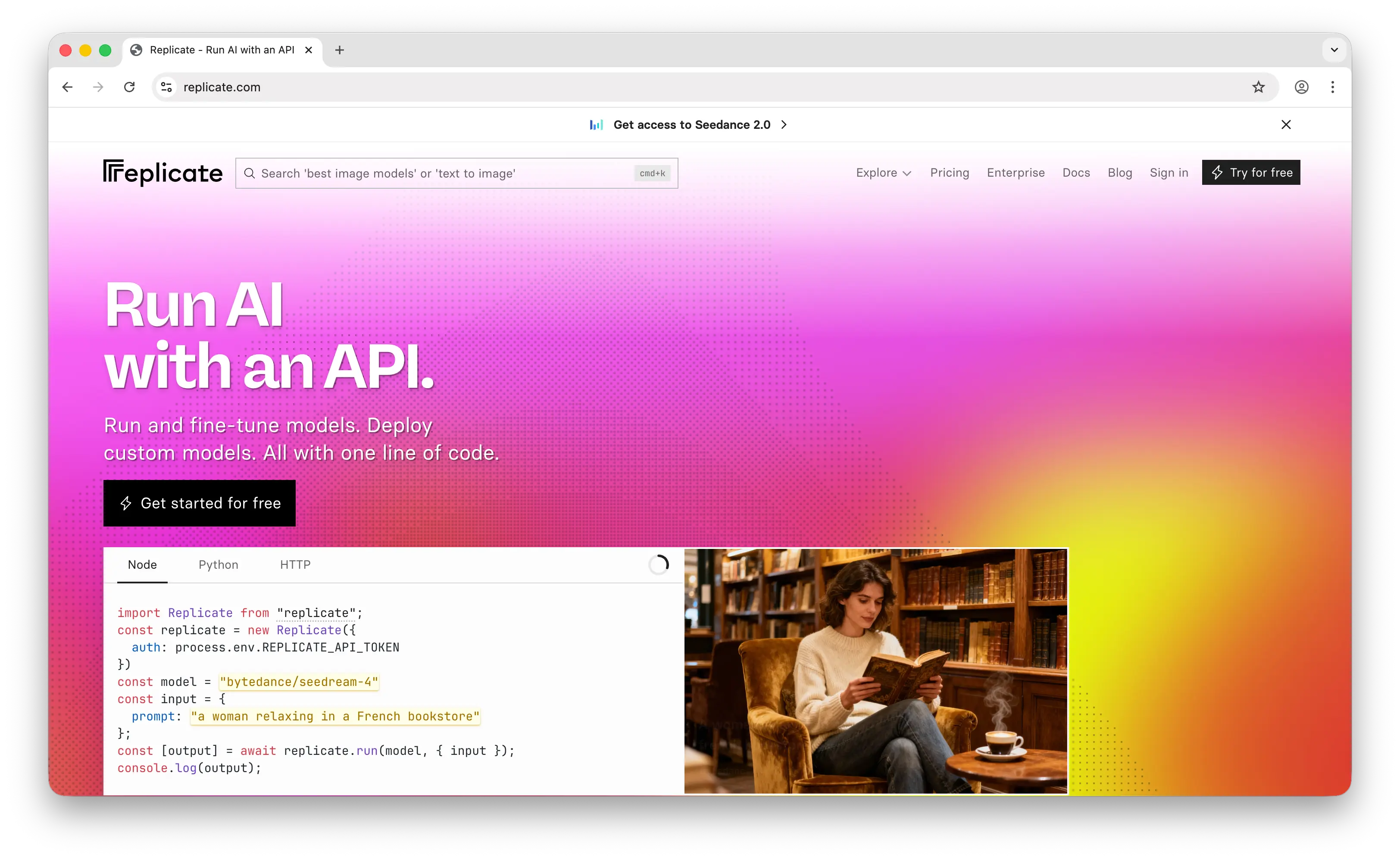

3. Replicate

Replicate is a serverless platform for running AI models, with a community model marketplace at its core. It was acquired by Cloudflare in November 2025 but continues to operate as a distinct brand.

What Makes It Different

Replicate is the closest spiritual cousin to RunPod's Serverless tier with a massive community catalog layered on top. Both platforms let you click-deploy a model and get a callable endpoint, but Replicate's catalog (50,000+ models) is roughly two orders of magnitude larger than RunPod Hub's, and the model creation flow is more open—anyone can publish a model using Cog, Replicate's open-source packaging format.

Replicate also offers a different billing wrinkle through its 100+ "official models," which are kept always-warm with predictable per-output pricing (per image, per token, per second of video) instead of raw hardware time. This gives you something closer to a per-token shared API for popular models—a layer RunPod doesn't currently offer, since even Hub deploys are billed per-second on your own workers.

Key Differences from RunPod

Replicate trades GPU control for convenience. You cannot pick your GPU, you cannot bring an arbitrary Docker container (only Cog-packaged models), and cold starts on custom model deployments can exceed 60 seconds when scaling from zero unless you pay to keep instances warm. RunPod gives you 30+ GPU SKUs, accepts any Docker image, and has Flash Boot for sub-200ms cold starts. RunPod also has Community Cloud / spot pricing for cheap batch workloads—Replicate has no equivalent. With the Cloudflare acquisition (November 2025), Replicate's models are increasingly running on Cloudflare's global edge network, which may improve latency over time but doesn't change the fundamental tradeoffs.

Comparison Table

| Feature | Replicate | RunPod |

|---|---|---|

| Pricing model | Per-second compute (or per-output for official models) | Per-second GPU compute |

| Service type | Model marketplace + serverless | GPU cloud + Serverless + Hub |

| Pre-built model catalog | 50,000+ community models | Smaller (RunPod Hub, growing) |

| Per-output / per-token pricing |  (official models) (official models) |

(always per-second) (always per-second) |

| Custom model deploy |  (via Cog only) (via Cog only) |

(any Docker container) (any Docker container) |

| Cold starts | Up to 60s+ on custom deployments | Sub-200ms with Flash Boot |

| GPU selection |  (auto-managed) (auto-managed) |

(30+ options) (30+ options) |

| Container support | Cog-packaged only | Any Docker container |

| Image generation |  Excellent Excellent |

Via Hub |

| Video generation |  Excellent Excellent |

Via Hub |

| Audio models |  |

Via Hub |

| Chat/LLM models |  (growing) (growing) |

Via Hub (Llama, Mistral, etc.) |

| Fine-tuning |  |

|

| Spot/Community pricing |  |

(significantly cheaper) (significantly cheaper) |

| GPU clusters / multi-GPU training |  |

|

| Edge deployment |  (via Cloudflare) (via Cloudflare) |

|

| Best for | Largest community catalog, media generation | Cost-conscious teams wanting GPU control |

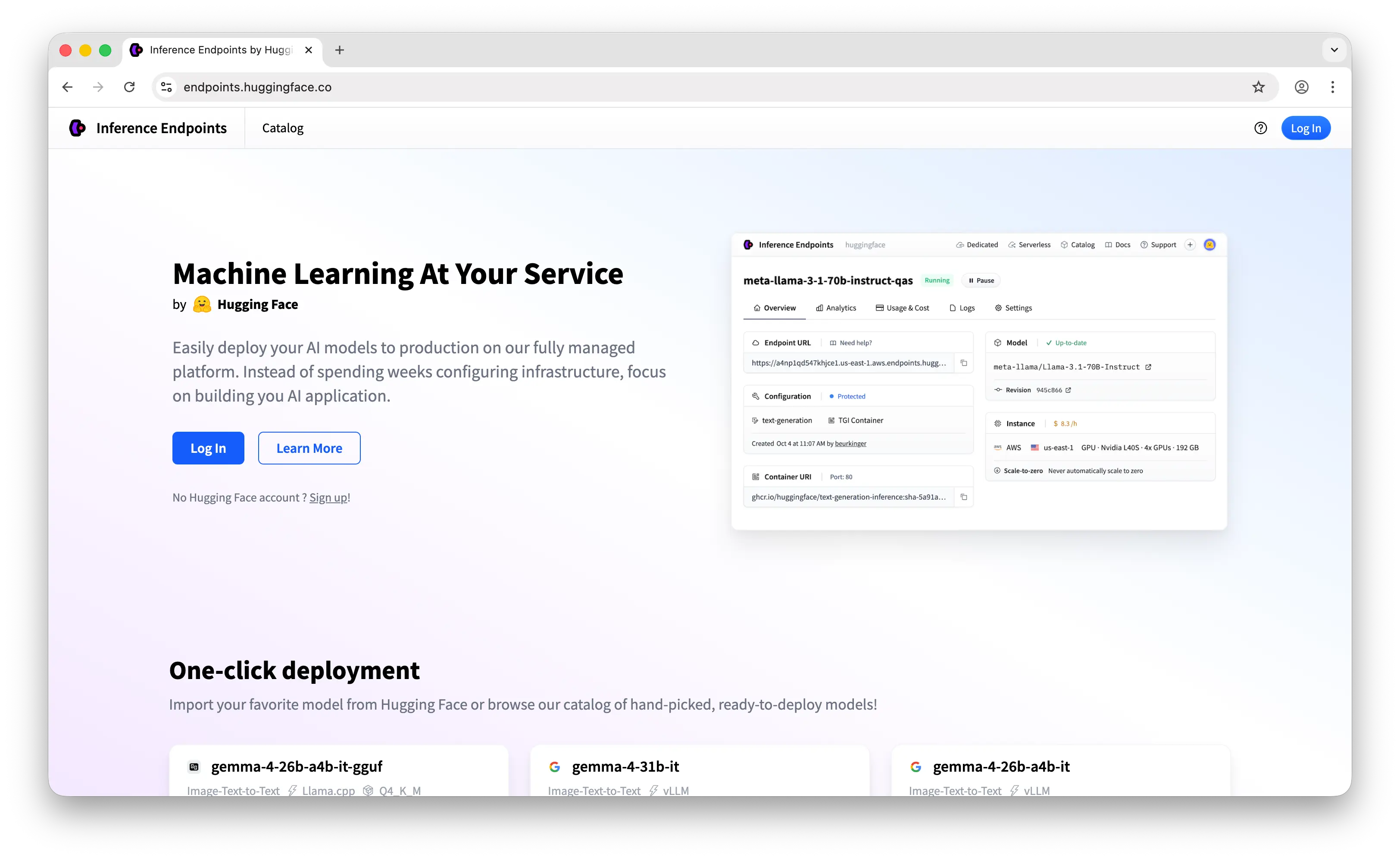

4. Hugging Face Inference Endpoints

Hugging Face Inference Endpoints is a managed deployment service that lets you turn any model on the Hugging Face Hub into a production API on dedicated AWS, Azure, or GCP infrastructure.

What Makes It Different

Hugging Face Inference Endpoints is the most natural path from research to production if your team already lives on the Hugging Face Hub. The model catalog is enormous—60,000+ Transformers, Diffusers, and Sentence Transformers models, all a few clicks away from a deployed endpoint. RunPod Hub has a similar click-deploy flow but a much smaller catalog focused on the most popular open-source models, so HF wins decisively on breadth and on the long tail of niche or research-grade models.

It also offers VPC/private endpoints for compliance-heavy use cases on AWS, Azure, or GCP, which is genuinely rare among RunPod alternatives. If you need data residency in a specific region, hardware diversity (CPU, GPU, TPU, Inferentia 2), or for inference traffic to never touch the public internet, this is one of the few platforms on this list that natively supports it.

Key Differences from RunPod

Hugging Face charges by the hour of instance uptime, not the second of active compute, and pricing for managed instances tends to run higher than RunPod for equivalent hardware (you're paying for the managed layer, the Hub integration, and the major-cloud backing). There are also no built-in spending caps or automated warnings, which means a runaway autoscaler can produce a surprise bill—RunPod has configurable spend limits and a default $80/hour cap that's easier to reason about. RunPod also has Community Cloud / spot pricing for cheap batch workloads, which HF Endpoints simply doesn't offer.

Comparison Table

| Feature | HF Inference Endpoints | RunPod |

|---|---|---|

| Pricing model | Per-hour instance pricing | Per-second compute |

| Service type | Managed deployment from Hub | GPU cloud + Serverless + Hub |

| Cloud providers | AWS, Azure, GCP | Own infrastructure (31 global regions) |

| Pre-built model catalog | 60,000+ from HF Hub | Smaller (RunPod Hub, growing) |

| Setup time | Few clicks from any Hub model | Few clicks from Hub (or custom container) |

| Auto-scaling |  |

|

| Scale-to-zero |  |

(Serverless) (Serverless) |

| Custom Docker containers |  |

|

| GPU selection | CPU, GPU, TPU, Inferentia 2 | 30+ NVIDIA GPUs |

| VPC / private endpoints |  |

Limited (Secure Cloud) |

| Hub integration (datasets, model versioning) | Native |  |

| Spending caps |  (no built-in) (no built-in) |

($80/hr default) ($80/hr default) |

| Spot / Community-tier pricing |  |

|

| Egress fees | Cloud provider rates apply | Zero |

| SOC 2 compliance |  |

(Secure Cloud) (Secure Cloud) |

| Inference Providers (managed routing) |  |

|

| Best for | HF ecosystem teams + compliance needs | Cost-conscious teams + GPU flexibility |

5. Together AI

Together AI is a full-stack AI inference and training platform. Of all the alternatives on this list, it's the most direct competitor to RunPod, offering serverless inference, dedicated endpoints, and self-serve GPU clusters under one roof.

What Makes It Different

Together AI bundles everything an AI-native team typically needs: 200+ pre-deployed open-source models behind a serverless per-token API (you call a shared endpoint, pay only for tokens), dedicated per-minute endpoints for predictable workloads, and instant GPU clusters from 8 to 4,000+ GPUs with InfiniBand interconnect for large training runs. The platform is research-led, with FlashAttention creator Tri Dao as Chief Scientist, and ships a custom inference stack that they claim is 4x faster than vLLM.

This is the most important difference from RunPod: even when you click-deploy Llama 3 from RunPod Hub, you're paying per-second of GPU time on your own dedicated workers. On Together AI's serverless inference, you call a shared endpoint and pay only for tokens consumed—no idle workers, no warm-up costs, no autoscaling configuration. Together also offers a real fine-tuning pipeline (LoRA + full parameter), a Batch Inference API at 50% off for async workloads, and top-tier hardware including H200, B200, and GB200 NVL72.

Key Differences from RunPod

Together AI focuses on managed open-source inference rather than arbitrary container workloads. You can deploy a fine-tuned model, but you cannot bring an arbitrary Docker container the way you can on RunPod—if your workload is "run this random Python script on a 4090," RunPod is the right tool. Together also doesn't offer Community Cloud / spot pricing, so for cost-sensitive batch jobs RunPod typically wins on raw economics. The flip side: for low-to-medium traffic LLM workloads, Together's per-token serverless is dramatically cheaper than spinning up a dedicated RunPod endpoint and paying for idle time.

Comparison Table

| Feature | Together AI | RunPod |

|---|---|---|

| Pricing model | Per-token (serverless) + per-minute (dedicated) | Per-second GPU compute |

| Service type | Shared inference + dedicated endpoints + GPU clusters | Dedicated endpoints + GPU cloud + Hub |

| Pre-built models | 200+ open-source (shared per-token) | Open-source via Hub (your endpoint) |

| Per-token shared inference |  |

|

| Closed-source models |  |

|

| Inference optimization | Custom stack (claimed 4x vs vLLM) | Standard runtime |

| Serverless inference |  (per-token) (per-token) |

(per-second) (per-second) |

| Dedicated endpoints |  (per-minute) (per-minute) |

(per-second) (per-second) |

| GPU clusters |  (8 to 4,000+ GPUs, InfiniBand) (8 to 4,000+ GPUs, InfiniBand) |

|

| Top-tier hardware | H100, H200, B200, GB200 NVL72 | H100, H200, B200, and more |

| Fine-tuning |  (LoRA + full param) (LoRA + full param) |

|

| Batch inference |  (50% discount) (50% discount) |

DIY |

| Custom Docker containers |  |

|

| Spot / Community-tier pricing |  |

|

| Image generation |  |

Via Hub |

| Audio models |  |

Via Hub |

| Embeddings / Reranking |  |

Via Hub |

| Best for | Open-source LLM apps at scale | Custom workloads + GPU/container control |

Which Should You Choose?

Choose Puter.js if you're building a web app and want to add AI features without renting GPUs, managing containers, or paying for inference. The user-pays model is ideal for developers who don't want to cover their users' AI costs and want to ship in minutes instead of days.

Choose OpenRouter if your workload is purely LLM-based and you want access to closed-source models like GPT and Claude alongside open-source options, all behind a single OpenAI-compatible endpoint with automatic provider fallback.

Choose Replicate if your focus is media generation (images, video, audio) or you need access to a massive community-published model catalog without building your own containers. Its compute-time pricing model works well for GPU-intensive media workloads.

Choose Hugging Face Inference Endpoints if your team already lives on the Hugging Face Hub or you need VPC-private deployments on AWS, Azure, or GCP. It's the cleanest path from a Hub model to a production API with compliance guarantees.

Choose Together AI if you need to fine-tune open-source models, run inference at high scale with optimized performance, or spin up dedicated GPU clusters for training. It's the most powerful alternative for ML-heavy teams that want a managed but flexible stack.

Stick with RunPod if you need raw GPU control, want to bring your own arbitrary Docker container, want to click-deploy popular open-source models from RunPod Hub as your own dedicated endpoint, care about extracting the lowest possible $/GPU-hour through Community Cloud and spot pricing, or are running custom workloads that don't fit into shared per-token inference APIs.

Conclusion

The top 5 RunPod alternatives are Puter.js, OpenRouter, Replicate, Hugging Face Inference Endpoints, and Together AI. Each takes a different approach to the same underlying problem of running AI models in production—from Puter.js's zero-cost frontend integration, to OpenRouter's API-gateway abstraction, to Together AI's full-stack inference platform. Whichever platform you choose, the best option is the one that fits your stack, your budget, and how your users will interact with AI in your app.

Related

- Getting Started with Puter.js

- Top 5 OpenRouter Alternatives (2026)

- Best Replicate Alternatives (2026)

- Best Together AI Alternatives (2026)

- Best fal.ai Alternatives (2026)

- User-Pays vs Traditional Model

- Top 5 Google AI Studio Alternatives (2026)

- Top 5 Vertex AI Alternatives (2026)

- Best AWS Bedrock Alternatives (2026)

- Best ElevenLabs Alternatives (2026)

- Top 5 Supabase Alternatives (2026)

- Top 5 Firebase Alternatives (2026)

- Best Appwrite Alternatives (2026)

- Top 5 Amazon S3 Alternatives (2026)

Free, Serverless AI and Cloud

Start creating powerful web applications with Puter.js in seconds!

Get Started Now