Top 5 DeepInfra Alternatives (2026)

DeepInfra is hard to beat for high-throughput open-source inference: 190+ models on its own GPU fleet, low per-token prices, cached-prompt billing, and enterprise compliance (SOC 2, ISO 27001, HIPAA). But it's not the right shape for every project: closed-source frontier models, frontend-only integration, custom or fine-tuned models, raw GPU access, and media generation at scale all sit outside what it's designed for. This article walks through five DeepInfra alternatives that each fill a different gap.

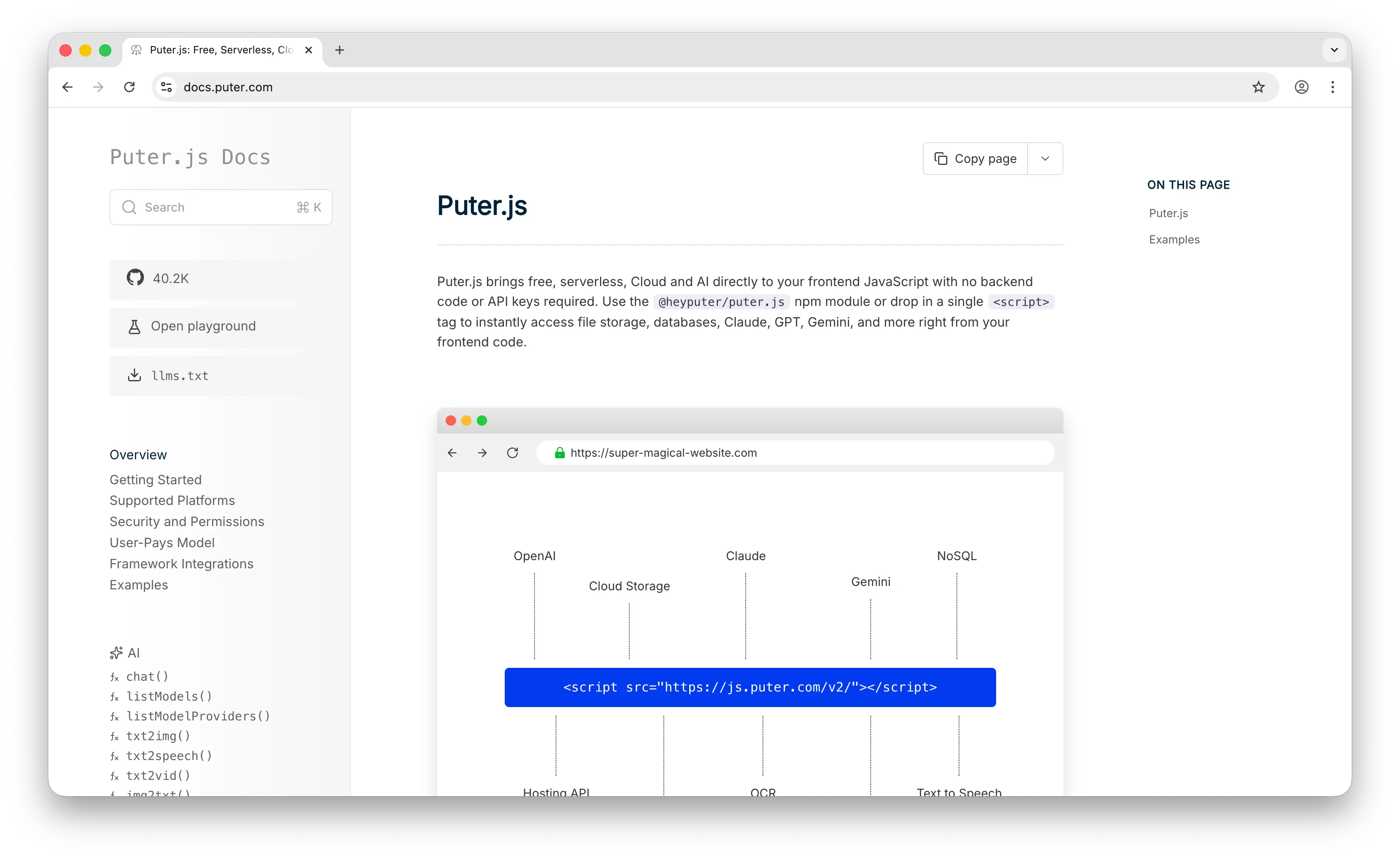

1. Puter.js

Puter.js is a JavaScript library that bundles AI, database, cloud storage, authentication, and more into a single package. It has extensive support for AI models, over 400 and growing, from providers like OpenAI, Anthropic, Google, Meta, and others.

What Makes It Different

DeepInfra's business model is built on per-token pricing: the cheaper they make a million tokens, the more competitive they are. Puter.js sidesteps that economy entirely with its User-Pays Model, where each end user covers their own AI usage through their Puter account. The developer's bill doesn't scale with usage because the developer isn't billed at all. There's no API key to manage, no backend to host, no concurrency limit to plan around, and no cached-prompt math to optimize: you drop a `<script>` tag in your HTML and call `puter.ai.chat()`.

The model catalog reflects a different priority too. DeepInfra is open-source-first and serves a curated set of around 190 models optimized for cost-efficient throughput. Puter.js exposes 400+ models, including the closed-source frontier ones DeepInfra mostly doesn't offer (GPT-5.x, Claude, Gemini, and Grok) alongside the same open-source families. It also covers multimodal ground DeepInfra doesn't try to compete on (text-to-video, video analysis, voice changing, OCR), plus bundled cloud storage, a key-value database, auth, and static hosting in the same SDK.

Key Differences from DeepInfra

The two are aimed at different builders. DeepInfra is server-side infrastructure for teams running their own production inference, with the compliance certifications and 200-concurrent-request ceiling that come with that role. Puter.js is frontend-first, designed for web apps where the user is in front of a browser and can authenticate against their own Puter account. It works in Node.js, but the user-pays mechanic only really makes sense when there's a user present. Puter also doesn't currently offer embeddings models, and it doesn't carry DeepInfra's first-party SOC 2 / ISO 27001 / HIPAA certifications, which matters if you're selling into regulated industries.

Comparison Table

| Feature | Puter.js | DeepInfra |
|---|---|---|
| API key required | No | Yes |
| Pricing model | User-pays (free for devs) | Pay-per-token (dev pays) |
| Backend required | No (frontend SDK) | Yes (server-side calls) |
| Chat models | 400+ | 190+ |
| Closed-source models | Extensive (GPT, Claude, Gemini, Grok) | Limited |
| Open-source models | Yes | Extensive |
| Image generation | | |
| Video generation | Yes | Limited |
| Audio (TTS/STT) | | |
| Voice changing | Yes | No |
| Embeddings | No | Yes |
| Storage & auth bundled | Yes | No |
| Cached prompt pricing | N/A (user-pays) | Yes |
| Enterprise compliance | Limited | SOC 2, ISO 27001, HIPAA |
| Model update speed | Fast | Fast |
| Best for | Frontend/web app devs who want zero-cost AI integration | Backend teams running open-source models at production scale |

2. OpenRouter

OpenRouter is a unified API gateway that provides access to 300+ models from 60+ providers, including DeepInfra itself, through a single OpenAI-compatible endpoint.

What Makes It Different

OpenRouter is fundamentally a routing layer, not infrastructure. Instead of running its own GPUs like DeepInfra, it forwards requests to whichever upstream provider has the best price, latency, or availability for the model you request. This gives you one API key and one bill across every major lab, including closed-source frontier models like Claude, GPT, Gemini, and Grok that DeepInfra mostly doesn't offer.

It also has automatic fallback routing, so if one provider errors or rate-limits you, OpenRouter retries the request on another provider that hosts the same model. You're only billed for the successful run. There's also a free tier with rate-limited access to 25+ free models, useful for prototyping.
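The fallback behavior described above can be pictured with a small sketch. This is only an illustration of the general pattern (try one host, move to the next on failure), not OpenRouter's actual implementation; `complete_with_fallback`, `flaky_provider`, and `healthy_provider` are hypothetical names invented for the example, and the providers are plain local functions rather than real API calls.

```python
from typing import Callable, Sequence


class AllProvidersFailed(Exception):
    """Raised when every provider in the list errors out."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each (name, call) pair in order; return (name, text) from the first success."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real client would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins for two upstream hosts serving the same model.
def flaky_provider(prompt: str) -> str:
    raise RuntimeError("429 rate limited")


def healthy_provider(prompt: str) -> str:
    return f"echo: {prompt}"


if __name__ == "__main__":
    used, text = complete_with_fallback(
        "hello",
        [("provider-a", flaky_provider), ("provider-b", healthy_provider)],
    )
    print(used, text)
```

Only the call that succeeds produces a result, which mirrors the "billed for the successful run" idea; a gateway does this server-side so the client sees a single request.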

Key Differences from DeepInfra

OpenRouter charges a 5.5% platform fee on credit card purchases (5% on crypto), and some models, notably Anthropic's, reportedly carry an additional markup over direct provider pricing. DeepInfra, by contrast, owns its inference stack, so its per-token price is the price; there's no aggregator margin. OpenRouter also doesn't offer cached prompt pricing across providers in a unified way, and its routing adds a small latency hop that DeepInfra's direct serving doesn't have. If you've already settled on a specific open-source model and want the lowest predictable price, DeepInfra usually wins; if you want breadth and provider-agnostic flexibility, OpenRouter wins.
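To see what the platform fee does to an effective per-token price, here is a rough calculation. It assumes the 5.5% fee is added on top of the credits you buy and that nothing else is marked up; the $0.50 per million tokens list price is a made-up figure for illustration, and both function names are hypothetical.

```python
def cost_with_platform_fee(credits_usd: float, fee_rate: float = 0.055) -> float:
    """Total charged for a credit purchase, assuming the fee is added on top."""
    return credits_usd * (1 + fee_rate)


def effective_price_per_million(list_price_usd: float, fee_rate: float = 0.055) -> float:
    """Per-million-token price once the purchase fee is spread over the credits."""
    return list_price_usd * (1 + fee_rate)


if __name__ == "__main__":
    # Hypothetical list price of $0.50 per million tokens.
    print(f"${cost_with_platform_fee(100.0):.2f} charged for $100 of credits")
    print(f"${effective_price_per_million(0.50):.4f} effective price per million tokens")
```

Any per-model markup over direct provider pricing would stack on top of this, so the figure is a floor rather than an estimate of the real bill.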

Comparison Table

| Feature | OpenRouter | DeepInfra |
|---|---|---|
| Pricing model | Pay-as-you-go (5.5% credit fee) | Pay-per-token (direct) |
| Markup | 5.5% on credit + per-model variance | At cost (own infra) |
| Owns infrastructure | No (router) | Yes (8 US DCs) |
| Open-source models | Yes | Extensive (190+) |
| Closed-source models | Extensive (Claude, GPT, Gemini, Grok) | Limited |
| Number of models | 300+ from 60+ providers | 190+ |
| Fallback/routing | Automatic | |
| BYOK support | | |
| Free tier | Yes (rate-limited) | |
| Cached prompt pricing | Limited | Yes |
| Audio (TTS/STT) | Yes (recently added) | |
| Image generation | Limited | |
| Function calling | | |
| Enterprise compliance | Custom data policies | SOC 2, ISO 27001, HIPAA |
| Model update speed | Fast | Fast |
| Best for | Devs wanting one key across many providers, including closed-source | Teams optimizing open-source inference cost at scale |

3. Replicate

Replicate is a pay-as-you-go platform for running AI models that grew in popularity alongside the Stable Diffusion wave. It was acquired by Cloudflare in November 2025 but continues to operate as a distinct brand.

What Makes It Different

Replicate has one of the largest model catalogs in the industry, with over 50,000 production-ready models, including models from individual developers and the community, not just big labs. It has excellent media generation support (image, video, audio) with deep customizability, far beyond what DeepInfra offers in those categories. Models are containerized using Cog, Replicate's open-source packaging format, so you can publish and serve your own custom models, something DeepInfra's curated catalog doesn't allow.

Replicate's pricing is based on compute time: you're charged per second of GPU runtime rather than per token. For media generation workloads like image, video, and audio, where token-based pricing doesn't apply, this is often more natural and cost-effective. About 100 "official models" are also priced by output (per image, per second of video, etc.) and stay always-warm to avoid cold starts.

Key Differences from DeepInfra

DeepInfra is text-first and optimized for high-throughput LLM serving, with low per-token rates and cached prompt pricing built around that workload. Replicate's per-second compute billing is a different shape: it can include cold-boot time for community models, so a model that has idled out adds compute charges to the next request. With the Cloudflare acquisition, Replicate's models will increasingly run on Cloudflare's global network, which should reduce some of those rough edges over time. DeepInfra also has stronger first-party enterprise compliance positioning out of the box.
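A quick way to reason about per-second billing with cold boots is to add the boot time to the billed runtime. The sketch below does exactly that; the $0.001 per second rate, the 8-second run, and the 30-second cold boot are invented numbers, and `per_second_cost` is a hypothetical helper, not part of any Replicate SDK.

```python
def per_second_cost(
    run_seconds: float,
    usd_per_second: float,
    cold_boot_seconds: float = 0.0,
) -> float:
    """Cost of one request when both boot time and runtime are billed per second."""
    return (run_seconds + cold_boot_seconds) * usd_per_second


if __name__ == "__main__":
    rate = 0.001  # hypothetical GPU rate in USD per second
    warm = per_second_cost(run_seconds=8, usd_per_second=rate)
    cold = per_second_cost(run_seconds=8, usd_per_second=rate, cold_boot_seconds=30)
    print(f"warm request: ${warm:.4f}")
    print(f"cold request: ${cold:.4f}")
```

With these made-up inputs the cold request costs several times the warm one, which is why infrequently called community models can look more expensive than their per-second rate suggests.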

Comparison Table

| Feature | Replicate | DeepInfra |
|---|---|---|
| Pricing model | Per-second compute (or per-output for official models) | Per-token |
| Cold start charges | Possible on community models | No |
| Number of models | 50,000+ (community + official) | 190+ curated |
| Open-source LLMs | Yes (growing) | Extensive |
| Closed-source models | Some (via partnerships) | Limited |
| Image generation | Excellent | |
| Video generation | Excellent | Limited |
| Audio models | Yes | |
| Embeddings | Limited | |
| Custom model deployment | Yes (via Cog) | No |
| Cached prompt pricing | No | Yes |
| OpenAI-compatible API | Partial | Yes |
| Edge/global network | Yes (via Cloudflare) | No (US data centers) |
| Enterprise compliance | Inherits Cloudflare's | SOC 2, ISO 27001, HIPAA |
| Best for | Media generation, community models, custom model hosting | High-throughput LLM inference with predictable token costs |

4. Together AI

Together AI is a full-stack AI inference and training platform, and the most direct competitor to DeepInfra on this list. Both offer serverless inference for open-source models with OpenAI-compatible APIs and pay-per-token pricing.

What Makes It Different

Together AI is not just an inference API; it's an AI infrastructure platform. Beyond serverless inference, it offers first-class LoRA fine-tuning on every major Llama, Mistral, and Qwen size (including Llama 3.1 405B), dedicated GPU endpoints with reserved compute, GPU clusters by the hour (H100 at $3.49/hr, B200 at $7.49/hr), and batch inference at up to 50% off for async workloads. DeepInfra is serverless inference only; if you need to fine-tune or rent raw GPUs, you'd need a second vendor.

Together's research team is also a real differentiator: the platform originated FlashAttention, ThunderKittens, and more recently the ATLAS speculative decoding system, which it uses to claim roughly 2x faster inference than the next fastest provider on several models. New users get $25 in free credits, and the startup accelerator offers $15K–$50K in credits for qualifying companies.

Key Differences from DeepInfra

DeepInfra tends to be slightly cheaper on small models (Llama 8B at $0.06/M vs Together's $0.10–0.18/M) and offers cached-input pricing on select models, which Together generally does not. DeepInfra's own benchmarks emphasize cost efficiency on high-throughput agentic workloads, while Together leans on its speed-per-token advantage and the breadth of its training stack. If your only need is the cheapest open-source token serving, DeepInfra often edges it out; if you also need to fine-tune or run dedicated workloads, Together's all-in-one platform saves you from stitching multiple vendors together.
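To put the small-model price gap in concrete terms, the sketch below applies the per-million-token figures quoted above to a monthly volume. The one-billion-token volume is an assumed workload, the calculation treats each price as a single blended rate (no input/output split, no cached-prompt discount), and `monthly_token_cost` is a hypothetical helper.

```python
def monthly_token_cost(tokens: int, usd_per_million_tokens: float) -> float:
    """Cost of a token volume at a flat per-million-token price."""
    return tokens / 1_000_000 * usd_per_million_tokens


# Llama 8B prices quoted in the text, in USD per million tokens.
PRICES = {
    "DeepInfra": 0.06,
    "Together AI (low end)": 0.10,
    "Together AI (high end)": 0.18,
}

if __name__ == "__main__":
    volume = 1_000_000_000  # assumed workload: one billion tokens per month
    for provider, price in PRICES.items():
        print(f"{provider}: ${monthly_token_cost(volume, price):,.2f}")
```

At that volume the difference is roughly $60 versus $100 to $180 per month, so the gap only becomes a deciding factor at fairly large token counts.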

Comparison Table

| Feature | Together AI | DeepInfra |
|---|---|---|
| Pricing model | Per-token (serverless), per-minute (dedicated), per-hour (GPU) | Per-token |
| Small model floor price | ~$0.10–0.18/M tokens | ~$0.06/M tokens |
| Open-source models | Extensive (200+) | Extensive (190+) |
| Closed-source models | | Limited |
| Fine-tuning (LoRA) | Up to Llama 3.1 405B | No |
| Dedicated endpoints | Yes | No |
| GPU clusters (hourly) | H100, H200, B200 | No |
| Batch inference | Up to 50% off | |
| Cached prompt pricing | No (mostly) | Yes |
| Image generation | | |
| Audio models | | |
| Embeddings | | |
| Reranking models | | Limited |
| Free credit | $25 + up to $50K via accelerator | |
| Inference speed claim | ~2x next-fastest provider | Optimized on own H100/B200 |
| Enterprise compliance | | SOC 2, ISO 27001, HIPAA |
| Best for | Teams needing inference + fine-tuning + raw GPUs in one place | Teams optimizing pure open-source inference cost at scale |

5. RunPod

RunPod is a GPU cloud platform focused exclusively on AI and machine learning workloads, offering raw GPU rentals, serverless GPU endpoints, and multi-node clusters across 31 global regions.

What Makes It Different

RunPod is a different category from DeepInfra. Where DeepInfra is a managed inference API (you call a model and pay per token), RunPod is raw GPU access (you rent the hardware and run whatever stack you want). You bring your own Docker container, deploy any model, and pay by the second of GPU time: RTX 4090s start at $0.34/hr, H100s at around $1.99/hr on Community Cloud. There are no token quotas, no concurrency caps from a vendor, and no curated model menu; you control the whole inference server.

RunPod splits its infrastructure into Community Cloud (cheaper, sourced from vetted GPU providers) and Secure Cloud (SOC 2 Type II, single-tenant, enterprise data centers). Its serverless GPU endpoints offer sub-200ms cold starts and scale-to-zero billing, which is the closest analog to DeepInfra's experience, but you still build the worker yourself. There are zero egress fees, and Instant Clusters let you spin up multi-node training jobs with up to 3,200 Gbps interconnect.

Key Differences from DeepInfra

The tradeoff is convenience for control and cost. DeepInfra gives you an OpenAI-compatible URL and a price-per-token; you don't touch infrastructure. RunPod gives you the GPU and expects you to ship the inference server (vLLM, TGI, ComfyUI, your own code), handle cold starts, manage model files, and monitor throughput. For most teams just calling a Llama or DeepSeek model, DeepInfra is faster to ship with and often cheaper per token at low volume. For teams running custom models, fine-tuned variants, training jobs, or anything where token-based pricing doesn't fit, RunPod typically costs 60–90% less than hyperscalers and gives you flexibility DeepInfra can't.
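One way to frame the per-token versus rented-GPU decision is a break-even throughput: how many tokens per second a GPU must sustain before its hourly price matches a per-token API bill. The sketch below uses the H100 and Llama 8B prices quoted in this article; it assumes a single fully utilized GPU and ignores idle time, engineering effort, and storage, so treat it as a rough lower bound. `break_even_tokens_per_second` is a hypothetical helper.

```python
def break_even_tokens_per_second(
    gpu_usd_per_hour: float,
    api_usd_per_million_tokens: float,
) -> float:
    """Sustained throughput at which renting the GPU costs the same as paying per token."""
    gpu_usd_per_second = gpu_usd_per_hour / 3600
    api_usd_per_token = api_usd_per_million_tokens / 1_000_000
    return gpu_usd_per_second / api_usd_per_token


if __name__ == "__main__":
    # Prices quoted in the article: H100 at ~$1.99/hr, Llama 8B at ~$0.06/M tokens.
    tps = break_even_tokens_per_second(1.99, 0.06)
    print(f"break-even throughput: {tps:,.0f} tokens/s")
```

With those inputs the break-even lands at roughly 9,200 tokens per second of sustained load, which is consistent with the point that a managed API is often cheaper at low volume.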

Comparison Table

| Feature | RunPod | DeepInfra |
|---|---|---|
| Pricing model | Per-second GPU time | Per-token |
| Sample pricing | RTX 4090 ~$0.34/hr, H100 ~$1.99/hr | Llama 8B ~$0.06/M tokens |
| Managed inference API | Serverless endpoints (DIY worker) | Native |
| OpenAI-compatible API | No (you build it) | Yes |
| Curated model catalog | No (BYO Docker) | 190+ |
| Custom/private models | Full control | No |
| Training/fine-tuning | Yes | No |
| Multi-GPU clusters | Up to 3,200 Gbps | No |
| Cold start time | ~200ms (serverless) | ~0 (always warm) |
| Egress fees | Zero | Zero (within plan) |
| Number of GPU SKUs | 30+ (RTX 4090, A100, H100, H200, B200) | Managed (H100, A100, B200) |
| Regions | 31 global | 8 US data centers |
| Enterprise compliance | SOC 2 (Secure Cloud) | SOC 2, ISO 27001, HIPAA |
| Cached prompt pricing | DIY | Yes |
| Best for | Custom models, training, full infra control, lowest GPU prices | Plug-and-play inference on popular open-source models |

Which Should You Choose?

Choose Puter.js if you're building a web app and want to add AI features without any backend or API costs. The user-pays model is ideal for developers who don't want to worry about per-token bills scaling with their user base, and the bundled storage, auth, and database make it a complete stack for client-side apps.

Choose OpenRouter if you need access to closed-source models (Claude, GPT, Gemini, Grok) alongside open-source ones, want automatic fallback across providers, and value one bill across the entire ecosystem more than the lowest possible per-token price.

Choose Replicate if your focus is media generation (images, video, audio) or you need access to community-published models. Its compute-time pricing model works well for GPU-intensive workloads, and Cog lets you publish your own models in a way DeepInfra doesn't.

Choose Together AI if you need to fine-tune open-source models, run batch workloads, or want dedicated GPU endpoints in the same platform as your serverless inference. It's the closest direct competitor to DeepInfra and the strongest option for ML-heavy teams.

Choose RunPod if you need raw GPU access at the lowest possible price, want to run custom models or training jobs, and are comfortable building your own inference server. It's not a drop-in DeepInfra replacement; it's the build-it-yourself path.

Stick with DeepInfra if you mainly need fast, cheap, OpenAI-compatible inference on popular open-source models, with enterprise compliance and cached prompt pricing already in place. For high-throughput agentic workloads on Llama, DeepSeek, Qwen, and similar models, it's hard to beat on price per token.

Conclusion

The top 5 DeepInfra alternatives are Puter.js, OpenRouter, Replicate, Together AI, and RunPod. Each takes a different approach to the inference problem, from Puter.js's zero-cost frontend integration to OpenRouter's provider-agnostic routing to RunPod's raw GPU rentals. Whichever platform you choose, the best option is the one that fits your stack, your budget, and how your users will interact with AI in your app.
