Top 5 Modal Alternatives (2026)

Modal turned serverless GPU compute into something that feels like writing a normal Python script. Decorate a function with @app.function(gpu="H100"), push it, and Modal spins up containers in seconds, scales them out, and bills you per second of actual usage. That model works beautifully for ML engineers running custom inference, fine-tuning, or batch jobs, but it's not the right fit for every team. Some workloads don't need a Python runtime at all, some need cheaper raw GPUs, and some don't need infrastructure in the first place.

This article walks through five Modal alternatives, what each one does differently, and where each one wins.

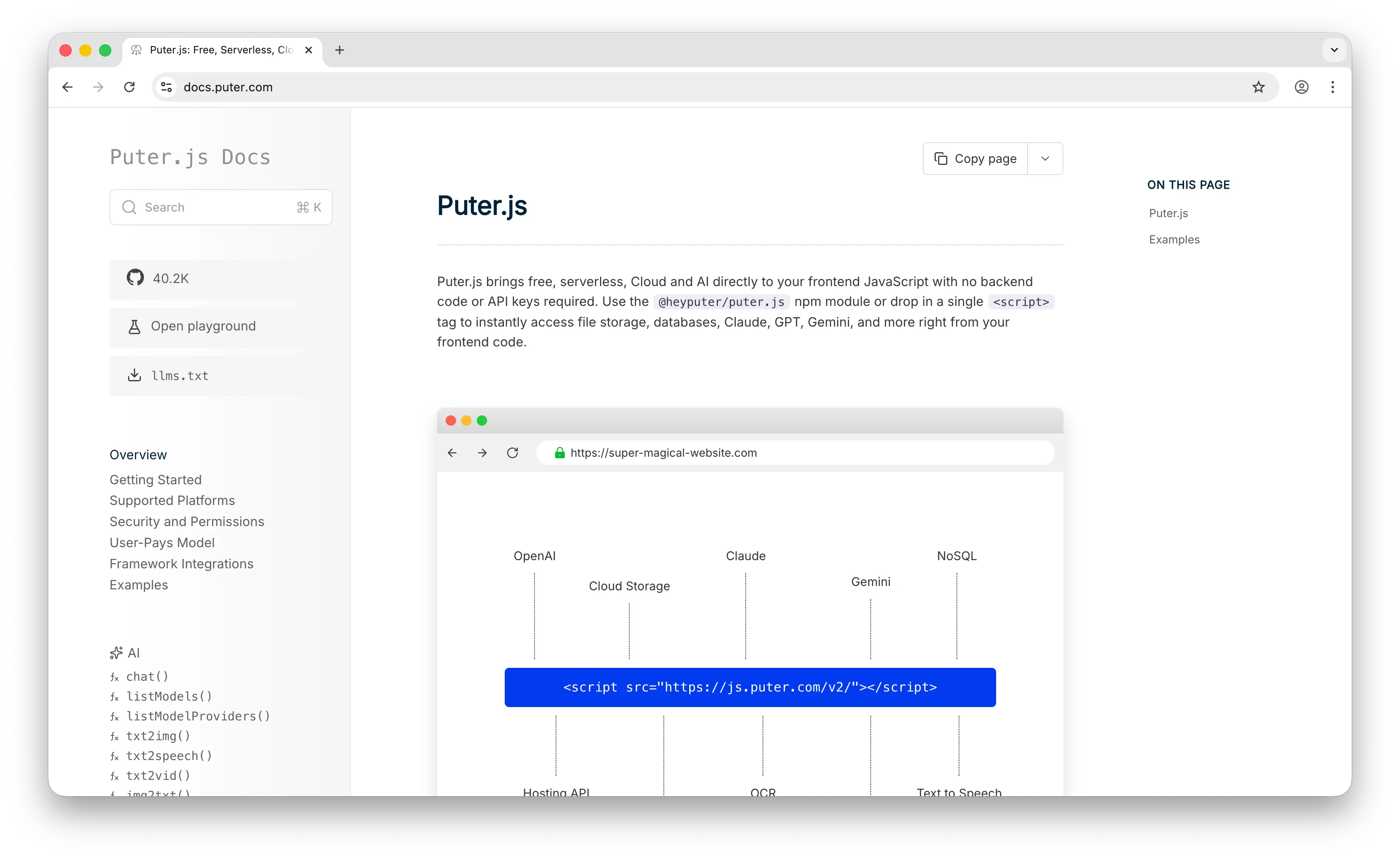

1. Puter.js

Puter.js is a JavaScript library that bundles AI, database, cloud storage, authentication, and more into a single package. It has extensive support for AI models, over 400 and growing, from providers like OpenAI, Anthropic, Google, Meta, and others.

What Makes It Different

The core idea is the User-Pays Model. When someone uses your Puter-powered app, their own Puter account is charged for any AI calls, storage, or other cloud resources they consume, not yours. You ship the code, they bring the credits. As a developer, your infrastructure bill is $0 whether the app has ten users or ten million, and there are no API keys to rotate, rate-limit, or leak. Compare that with Modal, where the developer pays for every second of GPU runtime: H100s run around $4.50/hr, and regional and non-preemptible multipliers can push real costs to as much as 3.75x the advertised rate.
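To make the developer-pays side concrete, here is a rough sketch of the arithmetic, using only the figures quoted above (~$4.50/hr for an H100 and a worst-case 3.75x multiplier). The 100 GPU-hour workload and the function name are hypothetical, and real invoices will depend on the GPU, region, and plan.

```python
# Figures quoted in the article; treat them as approximate list prices.
H100_LIST_RATE_USD_PER_HOUR = 4.50
WORST_CASE_MULTIPLIER = 3.75


def developer_gpu_bill(gpu_seconds: float, multiplier: float = 1.0) -> float:
    """Cost in USD of `gpu_seconds` of H100 time under per-second billing."""
    return round(gpu_seconds * H100_LIST_RATE_USD_PER_HOUR * multiplier / 3600, 2)


# Hypothetical workload: 100 GPU-hours of inference in a month.
seconds = 100 * 3600
list_price_bill = developer_gpu_bill(seconds)
worst_case_bill = developer_gpu_bill(seconds, WORST_CASE_MULTIPLIER)

print(list_price_bill, worst_case_bill)  # 450.0 1687.5
```

Under the user-pays model, the same usage would be charged to your users' own accounts, so the developer's share of this bill is $0.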

Beyond the billing model, Puter.js is a different shape of product. Modal hands you raw Python compute and expects you to build the AI layer on top; Puter.js hands you finished AI capabilities. A single puter.ai.chat(...) call works directly from the browser with no backend, and the same SDK covers text generation, image generation, OCR, speech-to-text, text-to-speech, video generation, and voice changing. On Modal, every one of those would be a container you write, an endpoint you maintain, and a GPU bill you absorb.

Key Differences from Modal

Puter.js is primarily designed for web apps running on the frontend, while Modal is a backend compute platform for running arbitrary Python code on GPUs. That makes Modal suitable for things Puter.js cannot do: training jobs, custom inference servers built around your own model weights, batch data pipelines, and fine-tuning. If you need full control over the Python runtime and the GPU, Modal is the right tool. If you just need AI features in a product, Puter.js skips the infrastructure layer entirely.

Comparison Table

| Feature | Puter.js | Modal |
|---|---|---|
| Primary abstraction | Frontend SDK | Serverless Python functions |
| API key required | No | Yes |
| Pricing model | User-pays (free for devs) | Per-second GPU/CPU billing |
| Cost to developer | $0 at any scale | Pays for all compute (H100 ~$4.50/hr) |
| Free tier | Unlimited for devs | $30/mo credits |
| Pre-hosted models | 400+ | No (BYO model) |
| Custom Python code | No | Yes |
| Custom Docker images | No | Yes |
| Cold starts | None (managed APIs) | 2–4 seconds |
| Audio (TTS/STT) | Yes | DIY |
| Image generation | Yes | DIY |
| Video generation | Yes | DIY |
| OCR | Yes | DIY |
| Cloud storage | Yes | Limited (Volumes) |
| Auth & database | Yes | |
| Fine-tuning | No | Yes |
| Best for | Frontend devs adding AI to web apps at zero cost | ML engineers running custom Python workloads on GPUs |

2. RunPod

RunPod is a GPU cloud platform that offers both serverless endpoints and persistent GPU pods, with one of the widest hardware catalogs in the industry and aggressive pricing.

What Makes It Different

RunPod runs across 30+ regions with 32+ GPU types, from RTX 3090s at $0.19/hr in Community Cloud up to H100s and B200s for production inference. Unlike Modal, which is serverless-only, RunPod lets you choose between per-second serverless workers (Flex Workers that scale to zero, Active Workers that stay warm at a 20–30% discount) and persistent pods that you can SSH into for long-running training jobs.

Pricing is the headline. RunPod's H100 Serverless lands around $2.50/hr versus Modal's ~$4.50/hr, and RunPod charges zero data egress fees, where Modal doesn't publish egress pricing at all. FlashBoot, RunPod's cold-start optimization, delivers sub-200ms cold starts on roughly 48% of serverless requests.
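As a back-of-the-envelope check on that gap, the sketch below prices a single job on both platforms using the hourly rates quoted above and per-second billing. The 90-second job is a hypothetical workload, and the helper names are illustrative rather than part of either platform's SDK.

```python
# Approximate H100 rates quoted in the article, in USD per hour.
RATES_USD_PER_HOUR = {"RunPod Serverless": 2.50, "Modal": 4.50}


def job_cost(rate_per_hour: float, gpu_seconds: float) -> float:
    """Cost in USD of one job billed per second at an hourly rate."""
    return round(rate_per_hour * gpu_seconds / 3600, 4)


def compare(gpu_seconds: float) -> dict[str, float]:
    """Price the same job on every platform in RATES_USD_PER_HOUR."""
    return {name: job_cost(rate, gpu_seconds) for name, rate in RATES_USD_PER_HOUR.items()}


# Hypothetical 90-second inference burst on one H100.
costs = compare(90)
savings_pct = round(100 * (1 - RATES_USD_PER_HOUR["RunPod Serverless"] / RATES_USD_PER_HOUR["Modal"]), 1)

print(costs)        # {'RunPod Serverless': 0.0625, 'Modal': 0.1125}
print(savings_pct)  # 44.4
```

This ignores cold-start time, idle warm workers, and any multipliers, all of which can move the real number in either direction.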

Key Differences from Modal

RunPod is more infrastructure-first: you deploy Docker containers and configure endpoints, where Modal lets you decorate a Python function and skip the container plumbing entirely. The DX gap is real: Modal feels closer to writing a script, while RunPod feels closer to managing infrastructure. RunPod also splits between Community Cloud (cheap, can be preempted) and Secure Cloud (~47% premium, enterprise reliability), so you give up some transparency in exchange for cost flexibility. For pure Python ergonomics, Modal wins; for raw GPU price-performance and the option of long-running pods, RunPod wins.

Comparison Table

| Feature | RunPod | Modal |
|---|---|---|
| Deployment model | Docker containers + Python SDK | Python decorators (@app.function) |
| Pricing model | Per-second (serverless) / per-minute (pods) | Per-second |
| H100 pricing | ~$2.50/hr (Serverless) | ~$4.50/hr |
| Regional multipliers | Flat pricing | Up to 2.5x for non-US regions |
| Egress fees | Zero | Not published |
| Serverless endpoints | Yes | Yes |
| Persistent GPU pods | Yes | No |
| SSH access to GPUs | Yes | No |
| Cold starts | <200ms for 48% of requests (FlashBoot) | 2–4 seconds typical |
| Scale to zero | Yes (Flex Workers) | Yes |
| GPU variety | 32+ types (RTX 3090 to B200) | T4 to H100/B200 |
| Spot/preemptible pricing | Yes (Community Cloud) | Yes (preemptible discount) |
| Region count | 30+ | Multi-region (fewer choices) |
| Developer experience | Container-first, more YAML | Python-native, minimal config |
| Best for | Cost-sensitive teams wanting GPU variety and persistent pods | Python-first teams optimizing for DX over price |

3. OpenRouter

OpenRouter is a unified LLM API gateway that provides access to 400+ models from 60+ providers through a single OpenAI-compatible endpoint.

What Makes It Different

OpenRouter is not a compute platform. It doesn't run GPUs, doesn't host model weights, and doesn't ask you to deploy anything. It's a routing and aggregation layer that takes your API call and dispatches it to the right upstream provider (Anthropic, OpenAI, Google, DeepSeek, Meta, xAI, Mistral, and dozens more), with automatic failover when a provider goes down and :nitro variants for speed-optimized routing.
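Because the gateway is OpenAI-compatible, a call is just an HTTP POST with a chat-completions payload. The sketch below only builds the request with the standard library and does not send it. The API key is a placeholder, and the model slug is illustrative and may not match a current catalog entry, so check the OpenRouter model list before using it.

```python
import json
import urllib.request

# OpenRouter's OpenAI-compatible chat completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for the gateway."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder key and an illustrative slug using the ":nitro" speed-optimized variant.
request = build_chat_request(
    api_key="sk-or-PLACEHOLDER",
    model="meta-llama/llama-3.3-70b-instruct:nitro",
    prompt="Say hello.",
)
body = json.loads(request.data)
print(request.get_method(), request.full_url)
print(body["model"])
```

Sending it would be a matter of passing `request` to `urllib.request.urlopen` with a real key. There is no container, GPU type, or scaling policy anywhere in the picture.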

Pricing is per-token, passed through from each provider with a small margin (5.5% on credit purchases). There's no GPU rental, no cold starts, and no autoscaling to think about. The only technical overhead is roughly 15ms of added routing latency.

Key Differences from Modal

These two products solve completely different problems. Modal asks "where do I run this model?" while OpenRouter asks "which provider should serve this request?" If your workload is calling existing LLMs, OpenRouter eliminates all the infrastructure work Modal exists to manage. If your workload is custom inference, fine-tuning, batch data processing, or anything that needs a Python function running on a GPU, OpenRouter cannot help and Modal is what you want. Many teams end up using both: Modal for custom workloads, OpenRouter for off-the-shelf LLM calls.

Comparison Table

| Feature | OpenRouter | Modal |
|---|---|---|
| Primary abstraction | LLM API gateway | Serverless GPU compute |
| What you deploy | Nothing | Python code + dependencies |
| Pricing model | Per-token (5.5% credit fee) | Per-second GPU/CPU |
| Free tier | Free models (rate-limited) | $30/mo credits |
| Model access | 400+ LLMs across 60+ providers | Any model you can run |
| Custom code | No | Yes |
| Custom model weights | No | Yes |
| Closed-source LLMs | Extensive | DIY (no direct access) |
| Image generation | | DIY |
| Audio (TTS/STT) | | DIY |
| Embeddings | | DIY |
| Cold starts | None (~15ms routing overhead) | 2–4 seconds |
| Fine-tuning | No | Yes |
| Batch processing | No | Yes |
| Automatic failover | Yes | |
| BYOK support | Yes | N/A |
| Best for | Apps calling existing LLMs with simple multi-provider routing | Custom Python workloads on dedicated GPUs |

4. Together AI

Together AI is a full-stack AI inference and training platform. It offers access to hundreds of open-source models through pre-hosted serverless endpoints, dedicated endpoints, and bare GPU clusters.

What Makes It Different

Together AI is built around inference research. It developed FlashAttention-3, ATLAS speculative decoding, and Mamba-3, and claims roughly 2x faster serverless inference than the next-best provider on some open-source models. Where Modal expects you to bring your own inference stack (vLLM, TGI, custom kernels), Together has 200+ models already running on its optimized stack, ready to call via API at per-token pricing (e.g. Llama 4 Maverick at $0.27/$0.85 per M input/output tokens).

Together also offers three deployment tiers in one platform: serverless tokens for ad-hoc use, dedicated endpoints with reserved GPUs for guaranteed latency, and GPU clusters (H100 at $3.49/hr, H200 at $4.19/hr, B200 at $7.49/hr) for custom workloads. Fine-tuning and batch inference (up to 30B tokens, 50% discount) are built in.
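Per-token pricing is easy to estimate up front. The sketch below uses the Llama 4 Maverick rates and the 50% batch discount quoted above. The token counts are a hypothetical workload, and it assumes the batch discount applies equally to input and output tokens, which the pricing page may define differently.

```python
# Rates quoted in the article for Llama 4 Maverick, in USD per million tokens.
INPUT_USD_PER_M = 0.27
OUTPUT_USD_PER_M = 0.85
# Assumption: the 50% batch discount applies to input and output alike.
BATCH_DISCOUNT = 0.50


def token_cost(input_tokens: int, output_tokens: int, batch: bool = False) -> float:
    """Estimated cost in USD for a given token volume."""
    cost = (input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M) / 1_000_000
    if batch:
        cost *= 1 - BATCH_DISCOUNT
    return round(cost, 2)


# Hypothetical job: 10M input tokens, 2M output tokens.
realtime = token_cost(10_000_000, 2_000_000)
batched = token_cost(10_000_000, 2_000_000, batch=True)

print(realtime, batched)  # 4.4 2.2
```

On a GPU-rental platform the equivalent estimate depends on throughput you have to measure yourself, which is part of what the hosted tier abstracts away.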

Key Differences from Modal

Together AI is opinionated about how models are served; Modal is opinionated about how Python is deployed. If your model is in Together's catalog, you skip all the inference-server engineering Modal expects you to handle. The flip side is flexibility: Modal will run any code on any model with any serving framework, while Together is more constrained to the inference patterns its platform optimizes for. Modal's per-second billing and scale-to-zero can also make it cheaper for bursty custom workloads when you're already comfortable building the serving stack yourself, even though Together's listed H100 cluster rate ($3.49/hr) is below Modal's (~$4.50/hr).

Comparison Table

| Feature | Together AI | Modal |
|---|---|---|
| Primary abstraction | Hosted inference + GPU rental | Serverless Python functions |
| Pre-hosted models | 200+ open-source | No |
| Serverless pricing | Per-token | Per-second GPU |
| Dedicated endpoints | Yes (per-minute) | DIY via reserved instances |
| GPU clusters | Yes (H100 $3.49/hr) | H100 ~$4.50/hr |
| Fine-tuning | Managed | DIY |
| Batch inference | Yes (50% discount, up to 30B tokens) | DIY |
| Custom Python code | Limited to specific containers | Any code |
| Custom Docker images | Together Code Interpreter | Yes |
| Inference optimizations | FlashAttention-3, ATLAS, speculative decoding | DIY (bring vLLM, TGI, etc.) |
| Closed-source LLMs | | DIY |
| Image generation | | DIY |
| Audio/video models | | DIY |
| Embeddings | | DIY |
| Scale to zero | Yes (serverless tier) | Yes |
| Startup credits | Up to $50K | $30/mo |
| Best for | Teams running open-source LLMs at scale without building inference infra | Custom workloads needing full Python flexibility |

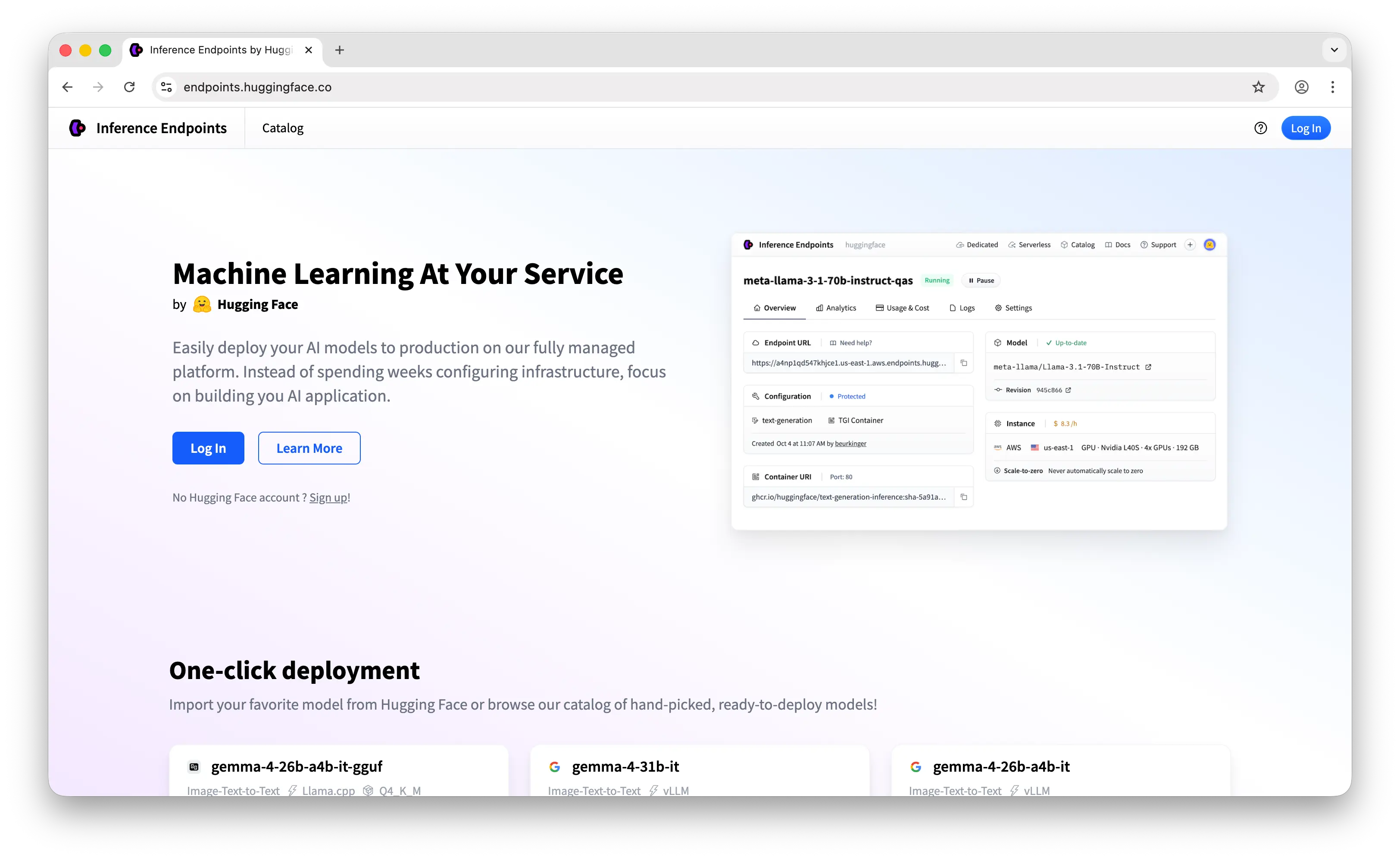

5. Hugging Face Inference Endpoints

Hugging Face Inference Endpoints is a managed serving product that runs models from the Hugging Face Hub on dedicated NVIDIA GPUs, with TGI, vLLM, or SGLang under the hood.

What Makes It Different

The headline feature is HF Hub integration. Pick a model from the 2M+ Hub catalog, click deploy, choose a GPU tier, and you have a private HTTPS endpoint in a few minutes, with no Docker, Kubernetes, or CUDA setup. HF handles the serving framework (TGI/vLLM/SGLang/TEI), model loading, health checks, and autoscaling, including scale-to-zero.

Hugging Face also offers Inference Providers, a separate serverless routing layer that gives access to hundreds of models across multiple inference partners at pay-as-you-go pricing, with monthly credits included on the free, PRO ($9/mo), and Team ($20/user/mo) plans.

Key Differences from Modal

Inference Endpoints provision a dedicated GPU per endpoint, priced by the hour and billed per minute while running, where Modal bills per second and scales to zero between requests. That makes Modal cheaper for bursty workloads and HF cheaper for consistent, high-utilization production traffic, until you hit the cost cliff: an H100 endpoint on HF runs ~$6.40–8.00/hr (a markup on the AWS/GCP capacity underneath), while Modal sits at ~$4.50/hr with preemptible pricing. Modal also gives you full Python flexibility (any code, any framework), where HF is constrained to its supported serving engines. The trade-off is setup time: Modal needs you to write the serving code, while HF deploys a Hub model in a few clicks.
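The bursty-versus-steady difference shows up quickly in a daily estimate. The sketch below compares an endpoint that is kept running all day against per-second billing for only the busy time, using the low end of the HF range and the Modal rate quoted above. It assumes the HF endpoint is not configured to scale to zero, and the two busy hours per day are a hypothetical traffic pattern.

```python
# Approximate H100 rates quoted in the article, in USD per hour.
HF_H100_USD_PER_HOUR = 6.40     # low end of the quoted range
MODAL_H100_USD_PER_HOUR = 4.50


def daily_cost_always_on(rate_per_hour: float, hours_running: float = 24.0) -> float:
    """Daily cost of a dedicated endpoint that stays up (assumes no scale-to-zero)."""
    return round(rate_per_hour * hours_running, 2)


def daily_cost_per_second(rate_per_hour: float, busy_seconds: float) -> float:
    """Daily cost when only the busy seconds are billed."""
    return round(rate_per_hour * busy_seconds / 3600, 2)


# Hypothetical traffic: the GPU is actually busy for 2 hours a day.
busy_seconds = 2 * 3600
hf_daily = daily_cost_always_on(HF_H100_USD_PER_HOUR)
modal_daily = daily_cost_per_second(MODAL_H100_USD_PER_HOUR, busy_seconds)

print(hf_daily, modal_daily)  # 153.6 9.0
```

Enabling scale-to-zero on the endpoint narrows this gap, at the cost of the longer cold starts listed in the table below.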

Comparison Table

| Feature | Hugging Face Inference Endpoints | Modal |
|---|---|---|
| Primary abstraction | Managed model deployment from Hub | Serverless Python functions |
| Pricing model | Per-hour dedicated GPU (billed per minute) | Per-second |
| H100 pricing | ~$6.40–8.00/hr | ~$4.50/hr |
| Free tier | No for Endpoints / Yes for Inference Providers | $30/mo credits |
| Hub integration | 2M+ models | |
| One-click deploy | Yes | No |
| Custom Python code | Limited (custom handlers) | Full flexibility |
| Custom Docker images | | Yes |
| Serving framework | TGI/vLLM/SGLang/TEI (managed) | BYO (vLLM, TGI, custom) |
| Scale to zero | Yes | Yes |
| Cold starts | 15–60s for large models | 2–4 seconds typical |
| Spot pricing | | Yes (preemptible) |
| Per-second billing | No (per-minute) | Yes |
| Fine-tuning | Via AutoTrain | DIY |
| Inference Providers (routing) | Separate product | |
| Underlying cloud | AWS/GCP (markup on top) | Modal's own infrastructure |
| Best for | Teams deploying Hub models with zero setup | Teams needing full Python control and per-second economics |

Which Should You Choose?

Choose Puter.js if you're building a web app and want to add AI features without any backend or API costs. The user-pays model is ideal for developers who don't want to manage GPUs, deploy containers, or cover user costs out of pocket.

Choose RunPod if you want the cheapest serious GPU pricing, need persistent pods for long-running training, or want SSH access to actual hardware. It's the best raw-infra alternative to Modal when your team is comfortable working with Docker.

Choose OpenRouter if your workload is calling existing LLMs rather than running custom models. You skip the entire serverless-GPU layer and pay per token, with automatic provider failover.

Choose Together AI if you're running open-source LLMs at scale and want optimized inference, managed fine-tuning, and batch APIs without building the inference stack yourself.

Choose Hugging Face Inference Endpoints if you want to deploy a Hub model with one click and don't mind paying a managed-service premium. Best for teams already living in the HF ecosystem.

Stick with Modal if you need full Python flexibility, custom training pipelines, complex batch jobs, or you've built your own inference stack and just want clean serverless infrastructure to run it on. Modal's DX is hard to beat when the workload is genuinely custom.

Conclusion

The top 5 Modal alternatives are Puter.js, RunPod, OpenRouter, Together AI, and Hugging Face Inference Endpoints. Each takes a different approach to the "serverless GPU" problem, from Puter.js eliminating the developer-pays model entirely, to RunPod undercutting on raw GPU price, to OpenRouter skipping infrastructure altogether. The best option is the one that fits your workload, your team's expertise, and how much of the inference stack you actually want to own.

Related

- Getting Started with Puter.js

- Top 5 RunPod Alternatives (2026)

- Top 5 OpenRouter Alternatives (2026)

- Best Together AI Alternatives (2026)

- Top 5 Replicate Alternatives (2026)

- Best fal.ai Alternatives (2026)

- Top 5 AWS Lambda Alternatives (2026)

- Best AWS Bedrock Alternatives (2026)

- Top 5 Vertex AI Alternatives (2026)

- Top 5 Google AI Studio Alternatives (2026)

- Best ElevenLabs Alternatives (2026)

- User-Pays vs Traditional Model
