Best Together AI Alternatives (2026)

Together AI is a full-stack AI inference and training platform focused on open-source models. It provides serverless inference, dedicated GPU endpoints, GPU clusters, batch processing, and fine-tuning. Its OpenAI-compatible API makes it easy to integrate, and features like batch inference at a 50% discount and dedicated H100/H200 endpoints make it popular with ML teams.

But Together AI isn't the only option. Depending on your use case, there are alternatives that may offer broader model access, lower costs, or capabilities that Together AI doesn't support.

In this article, you'll learn about five Together AI alternatives, how they compare, and which one might be the best fit for your project.

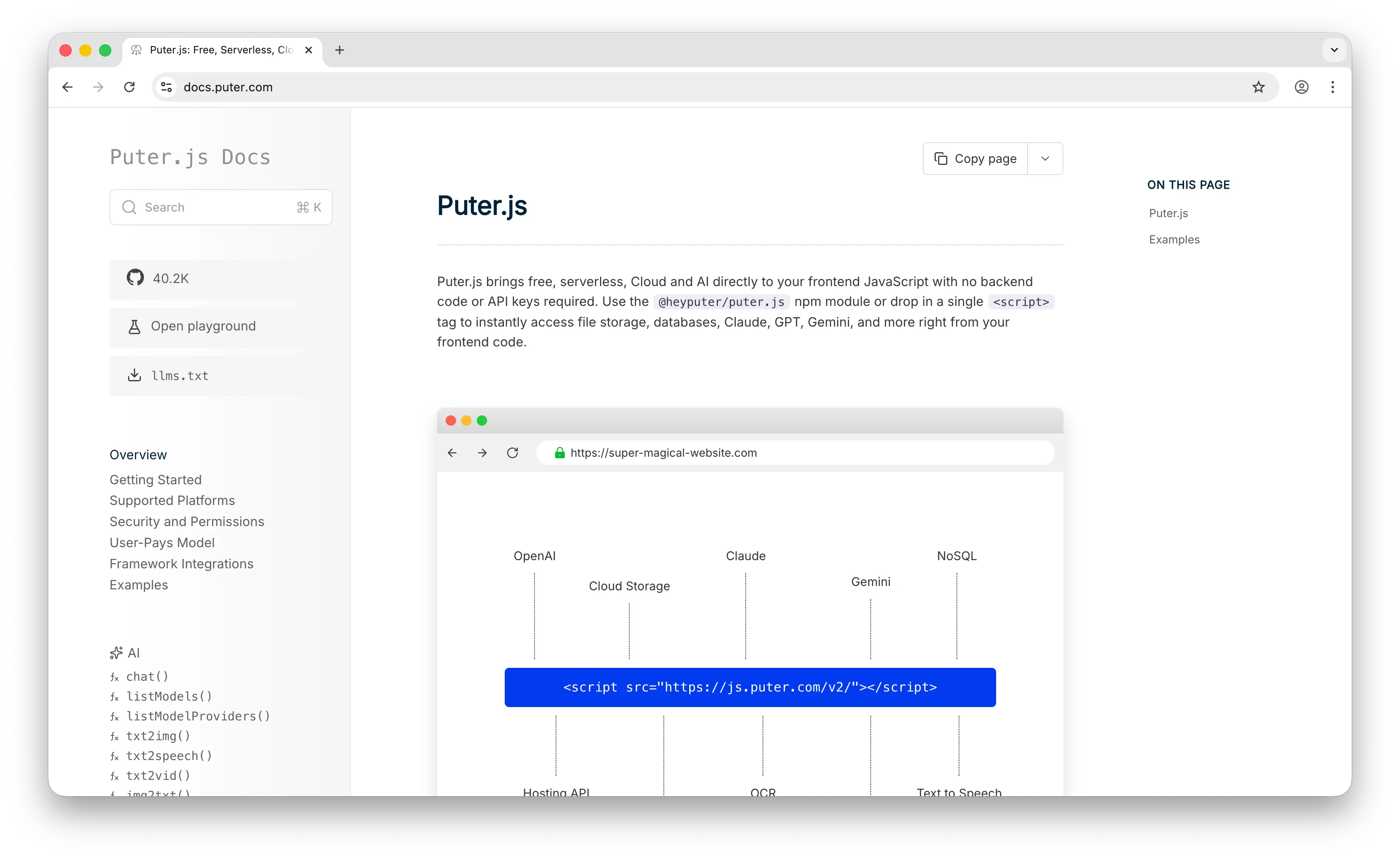

1. Puter.js

Puter.js is a JavaScript library with extensive AI model support — over 400 models from providers like OpenAI, Anthropic, Google, Meta, and others. It covers chat, image generation, video generation, text-to-speech, speech-to-text, and voice changing. It also bundles cloud storage, a key-value database, and authentication into the same package.

What Makes It Different

Puter.js supports both open-source and closed-source models, including GPT, Claude, and Gemini, through a single library. Together AI is limited to open-source models, so if your project needs proprietary models alongside open-source ones, Puter.js covers both without requiring a second provider.

It also has broader multimodal coverage than Together AI: video generation, voice changing, OCR, and image analysis are capabilities for which Together AI has limited or no support.

It also pioneered the User-Pays Model: your app users cover their own AI usage costs through their Puter account. Developers pay nothing for AI inference — no API key, no backend, no server-side setup required. This is fundamentally different from Together AI, where the developer is billed for every token of inference and every hour of GPU time.

Key Differences from Together AI

Puter.js is primarily designed for web apps running on the frontend. While it works in Node.js, the user-pays model is most natural in a browser context. It does not offer fine-tuning, batch inference, dedicated GPU endpoints, GPU clusters, or infrastructure-level control. The model catalog (400+) is curated from major providers rather than self-hosted.

Comparison Table

| Feature | Puter.js | Together AI |
|---|---|---|
| Pricing model | User-pays (free for devs) | Per-token (serverless) / per-GPU-hour (dedicated) |
| Free tier | Yes (free for developers) | Yes ($25 credit) |
| API key required | No | Yes |
| Chat/LLM models | Extensive (380+) | Extensive (200+) |
| Open-source models | Yes | Extensive |
| Closed-source models | Yes (GPT, Claude, Gemini) | No |
| Image generation | Yes | Yes |
| Video generation | Yes | Limited |
| Audio (TTS/STT) | Yes | Yes |
| Voice changing | Yes | No |
| Embeddings | | Yes |
| Reranking models | | Yes |
| Fine-tuning | No | Yes (full + LoRA) |
| Batch inference | No | Yes (50% discount) |
| Dedicated endpoints | No | Yes |
| GPU clusters | No | Yes (H100/H200) |
| Built-in services (DB, storage, auth) | Yes | No |
| OpenAI-compatible API | | Yes |
| Best for | Web app devs who want zero-cost AI integration | Teams needing GPU infra, fine-tuning, and batch jobs |

2. Replicate

Replicate is a pay-as-you-go platform for running AI models via API. It hosts over 50,000 models, charges developers based on compute time, and lets anyone publish models using its open-source packaging tool, Cog. It was acquired by Cloudflare in November 2025 but continues to operate as a distinct brand.

What Makes It Different

Replicate hosts one of the largest community model ecosystems. Its 50,000+ Cog-packaged models dwarf Together AI's ~200 curated open-source models. If you need a niche model, such as a specific fine-tuned Stable Diffusion variant, a research model, or a community-published custom pipeline, Replicate is far more likely to have it.

Replicate also supports closed-source models (GPT, Claude, Gemini) alongside open-source options, while Together AI is limited to open-source models. Its media generation capabilities (image, video, audio) are significantly stronger than Together AI's, with excellent support for models like Flux, Kling, and Wan.

Key Differences from Together AI

Together AI offers infrastructure capabilities that Replicate lacks: dedicated GPU endpoints with guaranteed throughput, GPU clusters you can provision in minutes, and batch inference at a 50% discount. Together AI's per-token pricing is also more predictable for text workloads than Replicate's per-second compute pricing.

Together AI's API is OpenAI-compatible, making it a drop-in replacement for existing OpenAI integrations. Replicate uses a proprietary REST API, requiring more code changes to migrate.
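To make "drop-in replacement" concrete, here is a minimal sketch of an OpenAI-style chat completion request built with Python's standard library. The base URLs, the placeholder key, and the model ID are assumptions for illustration and should be checked against each provider's documentation. The request is only constructed here, not sent.

```python
import json
import urllib.request

# Assumed base URLs for each provider's OpenAI-compatible API; confirm in their docs.
TOGETHER_BASE_URL = "https://api.together.xyz/v1"
OPENAI_BASE_URL = "https://api.openai.com/v1"


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Switching providers only changes the base URL, the key, and the model name.
request = build_chat_request(
    TOGETHER_BASE_URL,
    api_key="YOUR_API_KEY",               # placeholder, not a real key
    model="example-org/example-model",    # hypothetical model ID
    prompt="Say hello.",
)
print(request.full_url)
```

With a real key and model ID, the request could be sent with `urllib.request.urlopen(request)`. A provider with its own REST format would need a different URL path and body shape, which is where the extra migration work comes from.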

However, Replicate's per-second compute pricing can be more cost-effective for media generation workloads where token-based pricing doesn't apply. With the Cloudflare acquisition, Replicate's models are increasingly running on Cloudflare's global edge network, potentially improving latency.
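The difference between the two billing styles can be sketched with a little arithmetic. Every rate below is a hypothetical figure chosen for illustration, not a published price. Only the 50% batch discount comes from this article.

```python
BATCH_DISCOUNT = 0.5  # the 50% batch discount cited in this article


def per_token_cost(input_tokens: int, output_tokens: int,
                   usd_per_million_input: float, usd_per_million_output: float,
                   batch: bool = False) -> float:
    """Cost of one text request under per-token pricing."""
    cost = (input_tokens * usd_per_million_input
            + output_tokens * usd_per_million_output) / 1_000_000
    return cost * (1 - BATCH_DISCOUNT) if batch else cost


def per_second_cost(runtime_seconds: float, usd_per_second: float) -> float:
    """Cost of one prediction under per-second compute pricing."""
    return runtime_seconds * usd_per_second


# Hypothetical rates: $0.50 / $1.50 per million tokens, $0.001 per GPU-second.
chat_cost = per_token_cost(2_000, 500, 0.50, 1.50)
batch_cost = per_token_cost(2_000, 500, 0.50, 1.50, batch=True)
image_cost = per_second_cost(8.0, 0.001)
print(f"chat: ${chat_cost:.6f}  batch: ${batch_cost:.6f}  image: ${image_cost:.6f}")
```

Per-token cost depends only on request size, which is why it is easier to forecast for text. Per-second cost depends on how long the model runs, which suits image and video jobs that have no token count.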

Comparison Table

| Feature | Replicate | Together AI |
|---|---|---|
| Pricing model | Per-second compute / per-output | Per-token (serverless) / per-GPU-hour (dedicated) |
| Free tier | | Yes ($25 credit) |
| Model catalog | 50,000+ (community Cog models) | 200+ (curated open-source) |
| Open-source models | Extensive | Extensive |
| Closed-source models | Yes (GPT, Claude, Gemini) | No |
| Chat/LLM models | Growing | Extensive |
| Image generation | Excellent | Yes |
| Video generation | Excellent | Limited |
| Audio models | Yes | Yes |
| Embeddings | | Yes |
| Reranking models | | Yes |
| Community model publishing | Yes (via Cog) | |
| Fine-tuning | | Yes (full + LoRA) |
| Batch inference | Async predictions | Yes (50% discount) |
| Dedicated endpoints | No | Yes |
| GPU clusters | No | Yes (H100/H200) |
| OpenAI-compatible API | No | Yes |
| Edge deployment | Yes (via Cloudflare) | No |
| Best for | Media generation, community models, custom hosting | Fast LLM inference, fine-tuning, and GPU infra at scale |

3. OpenRouter

OpenRouter is a unified API gateway that provides access to 300+ models from 60+ providers through a single API key. It handles routing, fallback, and billing across providers like OpenAI, Anthropic, Google, Meta, and others.

What Makes It Different

OpenRouter takes a fundamentally different approach than Together AI. Instead of hosting and running models on its own infrastructure, OpenRouter routes your requests to the best available provider. It offers automatic fallback when providers go down, provider preferences by cost or speed, and variant suffixes (:free, :nitro, :floor) for fine-grained routing control.
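The routing ideas above can be illustrated with a small client-side sketch. OpenRouter performs fallback on its own servers, so this is not its implementation. The helper names, the model ID, and the stand-in provider functions are all hypothetical. Only the three variant suffixes come from the text.

```python
from typing import Callable, Sequence

VARIANTS = {"free", "nitro", "floor"}  # variant suffixes named in this article


def with_variant(model: str, variant: str) -> str:
    """Append a routing variant suffix to a model ID, e.g. 'org/model:nitro'."""
    if variant not in VARIANTS:
        raise ValueError(f"unknown variant: {variant!r}")
    return f"{model}:{variant}"


def call_with_fallback(providers: Sequence[Callable[[str], str]], prompt: str) -> str:
    """Try each provider in order and return the first successful reply."""
    errors = []
    for provider in providers:
        try:
            return provider(prompt)
        except Exception as exc:  # a real client would catch narrower error types
            errors.append(exc)
    raise RuntimeError(f"all {len(errors)} providers failed")


# Stand-in providers for demonstration; real ones would make network calls.
def flaky_provider(prompt: str) -> str:
    raise ConnectionError("provider unavailable")


def healthy_provider(prompt: str) -> str:
    return f"echo: {prompt}"


reply = call_with_fallback([flaky_provider, healthy_provider], "hello")
print(with_variant("example-org/example-model", "nitro"), "->", reply)
```

The point of a gateway is that this retry logic and provider ordering live on the server, so application code sends one request to one endpoint.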

OpenRouter supports both open-source and closed-source models — including GPT, Claude, Gemini, and hundreds of others — through a single API key. Together AI only supports open-source models, so if you need access to closed-source models, OpenRouter covers that gap.

OpenRouter's API is also fully OpenAI-compatible, and it adds new models faster than most competitors. Its free tier offers access to select models with rate limits, making it easy to get started without any cost.

Key Differences from Together AI

OpenRouter is not an infrastructure platform. It doesn't host models on its own GPUs, offer fine-tuning, provide batch inference, or support dedicated endpoints. It's a routing and aggregation layer. If you need to run custom workloads, fine-tune models, or guarantee GPU availability, Together AI is the better choice.

Together AI's pricing is per-token for serverless inference, while OpenRouter charges a 5.5% fee on credit purchases and passes through provider pricing at cost. For high-volume open-source model workloads, Together AI is often cheaper since it runs its own optimized infrastructure.
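A rough way to reason about the fee is sketched below. It assumes the 5.5% is added on top of the credit amount purchased, which is an assumption about how the fee is applied rather than something stated here. The function names are hypothetical.

```python
CREDIT_FEE_RATE = 0.055  # the 5.5% credit purchase fee cited above


def routed_cost(provider_cost_usd: float, fee_rate: float = CREDIT_FEE_RATE) -> float:
    """Effective spend when usage is paid from credits bought with a fee on top."""
    return provider_cost_usd * (1 + fee_rate)


def max_provider_price_to_match(direct_cost_usd: float,
                                fee_rate: float = CREDIT_FEE_RATE) -> float:
    """Highest pass-through provider cost at which routing still matches a direct price."""
    return direct_cost_usd / (1 + fee_rate)


print(round(routed_cost(100.0), 2))
print(round(max_provider_price_to_match(100.0), 2))
```

Under this assumption, a gateway is only cheaper for a given workload when the underlying provider's price sits far enough below the direct price to absorb the fee.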

OpenRouter's media generation support is also limited: image generation is available, video is experimental, and audio is not supported. Together AI has broader media capabilities.

Comparison Table

| Feature | OpenRouter | Together AI |
|---|---|---|
| Pricing model | Pay-as-you-go (5.5% credit fee) | Per-token (serverless) / per-GPU-hour (dedicated) |
| Free tier | Yes (free models with rate limits) | Yes ($25 credit) |
| Chat/LLM models | Extensive (300+) | Extensive (200+) |
| Open-source models | Yes | Extensive |
| Closed-source models | Extensive | No |
| Image generation | Yes | Yes |
| Video generation | Experimental | Limited |
| Audio models | No | Yes |
| Embeddings | | Yes |
| Reranking models | Limited | Yes |
| Fine-tuning | No | Yes (full + LoRA) |
| Batch inference | No | Yes (50% discount) |
| Dedicated endpoints | No | Yes |
| GPU clusters | No | Yes (H100/H200) |
| Fallback/routing | Yes (automatic) | |
| OpenAI-compatible API | Yes | Yes |
| Model update speed | Fast | Moderate |
| Best for | Broad model access with multi-provider routing | Fast open-source LLM inference with GPU infra |

4. Cloudflare Workers AI

Cloudflare Workers AI is a serverless AI inference platform that runs models on Cloudflare's global edge network.

What Makes It Different

Workers AI runs models at the edge, close to your users, which can significantly reduce latency for AI-powered applications. This is something Together AI doesn't offer — Together AI's inference runs on centralized GPU clusters, not a distributed edge network.

Following the Replicate acquisition (November 2025), Cloudflare is integrating Replicate's 50,000+ model catalog, dramatically expanding its offerings beyond its original curated set of open-source models. Workers AI also integrates tightly with the rest of Cloudflare's developer platform — Workers for serverless compute, R2 for object storage, Vectorize for vector search, D1 for SQL databases, and AI Gateway for monitoring, caching, and rate limiting.

Workers AI supports LoRA-based fine-tuning, batch inference, and unique features like document-to-markdown conversion for AI pipelines. Its AI Gateway also works as a proxy for external AI providers, giving you observability and control over third-party API calls.

Key Differences from Together AI

Like Together AI, Workers AI only supports open-source models. However, its pricing uses a proprietary "neurons" system rather than per-token pricing, which makes direct cost comparisons difficult. Together AI's per-token pricing is more transparent and predictable.

Together AI has stronger infrastructure control: dedicated GPU endpoints with guaranteed throughput, GPU clusters you can provision, and batch inference at a 50% discount. Workers AI is serverless — you don't manage or reserve hardware.

Together AI's model catalog is more curated for LLMs and has better embeddings and reranking support. Workers AI's strength is the growing model catalog (via Replicate integration), edge deployment, and deep platform integration with Cloudflare's ecosystem.

Comparison Table

| Feature | Cloudflare Workers AI | Together AI |
|---|---|---|
| Pricing model | Neurons (pay-as-you-go) | Per-token (serverless) / per-GPU-hour (dedicated) |
| Free tier | Yes | Yes ($25 credit) |
| Open-source models | Yes | Extensive |
| Closed-source models | No | No |
| Chat/LLM models | Yes | Extensive |
| Image generation | Yes | Yes |
| Video generation | | Limited |
| Audio (TTS/STT) | Yes | Yes |
| Embeddings | Yes | Yes |
| Reranking models | | Yes |
| Edge deployment | Yes (global network) | No |
| Fine-tuning | Yes (LoRA) | Yes (full + LoRA) |
| Batch inference | Yes | Yes (50% discount) |
| Dedicated endpoints | No (serverless only) | Yes |
| GPU clusters | No | Yes (H100/H200) |
| Observability | Yes (AI Gateway) | Limited |
| Ecosystem integration | Workers, R2, Vectorize, D1 | Standalone API |
| OpenAI-compatible API | Yes | Yes |
| Model update speed | Moderate (improving with Replicate) | Moderate |
| Best for | Edge AI with full-stack Cloudflare integration | Dedicated GPU infra, fine-tuning, and batch jobs |

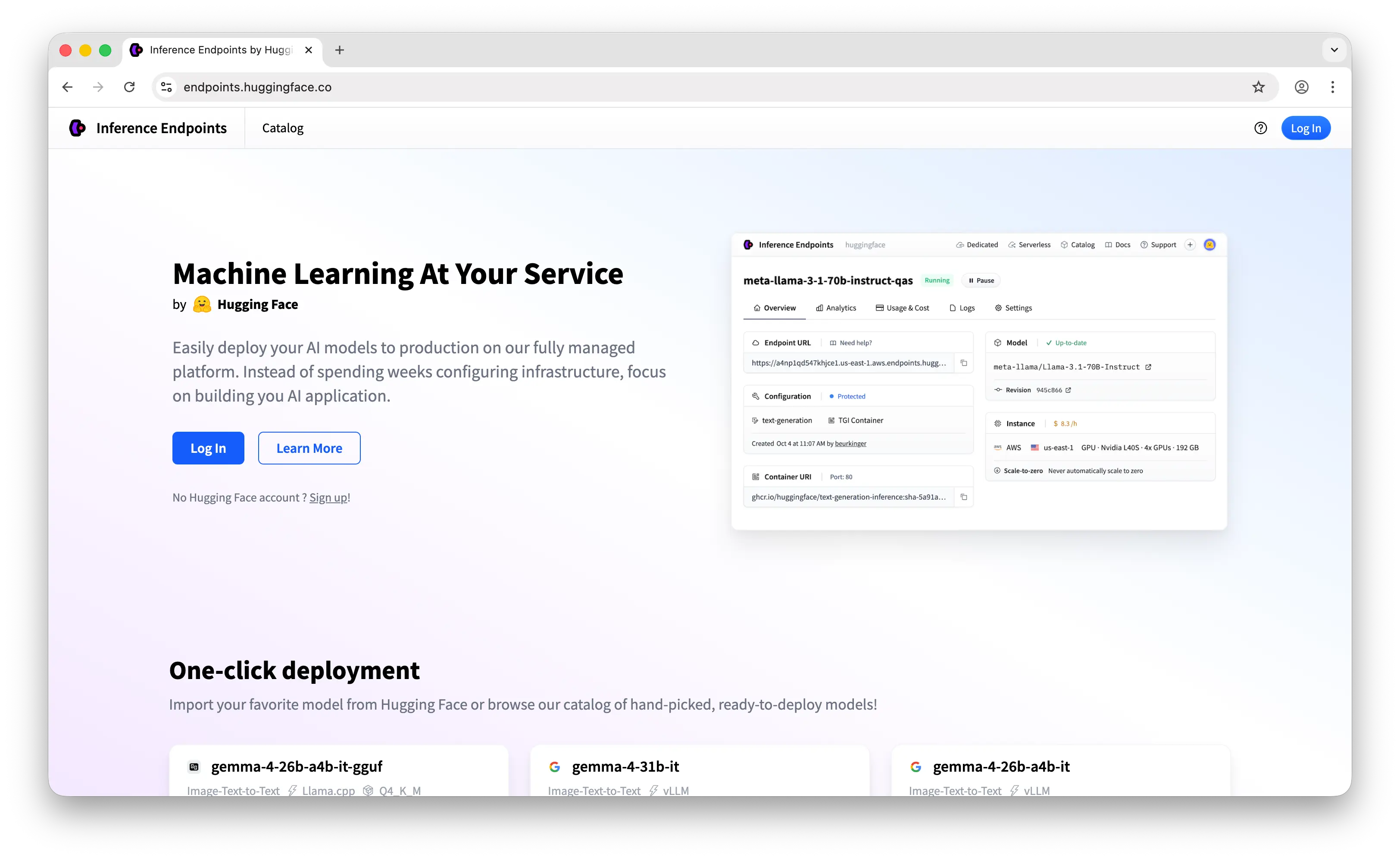

5. Hugging Face Inference Endpoints

Hugging Face Inference Endpoints is a service for deploying any model from the Hugging Face Hub on dedicated, fully managed infrastructure. You pick a model from the Hub's 2 million+ catalog, choose your GPU hardware, and get a production-ready API endpoint with autoscaling, scale-to-zero, and private networking.

What Makes It Different

Inference Endpoints gives you access to the largest model catalog available anywhere. Any of the Hub's 2 million+ models — including niche fine-tuned variants, research models, and your own private uploads — can be deployed as an endpoint. Together AI's ~200 curated models can't match this breadth, though Together AI's models are pre-optimized for fast inference.

Hugging Face offers more infrastructure flexibility: you choose your GPU hardware (from NVIDIA T4 to A100 to H100), configure autoscaling policies, enable scale-to-zero for cost savings, and set up private networking via AWS/Azure PrivateLink. Together AI offers dedicated endpoints and GPU clusters, but with less granular hardware selection.

For fine-tuning, Hugging Face offers AutoTrain (no-code fine-tuning) alongside full support for LoRA, QLoRA, and DPO through the Transformers library — a more flexible fine-tuning ecosystem than Together AI's built-in offering.

Key Differences from Together AI

Inference Endpoints charges per minute of uptime for dedicated hardware (starting at ~$0.50/hr for GPU), not per token. This means you pay for hardware whether or not it's processing requests, though scale-to-zero mitigates this for sporadic workloads. Together AI's per-token serverless pricing is often more cost-effective for variable traffic since you only pay for actual usage.
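The trade-off can be estimated with a simple break-even calculation. The hourly rate below is the approximate entry price quoted above. The per-token price is a hypothetical figure for illustration, and the function names are not from either platform.

```python
HOURLY_RATE_USD = 0.50  # approximate entry GPU price quoted above


def dedicated_cost(minutes_up: float, hourly_rate_usd: float = HOURLY_RATE_USD) -> float:
    """Cost of keeping a dedicated endpoint up, billed per minute of uptime."""
    return minutes_up / 60 * hourly_rate_usd


def break_even_tokens_per_hour(usd_per_million_tokens: float,
                               hourly_rate_usd: float = HOURLY_RATE_USD) -> float:
    """Tokens per hour at which per-token spend equals the hourly hardware cost."""
    return hourly_rate_usd / usd_per_million_tokens * 1_000_000


# Hypothetical serverless price of $0.20 per million tokens.
tokens_needed = break_even_tokens_per_hour(0.20)
print(f"90 minutes of uptime: ${dedicated_cost(90):.2f}")
print(f"break-even: {tokens_needed:,.0f} tokens per hour")
```

Below the break-even volume, per-token billing costs less. Above it, an always-on endpoint becomes the cheaper option, provided a single endpoint can actually sustain that throughput.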

Together AI's inference is faster for supported models, thanks to optimizations like FlashAttention. Hugging Face Inference Endpoints runs standard inference stacks (TGI, TEI, Diffusers) without the custom kernel-level optimizations that Together AI invests in.

Like Together AI, Inference Endpoints focuses on open-source/open-weight models — no closed-source models like GPT or Claude. However, its model catalog is orders of magnitude larger.

Comparison Table

| Feature | HF Inference Endpoints | Together AI |
|---|---|---|
| Pricing model | Per-minute uptime (dedicated hardware) | Per-token (serverless) / per-GPU-hour (dedicated) |
| Free tier | Free CPU endpoints | Yes ($25 credit) |
| Deployable models | 2,000,000+ (any Hub model) | 200+ (curated open-source) |
| Open-source models | Extensive | Extensive |
| Closed-source models | No | No |
| Chat/LLM models | Extensive (via TGI) | Extensive |
| Image generation | Yes (via Diffusers) | Yes |
| Video generation | Yes (via Diffusers) | Limited |
| Audio models | Yes | Yes |
| Embeddings | Yes (via TEI) | Yes |
| Reranking models | Yes | Yes |
| Dedicated hardware | Yes (GPU selection, autoscaling) | Yes |
| Scale-to-zero | Yes | |
| GPU clusters | | Yes (H100/H200) |
| Batch inference | | Yes (50% discount) |
| Fine-tuning | Yes (AutoTrain, LoRA, QLoRA, DPO) | Yes (full + LoRA) |
| Custom containers | Yes (TGI, TEI, Diffusers, Docker) | |
| Private networking | Yes (AWS/Azure PrivateLink) | |
| Community model publishing | Yes (Hub uploads) | Upload from Hugging Face |
| OpenAI-compatible API | Yes | Yes |
| Inference speed | Standard (TGI/TEI) | Fast (FlashAttention optimizations) |
| Best for | Dedicated deployment of any open-source model with full infra control | Fast serverless inference with fine-tuning and batch jobs |

Which Should You Choose?

Choose Puter.js if you're building a web app and want to add AI features without any API costs or backend setup. The user-pays model means your users cover their own usage, and it supports both open-source and closed-source models — something Together AI doesn't offer.

Choose Replicate if you need a large community model ecosystem (50,000+ Cog models), excellent media generation (image, video, audio), or access to closed-source models. Its Cloudflare integration is also improving edge performance over time.

Choose OpenRouter if you need broad LLM access across providers (300+ models, including closed-source), automatic fallback routing, and a simple unified API. It's the most straightforward option for teams that want access to many LLMs through one endpoint without managing infrastructure.

Choose Cloudflare Workers AI if you want edge-deployed inference with deep platform integration (Workers, R2, Vectorize, D1). The Replicate acquisition is dramatically expanding its model catalog, and AI Gateway provides built-in observability.

Choose Hugging Face Inference Endpoints if you need dedicated, autoscaling infrastructure for deploying any of 2 million+ open-source models with full control over hardware, private networking, and custom containers.

Stick with Together AI if you need fast open-source LLM inference backed by research-driven optimizations, dedicated GPU endpoints with guaranteed throughput, batch inference at a discount, or fine-tuning capabilities. It remains the strongest option for ML teams that want infrastructure control over open-source models without managing their own hardware.
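The guidance in this section can be condensed into a small lookup, sketched below. The requirement tags are hypothetical labels invented for this example. They mirror only the reasons given in this section, so a platform may support a capability without being listed for it here.

```python
from typing import Iterable, List

# Each platform paired with the needs this section gives as reasons to choose it.
RECOMMENDATIONS = [
    ("Puter.js", {"user_pays", "no_backend"}),
    ("Replicate", {"community_models", "media_generation"}),
    ("OpenRouter", {"multi_provider_routing", "automatic_fallback"}),
    ("Cloudflare Workers AI", {"edge_inference", "cloudflare_platform"}),
    ("Hugging Face Inference Endpoints", {"any_hub_model", "private_networking"}),
    ("Together AI", {"dedicated_gpu", "batch_discount", "fine_tuning"}),
]


def recommend(needs: Iterable[str]) -> List[str]:
    """Return every platform whose listed strengths overlap the given needs."""
    wanted = set(needs)
    return [name for name, strengths in RECOMMENDATIONS if wanted & strengths]


print(recommend(["edge_inference"]))
print(recommend(["user_pays", "fine_tuning"]))
```

When the result contains more than one platform, the requirements span different strengths, and combining providers may be worth considering.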

Conclusion

The best Together AI alternatives are Puter.js, Replicate, OpenRouter, Cloudflare Workers AI, and Hugging Face Inference Endpoints. Each takes a different approach: Puter.js eliminates developer costs entirely, Replicate offers a large community model ecosystem with strong media generation, OpenRouter provides the broadest LLM routing across providers, Cloudflare Workers AI delivers edge-deployed inference with full-stack platform integration, and Hugging Face Inference Endpoints gives you dedicated deployment with unmatched model catalog depth. The best choice depends on whether you need zero-cost integration, broad model access, edge deployment, dedicated infrastructure, or the GPU-level control that Together AI provides.
