Top 5 OpenRouter Alternatives (2026)


Developers use OpenRouter for its ease of use: access to 300+ models from 60+ providers through a single API key, automatic fallback when providers go down, and unified billing. But several alternatives offer unique features and may be a better fit for your use case.

In this article, you'll learn about five OpenRouter alternatives, how they compare, and which one might be the best fit for your project.

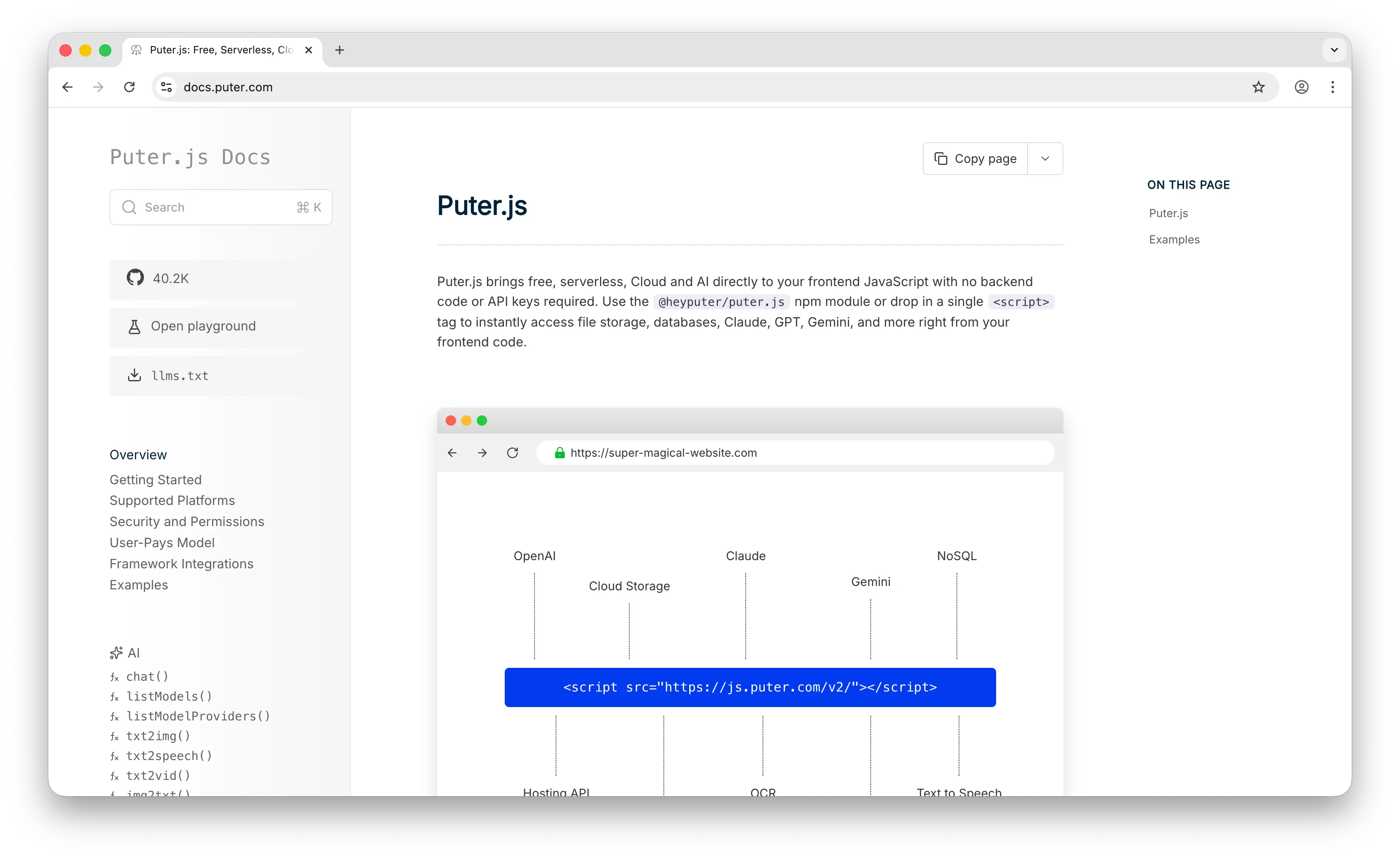

1. Puter.js

Puter.js is a JavaScript library that bundles AI, database, cloud storage, authentication, and more into a single package. It supports more than 400 AI models, a number that keeps growing, from providers like OpenAI, Anthropic, Google, Meta, and others.

What Makes It Different

Puter.js pioneered the User-Pays Model: your app users cover their own AI usage costs through their Puter account. This means developers can add AI features to their apps for free, with no API key, no backend, and no server-side setup required. This is fundamentally different from OpenRouter, where the developer is responsible for all API costs.

Puter.js also goes well beyond chat models. It supports text-to-image, image analysis, text-to-video, video analysis, OCR, speech-to-text, text-to-speech, and even voice changing. OpenRouter largely lacks these capabilities, particularly in audio and video.

Key Differences from OpenRouter

Puter.js is primarily designed for web apps running on the frontend. While it works in Node.js, the user-pays model is most natural in a browser context. It also does not currently support embeddings models, and it lacks the observability and monitoring tools that teams might need.

Comparison Table

| Feature | Puter.js | OpenRouter |
|---|---|---|
| API key required | No | Yes |
| Pricing model | User-pays (free for devs) | Pay-as-you-go (5.5% credit fee) |
| Markup | At cost | 5.5% on credit purchase |
| Chat models | Extensive | Extensive |
| Image generation | | |
| Video generation | | Experimental |
| Audio (TTS/STT) | | |
| Voice changing | | |
| Embeddings | | |
| Open-source models | | |
| Closed-source models | | |
| Model update speed | Fast | Fast |
| Fallback/routing | | |
| Fine-tuning | | |
| Observability | Limited | Usage dashboard |
| Best for | Frontend/web app devs who want zero-cost AI integration | Backend devs needing multi-provider routing |
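To make the 5.5% credit fee in the table above concrete, here is a minimal sketch. It assumes the fee is added on top of the credit amount at purchase time, which the table does not spell out. The function name and the example amounts are hypothetical.

```typescript
// Sketch only: assumes the fee is charged on top of the credits you buy.
const OPENROUTER_CREDIT_FEE = 0.055; // 5.5%, from the comparison table

// Total charged when buying `credits` dollars of usage credit.
function totalChargeForCredits(
  credits: number,
  feeRate: number = OPENROUTER_CREDIT_FEE
): number {
  if (credits < 0 || feeRate < 0) {
    throw new Error("credits and feeRate must be non-negative");
  }
  return credits * (1 + feeRate);
}

// Example: totalChargeForCredits(100) is about 105.50 under this assumption,
// while an at-cost platform corresponds to totalChargeForCredits(100, 0).
```

At small volumes the difference is negligible; it matters more once monthly spend is large enough that 5.5% is a meaningful line item.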

2. Together AI

Together AI is a full-stack AI inference and training platform. Like OpenRouter, it offers access to hundreds of AI models, but its focus is squarely on open-source models and providing the infrastructure to run them at scale.

What Makes It Different

Together AI is not just an API gateway; it's an AI infrastructure platform. It offers batch inference for long-running tasks (at a 50% discount), dedicated GPU endpoints for guaranteed performance, GPU clusters for custom workloads, and fine-tuning capabilities. These are things OpenRouter simply doesn't provide.

Together AI supports a wide range of model categories: chat, image generation, audio, vision, embeddings, reranking, and code. Their research-driven optimizations (like FlashAttention) often result in faster inference than competitors.

Key Differences from OpenRouter

Together AI focuses almost exclusively on open-source models and does not offer closed-source models like GPT or Claude, unlike OpenRouter, which has excellent closed-source support. It's also slower than OpenRouter at adding the very latest models. Pricing is usage-based: per token for serverless inference and per minute for dedicated endpoints, with a markup that varies by model and isn't transparently documented.
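The two billing modes described above, plus the 50% batch discount mentioned earlier in this section, can be compared with a few lines of arithmetic. This is a rough sketch: every price passed in is a hypothetical placeholder rather than a Together AI rate, and the function names are invented for illustration.

```typescript
// Sketch only: callers supply prices; none of these are real Together AI rates.
const BATCH_DISCOUNT = 0.5; // the 50% batch discount mentioned in this section

// Cost of serverless inference billed per token.
function serverlessCost(
  tokens: number,
  pricePerMillionTokens: number,
  batch: boolean = false
): number {
  const base = (tokens / 1e6) * pricePerMillionTokens;
  return batch ? base * (1 - BATCH_DISCOUNT) : base;
}

// Cost of a dedicated endpoint billed per minute of uptime.
function dedicatedCost(minutes: number, pricePerMinute: number): number {
  return minutes * pricePerMinute;
}

// Tokens per hour above which a dedicated endpoint is cheaper than
// non-batch serverless inference at the given prices.
function breakEvenTokensPerHour(
  pricePerMillionTokens: number,
  pricePerMinute: number
): number {
  return (dedicatedCost(60, pricePerMinute) / pricePerMillionTokens) * 1e6;
}
```

With placeholder prices of 0.50 per million tokens and 0.10 per minute, the break-even is roughly 12 million tokens per hour; below that volume, serverless would be the cheaper mode under these assumptions.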

Comparison Table

| Feature | Together AI | OpenRouter |
|---|---|---|
| Pricing model | Pay-as-you-go (per token + per minute for dedicated) | Pay-as-you-go (5.5% credit fee) |
| Markup | Varies, not transparently documented | 5.5% on credit purchase |
| Open-source models | Extensive (200+) | |
| Closed-source models | | Extensive |
| Batch inference | 50% discount | DIY |
| Dedicated endpoints | | |
| GPU clusters | | |
| Fine-tuning | | |
| Image generation | | |
| Video generation | Limited | Experimental |
| Audio models | | |
| Embeddings | | |
| Reranking models | | Limited |
| Fallback/routing | | |
| Model update speed | Moderate | Fast |
| Best for | Teams needing infra control, fine-tuning, and batch jobs | Devs wanting broad model access with simple routing |

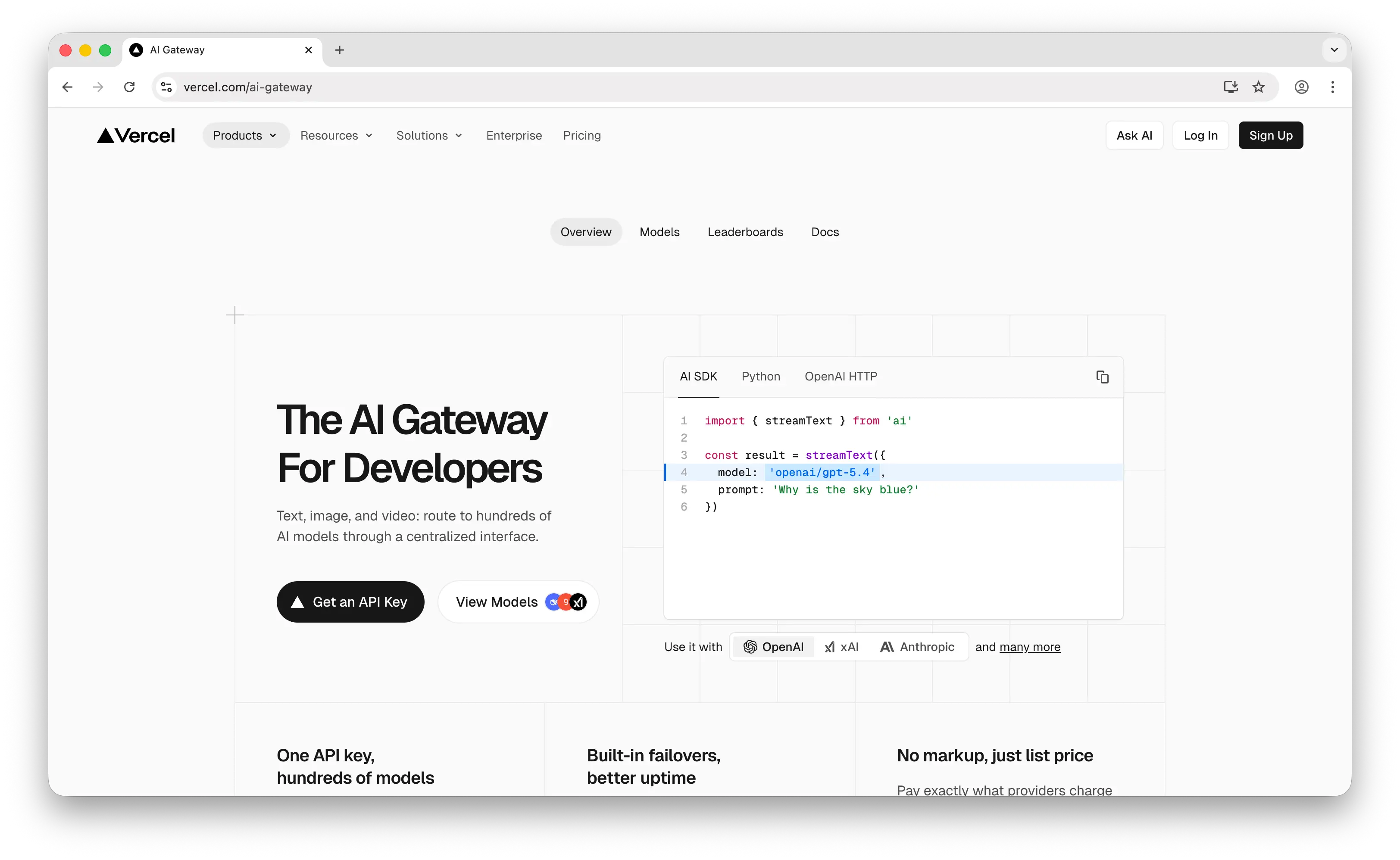

3. Vercel AI Gateway

Vercel AI Gateway is a unified API gateway by Vercel that provides access to hundreds of models from all major providers through a single endpoint.

What Makes It Different

Vercel AI Gateway integrates deeply with the Vercel ecosystem, including the AI SDK (v5/v6), Next.js, and the broader Vercel developer platform. If you're already deploying on Vercel, adoption is nearly frictionless. It supports budgeting, usage monitoring, load balancing, and automatic fallbacks out of the box.

Vercel claims zero markup on token pricing, and charges at provider list prices. They offer a free $5 monthly credit for new accounts, and support BYOK (bring your own key) with no additional fees. They also have better video generation support than OpenRouter, with dedicated image and video model categories.

Key Differences from OpenRouter

While Vercel claims no markup, some users have found that pricing for certain models can differ from OpenRouter, so it's worth comparing for your specific models. The gateway is also constrained by Vercel's serverless infrastructure: function timeouts cap at 5 minutes on Pro plans, and request bodies are limited to 4.5MB. For long-running agent workflows, this can be a real limitation. Neither Vercel AI Gateway nor OpenRouter currently offers robust audio model support.
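If you are unsure whether a workload fits within these limits, a quick pre-flight check can help. The sketch below uses the 4.5MB and 5-minute figures from the paragraph above; it assumes MB means 1024 × 1024 bytes and that the request body is JSON. All names are hypothetical and nothing here calls a Vercel API.

```typescript
// Sketch only: limits come from the paragraph above; MB is assumed to be 1024 * 1024 bytes.
const MAX_BODY_BYTES = 4.5 * 1024 * 1024;
const MAX_DURATION_SECONDS = 5 * 60; // Pro plan function timeout

// UTF-8 size of a well-formed string, computed by hand to avoid runtime dependencies.
function utf8ByteLength(text: string): number {
  let bytes = 0;
  for (let i = 0; i < text.length; i++) {
    const code = text.charCodeAt(i);
    if (code < 0x80) {
      bytes += 1;
    } else if (code < 0x800) {
      bytes += 2;
    } else if (code >= 0xd800 && code <= 0xdbff) {
      bytes += 4; // surrogate pair encodes one 4-byte code point
      i++; // skip the low surrogate
    } else {
      bytes += 3;
    }
  }
  return bytes;
}

interface PreflightResult {
  ok: boolean;
  problems: string[];
}

// Checks a JSON payload and an estimated run time against both limits.
function preflight(payload: object, estimatedSeconds: number): PreflightResult {
  const problems: string[] = [];
  if (utf8ByteLength(JSON.stringify(payload)) > MAX_BODY_BYTES) {
    problems.push("request body exceeds 4.5MB");
  }
  if (estimatedSeconds > MAX_DURATION_SECONDS) {
    problems.push("estimated run time exceeds 5 minutes");
  }
  return { ok: problems.length === 0, problems };
}
```

A failing check is a hint to split the job into smaller requests or to run the long-lived part of an agent workflow somewhere without these caps.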

Comparison Table

| Feature | Vercel AI Gateway | OpenRouter |
|---|---|---|
| Pricing model | Pay-as-you-go | Pay-as-you-go (5.5% credit fee) |
| Markup | Claims zero (provider list price) | 5.5% on credit purchase |
| Free tier | $5/month free credit | Free models with rate limits |
| Open-source models | | |
| Closed-source models | | |
| Image generation | | |
| Video generation | | Experimental |
| Audio models | Limited | |
| Embeddings | | |
| Fallback/routing | | |
| Spend monitoring | Built-in | Usage dashboard |
| BYOK support | Zero fee | |
| SDK integration | AI SDK, Next.js native | OpenAI-compatible |
| Fine-tuning | | |
| Timeout limits | 5 min max (Pro) | No platform limits |
| Model update speed | Fast | Fast |
| Best for | Teams in the Vercel/Next.js ecosystem | Teams wanting provider-agnostic access |

4. Cloudflare Workers AI

Cloudflare Workers AI is a serverless AI inference platform that runs models on Cloudflare's global edge network.

What Makes It Different

Workers AI runs models at the edge, close to your users, which can significantly reduce latency for AI-powered applications. It integrates tightly with the rest of Cloudflare's developer platform, including Workers, R2 storage, Vectorize (vector database), Durable Objects, and AI Gateway for monitoring and control.

Following the Replicate acquisition (November 2025), Cloudflare is integrating Replicate's 50,000+ model catalog, dramatically expanding its offerings. Workers AI also supports LoRA-based fine-tuning, batch inference, and unique features like document-to-markdown conversion for AI pipelines.

Key Differences from OpenRouter

Workers AI only supports open-source models, so no Claude, GPT, or other closed-source options. It uses a proprietary "neurons" pricing system (pay-as-you-go), which makes direct price comparisons with OpenRouter difficult. It's not the fastest at adding new models, though the Replicate integration should accelerate this. Workers AI does support audio (Whisper, Deepgram TTS), embeddings, and reranking models, but does not currently have video generation support.
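One way to make neuron-based pricing comparable to token-based pricing is to convert a measured workload into dollars and then into an effective per-million-token price. The sketch below assumes you already know how many neurons and tokens a workload consumed. The rate and free allowance are caller-supplied parameters, and the example values in the comments are hypothetical placeholders rather than Cloudflare's published pricing.

```typescript
// Sketch only: pricing values are supplied by the caller and are not
// taken from Cloudflare's documentation.
interface NeuronPricing {
  pricePerThousandNeurons: number; // dollars
  freeNeuronsPerDay: number; // set to 0 if no free allowance applies
}

// Dollar cost for one day of usage, after subtracting any free allowance.
function dailyNeuronCost(neuronsUsed: number, pricing: NeuronPricing): number {
  const billable = Math.max(0, neuronsUsed - pricing.freeNeuronsPerDay);
  return (billable / 1000) * pricing.pricePerThousandNeurons;
}

// Converts a dollar cost and a token count into a per-million-token price,
// the unit that token-priced gateways typically quote.
function effectivePricePerMillionTokens(costDollars: number, tokens: number): number {
  if (tokens <= 0) {
    throw new Error("tokens must be positive");
  }
  return (costDollars / tokens) * 1e6;
}

// Example with placeholder numbers: 50,000 neurons at 0.01 per thousand with a
// 10,000-neuron allowance costs about 0.40; spread over 2 million tokens that is
// about 0.20 per million tokens.
```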

Comparison Table

| Feature | Cloudflare Workers AI | OpenRouter |
|---|---|---|
| Pricing model | Neurons (pay-as-you-go) | Pay-as-you-go (5.5% credit fee) |
| Markup | Unclear (neurons pricing) | 5.5% on credit purchase |
| Free tier | | Free models with rate limits |
| Open-source models | | |
| Closed-source models | | Extensive |
| Edge deployment | Global network | |
| Image generation | | |
| Video generation | | Experimental |
| Audio (TTS/STT) | | |
| Embeddings | | |
| Reranking | | Limited |
| Fine-tuning (LoRA) | | |
| Batch inference | | |
| Fallback/routing | Via AI Gateway | |
| Observability | AI Gateway | Usage dashboard |
| Ecosystem integration | Workers, R2, Vectorize, D1 | Standalone API |
| Model update speed | Moderate (improving with Replicate) | Fast |
| Best for | Teams on Cloudflare wanting edge AI with full-stack integration | Teams wanting broad model access without infra lock-in |

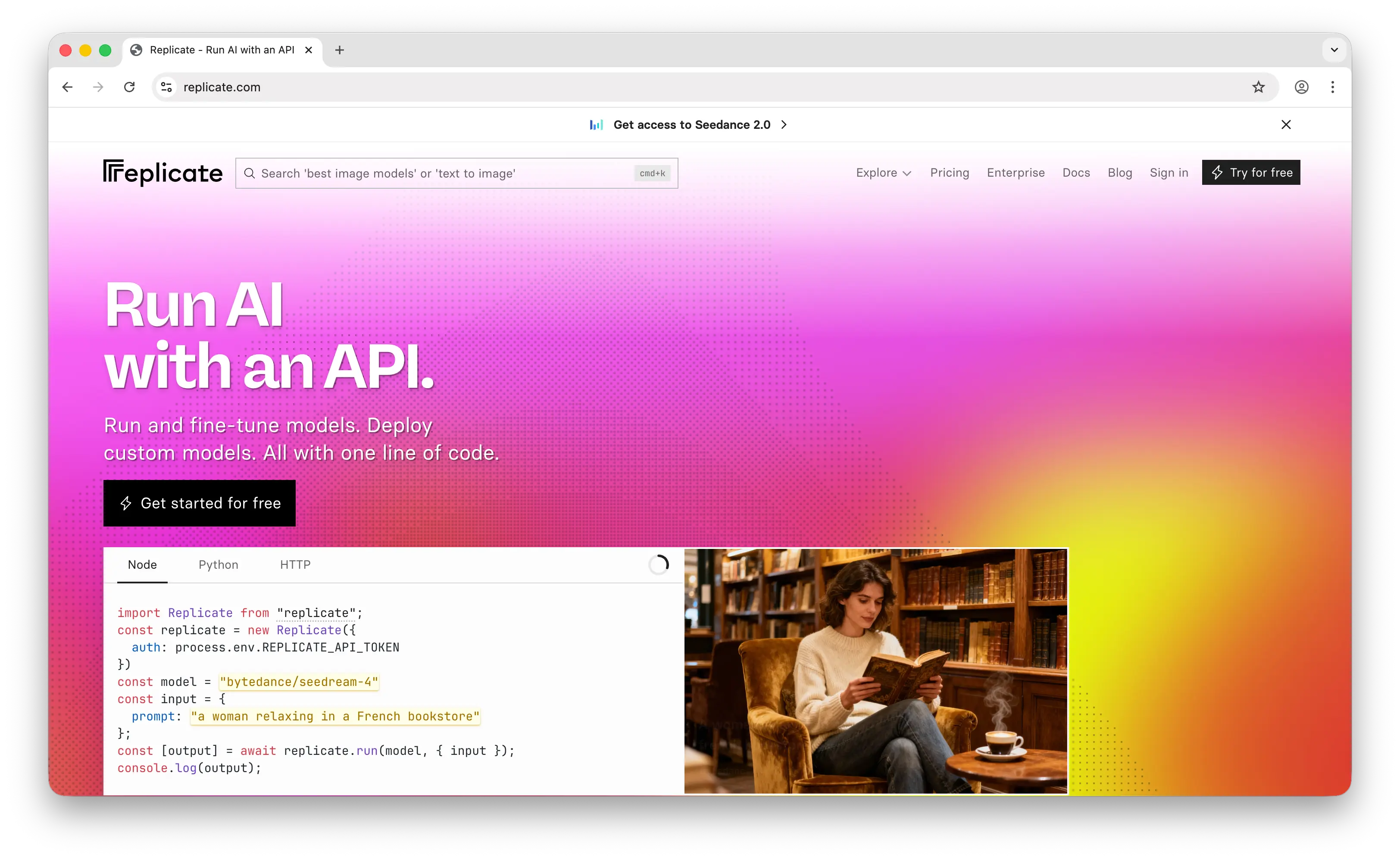

5. Replicate

Replicate is a pay-as-you-go platform for running AI models that grew in popularity alongside the Stable Diffusion wave. It was acquired by Cloudflare in November 2025 but continues to operate as a distinct brand.

What Makes It Different

Replicate has one of the largest model catalogs in the industry, with over 50,000 production-ready models, including models from individual developers and the community, not just big labs. It has excellent media generation support (image, video, audio) with deep customizability, more so than OpenRouter. Models are containerized using Cog, Replicate's open-source packaging format, making it easy for anyone to publish and share models.

Replicate's pricing is based on compute time, where you're charged by how long a model takes to run on GPU hardware, rather than by tokens. This can be more cost-effective for media generation workloads where token-based pricing doesn't apply.
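As a rough illustration of compute-time billing, the sketch below multiplies run time by a per-second hardware price and spreads the result over the outputs of a run. The prices and timings are hypothetical placeholders and the function names are invented for this example.

```typescript
// Sketch only: prices and timings are caller-supplied placeholders.

// Cost of one run billed by GPU time.
function computeTimeCost(runSeconds: number, pricePerSecond: number): number {
  return runSeconds * pricePerSecond;
}

// Average cost per output (for example, per image) when one run yields several outputs.
function costPerOutput(
  runSeconds: number,
  pricePerSecond: number,
  outputs: number
): number {
  if (outputs <= 0) {
    throw new Error("outputs must be positive");
  }
  return computeTimeCost(runSeconds, pricePerSecond) / outputs;
}

// Example with placeholder numbers: an 8-second run at 0.001 per second costs
// about 0.008, or about 0.002 per image if the run produces 4 images.
```

Because the bill depends on run time rather than output size, a faster GPU with a higher per-second price can still come out cheaper per run.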

Key Differences from OpenRouter

Replicate has added chat/LLM models, but its LLM catalog is not as extensive or up-to-date as OpenRouter's. It's fundamentally designed around running models on infrastructure, not routing to providers. There's no automatic fallback between providers like OpenRouter offers. With the Cloudflare acquisition, Replicate's models will increasingly run on Cloudflare's global network, potentially improving performance and integration.
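Where a platform has no automatic fallback, you can approximate it in your own code by trying providers in priority order. The sketch below is generic and synchronous for brevity; real model calls would be asynchronous. It is not OpenRouter's routing logic or any Replicate API, and the provider names used with it would be your own.

```typescript
// Sketch only: a hand-rolled fallback loop over caller-supplied attempts.
interface Attempt<T> {
  name: string; // label for the provider, chosen by the caller
  run: () => T; // performs the call; throws on failure
}

interface FallbackResult<T> {
  provider: string;
  result: T;
}

// Tries each attempt in order and returns the first one that succeeds.
// Throws a combined error if every attempt fails.
function runWithFallback<T>(attempts: Attempt<T>[]): FallbackResult<T> {
  const errors: string[] = [];
  for (const attempt of attempts) {
    try {
      return { provider: attempt.name, result: attempt.run() };
    } catch (error) {
      errors.push(attempt.name + ": " + String(error));
    }
  }
  throw new Error("all providers failed: " + errors.join("; "));
}
```

A production version would likely add timeouts and retry limits, and would only fall back on errors that another provider could plausibly recover from.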

Comparison Table

| Feature | Replicate | OpenRouter |
|---|---|---|
| Pricing model | Per-second compute time | Pay-as-you-go (5.5% credit fee) |
| Markup | Included in compute pricing | 5.5% on credit purchase |
| Chat/LLM models | Growing, not as extensive | Extensive |
| Image generation | Excellent | |
| Video generation | Excellent | Experimental |
| Audio models | | |
| Custom/community models | 50,000+ | |
| Open-source models | | |
| Closed-source models | Some (via API partnerships) | Extensive |
| Fine-tuning | | |
| Model publishing (by anyone) | Via Cog | |
| Fallback/routing | | |
| Ecosystem integration | Cloudflare (Workers, R2, etc.) | Standalone API |
| Model update speed | Moderate for LLMs, fast for media | Fast |
| Best for | Media generation, community models, custom model hosting | Broad LLM access with multi-provider routing |

Which Should You Choose?

Choose Puter.js if you're building a web app and want to add AI features without any backend or API costs. The user-pays model is ideal for developers who don't want to worry about covering user costs.

Choose Together AI if you need to fine-tune open-source models, run batch workloads, or need dedicated GPU infrastructure. It's the most powerful option for ML-heavy teams, but requires more technical expertise.

Choose Vercel AI Gateway if you're already building with Next.js and the Vercel platform. The integration is seamless, and the zero-markup pricing makes it cost-competitive.

Choose Cloudflare Workers AI if you want edge-deployed inference with deep platform integration (storage, databases, serverless compute). The Replicate acquisition is making this increasingly compelling.

Choose Replicate if your focus is media generation (images, video, audio) or you need access to community-published models. Its compute-time pricing model works well for GPU-intensive workloads.

Stick with OpenRouter if you need the broadest model catalog (including closed-source), automatic fallback routing, and a simple unified API without ecosystem lock-in. It remains the most straightforward option for teams that just want to access many LLMs through one endpoint.

Conclusion

The top 5 OpenRouter alternatives are Puter.js, Together AI, Vercel AI Gateway, Cloudflare Workers AI, and Replicate. Each takes a different approach to making AI models accessible, from Puter.js's zero-cost frontend integration to Together AI's GPU infrastructure to Cloudflare's edge network. The best option is the one that fits your stack, your budget, and how your users will interact with AI in your app.
