Top 5 Vertex AI Alternatives (2026)

Vertex AI is Google Cloud's unified ML and AI platform. It gives teams access to Gemini models, third-party models through Model Garden, and a full ML lifecycle: data labeling, AutoML, custom training, pipelines, model monitoring, and enterprise-grade compliance. For organizations already invested in Google Cloud, it's a natural fit.

But that power comes with real friction — a GCP project, billing configuration, IAM setup, and a pricing model with no hard spending caps. If you don't need the full ML platform and just want to call models through an API, most of Vertex AI's weight becomes overhead.

In this article, you'll learn about five Vertex AI alternatives, how they compare, and which one might be the best fit for your project.

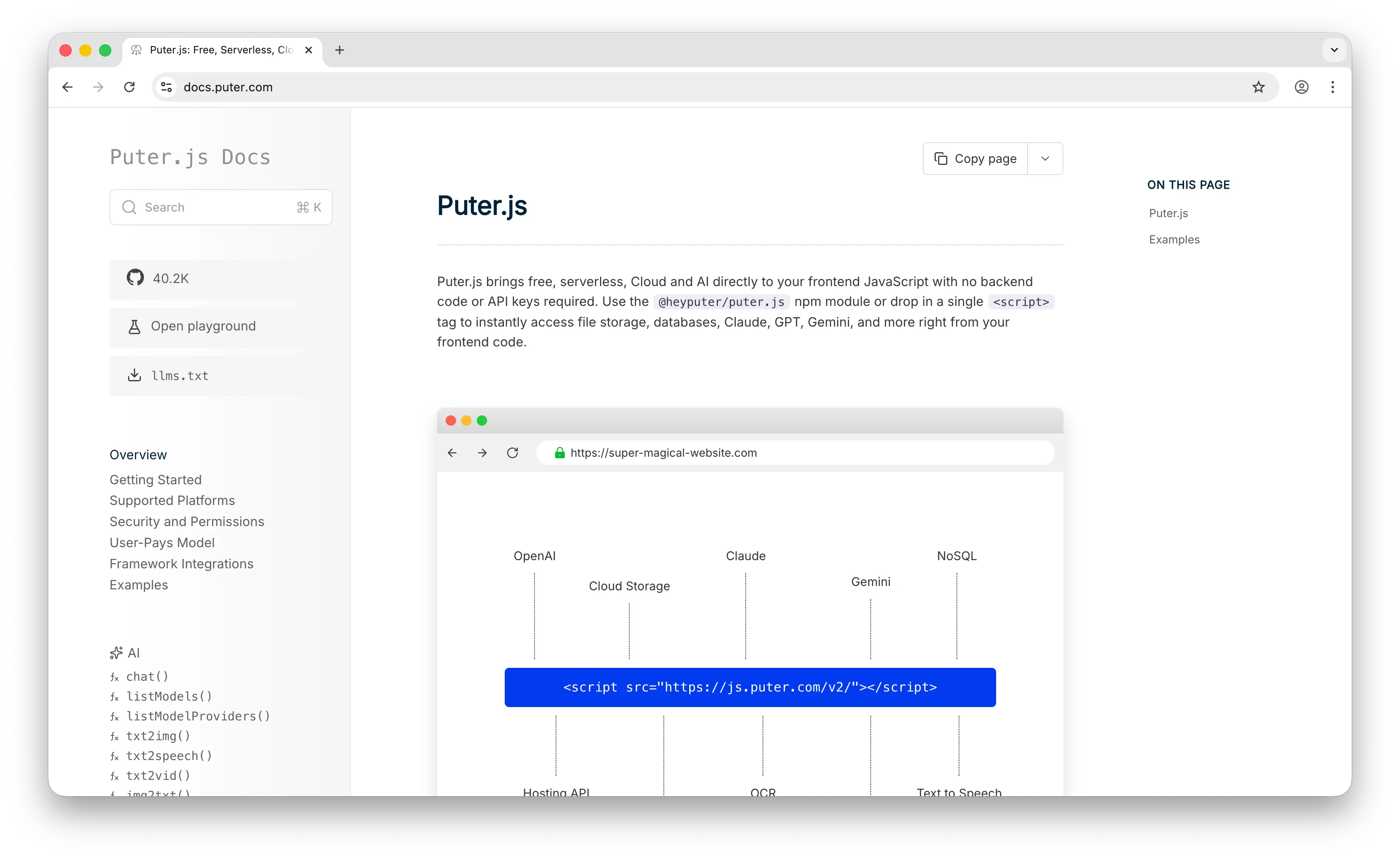

1. Puter.js

Puter.js is a JavaScript library that combines AI, database, cloud storage, authentication, and more into one package. Through a single script tag or npm install, it gives frontend developers access to over 400 AI models from providers like OpenAI, Anthropic, Google, Meta, and others — with no backend required.

What Makes It Different

Puter.js takes a fundamentally different approach to AI infrastructure costs through its User-Pays Model. Instead of developers paying for API usage and managing billing, each app user covers their own AI costs through their Puter account. The developer pays nothing — no API key, no server, no billing dashboard.

Compare that with Vertex AI, where you need a GCP project, a billing account, service account credentials, and endpoint configuration before making a single call. Puter.js collapses all of that into one script tag and a single function call:

<script src="https://js.puter.com/v2/"></script>

<script>

puter.ai.chat("What is life?", { model: "gpt-5.4-nano" }).then(puter.print);

</script>
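Because the model is just an option passed to `puter.ai.chat`, trying another provider is a one-string change. The sketch below is a minimal illustration of that idea, assuming only the `chat(prompt, { model })` call shown above. `compareModels` is a hypothetical helper, not part of Puter.js, and any model ID other than the one used above would be a placeholder.

```javascript
// Hypothetical helper (not part of Puter.js): send one prompt to several models.
// `ai` is expected to look like `puter.ai`, i.e. an object with chat(prompt, options).
async function compareModels(ai, prompt, models) {
  const settled = await Promise.allSettled(
    models.map((model) => ai.chat(prompt, { model }))
  );
  // Keep failures in the result instead of throwing, so one unavailable
  // model does not hide the replies from the others.
  return settled.map((result, i) =>
    result.status === "fulfilled"
      ? { model: models[i], ok: true, reply: result.value }
      : { model: models[i], ok: false, error: result.reason }
  );
}
```

In a page that already loads the script tag above, this could be called as `compareModels(puter.ai, "What is life?", ["gpt-5.4-nano"])`, with further model IDs added to the array as needed.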

Beyond chat models, Puter.js supports text-to-image, image analysis, text-to-video, video analysis, OCR, speech-to-text, text-to-speech, and voice changing. Vertex AI covers many of these modalities as well (Imagen for images, Veo for video, Chirp for speech), but of the two, only Puter.js offers voice changing, and it makes all of these accessible without any infrastructure setup.

Key Differences from Vertex AI

Puter.js is built for frontend web apps, not ML teams. It has no MLOps tooling, no custom model training, no fine-tuning, no dedicated GPU endpoints, no enterprise compliance features, and no embedding models. If you need Vertex AI's full ML lifecycle (AutoML, pipelines, model monitoring, data labeling), Puter.js is not a replacement. It solves a different problem entirely: giving frontend developers instant AI access at no cost to the developer, since each user covers their own usage.

Comparison Table

| Feature | Puter.js | Vertex AI |
|---|---|---|
| Setup complexity | None (single script tag) | High (GCP project, billing, IAM) |
| API key required | No | Yes (service account) |
| Pricing model | User-pays (free for devs) | Pay-as-you-go (per token + compute) |
| Markup | At cost | Google Cloud rates (Gemini 2.5 Pro: $1.25/$10 per MTok) |
| Chat/LLM models | 400+ models | Gemini + Model Garden |
| Image generation | Yes | Imagen |
| Video generation | Yes | Veo |
| Audio (TTS/STT) | Yes | Chirp, Lyria |
| Voice changing | Yes | No |
| Embeddings | No | Yes |
| Fine-tuning | No | Yes |
| Custom model training | No | AutoML + custom |
| MLOps / Pipelines | No | Yes |
| Observability | Limited | Cloud Monitoring |
| Model update speed | Fast | Fast (Gemini); moderate (Model Garden) |
| Best for | Frontend/web app devs who want zero-cost AI integration | Enterprise teams needing full ML lifecycle on GCP |

2. Google AI Studio

Google AI Studio is Google's free, browser-based development environment for building with Gemini models. It provides access to the same Gemini model family as Vertex AI — but through the Gemini Developer API, which has its own pricing tiers, rate limits, and a much simpler onboarding path.

What Makes It Different

Google AI Studio is completely free as an interface — no subscriptions, no seat fees. The Gemini Developer API underneath has a generous free tier for most models, and a pay-as-you-go paid tier. This is a sharp contrast to Vertex AI, which requires a Google Cloud project with billing enabled from the start.

A major addition in March 2026 was Project Spend Caps, which let developers set monthly dollar limits per project — a safeguard that Vertex AI still does not offer as a hard cap. AI Studio also supports prompt engineering tools, structured output validation, batch processing (at 50% discount), and direct code export. For developers and small teams, AI Studio delivers most of the Gemini model access they need at a fraction of Vertex AI's complexity.

There is an important data caveat: on the free tier, Google may use your data to improve its products. The paid tier excludes your data from training.

Key Differences from Vertex AI

AI Studio provides developer-tier access to Gemini, not the enterprise ML platform. Many developers do use the Gemini Developer API in production — it's not just a prototyping tool. But it does not offer Vertex AI's SLAs, compliance certifications (HIPAA, SOC 2), provisioned throughput, VPC Service Controls, custom model training, AutoML, model monitoring, or Agent Engine. It's also limited to Gemini models; Vertex AI's Model Garden gives access to Claude, Llama, Mistral, and others. Note that Vertex AI does offer an Express Mode for limited usage without enabling billing, which partially bridges this gap for experimentation.

Comparison Table

| Feature | Google AI Studio | Vertex AI |
|---|---|---|
| Setup complexity | Google account only | GCP project + billing + IAM |
| Pricing model | Free tier + pay-as-you-go | Pay-as-you-go (per token + compute) |
| Free tier | Generous (most models) | $300 credit (90 days); Express Mode |
| Spend caps | Project-level | No hard caps |
| Gemini models | Full access | Full access |
| Third-party models | Gemini only | Model Garden (Claude, Llama, etc.) |
| Image generation | Imagen 4 | Imagen 4 |
| Video generation | Veo 3.1 | Veo 3.1 |
| Audio generation | Lyria | Lyria |
| Batch processing | 50% discount | Yes |
| Grounding with Search | Yes | Yes |
| Fine-tuning | Limited | Full |
| Custom model training | No | Yes |
| MLOps / Pipelines | No | Yes |
| Observability | Usage dashboards | Cloud Monitoring |
| Enterprise SLAs | No | Yes |
| Agent tooling | No | Agent Engine, Agent Builder |
| Model update speed | Fast | Fast (Gemini); moderate (Model Garden) |
| Best for | Developers wanting fast, low-cost Gemini access | Enterprise teams needing SLAs, compliance, and full ML tooling |

3. OpenAI Platform

The OpenAI Platform is OpenAI's developer API and tooling suite, providing access to the GPT-5.4 model family, reasoning models, image generation, video generation, speech-to-speech, and a growing suite of agent-building tools.

What Makes It Different

The OpenAI Platform is a model-first developer platform with an increasingly agentic focus. It offers the Responses API (their unified interface for agent workflows), Agent Builder (visual agent design), the Agents SDK (code-first), Realtime API for voice agents, and built-in tools like web search, code interpreter, and file search. These are purpose-built primitives for building autonomous AI applications — a layer of abstraction that Vertex AI handles differently through its Agent Engine and ADK.

OpenAI's model lineup is one of the broadest from a single provider: GPT-5.4 for complex reasoning ($2.50/$15 per MTok), GPT-5.4-mini for balanced workloads ($0.75/$4.50), GPT-5.4-nano for high-volume tasks ($0.20/$1.25), the Codex line for agentic coding (GPT-5.3-Codex, GPT-5.2-Codex), and the o-series reasoning models for tasks requiring deeper deliberation. The Batch API offers 50% off for asynchronous processing, and prompt caching provides roughly 90% off on repeated input tokens.
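To make the tiers concrete, here is a rough cost estimator built only from the per-MTok prices and the 50% batch discount quoted above. It is a sketch, not an official calculator: `estimateCost` and the lowercase keys in `PRICES` are illustrative names, and prompt caching is left out.

```javascript
// USD per million tokens (MTok), copied from the paragraph above.
// The keys are illustrative labels, not guaranteed API model IDs.
const PRICES = {
  "gpt-5.4": { input: 2.5, output: 15 },
  "gpt-5.4-mini": { input: 0.75, output: 4.5 },
  "gpt-5.4-nano": { input: 0.2, output: 1.25 },
};

// Estimate the cost of a workload in USD; `batch: true` applies the 50% Batch API discount.
function estimateCost(model, inputTokens, outputTokens, { batch = false } = {}) {
  const price = PRICES[model];
  if (!price) throw new Error(`No price listed for ${model}`);
  const cost =
    (inputTokens / 1e6) * price.input + (outputTokens / 1e6) * price.output;
  return batch ? cost * 0.5 : cost;
}
```

For a workload of 10M input tokens and 2M output tokens, this works out to $55 on GPT-5.4, $16.50 on GPT-5.4-mini, and $4.50 on GPT-5.4-nano, or half of each figure when run through the Batch API.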

OpenAI also provides fine-tuning (including reinforcement fine-tuning), model distillation, image generation (GPT Image), video generation (Sora 2, though deprecation is scheduled for September 2026), and open-weight models (GPT-OSS) under Apache 2.0 for self-hosting.

Key Differences from Vertex AI

The OpenAI Platform is single-provider — you only get OpenAI's own models. Vertex AI's Model Garden gives access to models from Anthropic, Meta, Mistral, and others alongside Gemini. OpenAI also doesn't offer the full ML lifecycle: no AutoML, no custom model training from scratch, no data labeling, no model monitoring dashboards, and no integrated data/storage services. Vertex AI is a cloud ML platform; the OpenAI Platform is an AI model API with developer tooling.

Comparison Table

| Feature | OpenAI Platform | Vertex AI |
|---|---|---|
| Setup complexity | Account + API key | GCP project + billing + IAM |
| Pricing model | Pay-as-you-go (per token) | Pay-as-you-go (per token + compute) |
| Free tier | Limited free credits | $300 credit (90 days); Express Mode |
| Chat/LLM models | GPT-5.4 family, Codex, o-series | Gemini + Model Garden |
| Third-party models | OpenAI only | Claude, Llama, Mistral, etc. |
| Image generation | GPT Image | Imagen |
| Video generation | Sora 2 (sunsetting Sep 2026) | Veo |
| Audio / Voice | Realtime API, TTS | Chirp, Lyria |
| Embeddings | Yes | Yes |
| Batch processing | 50% discount | Yes |
| Prompt caching | ~90% off | Yes |
| Fine-tuning | Including reinforcement | Yes |
| Agent tooling | Responses API, Agents SDK, Agent Builder | Agent Engine, ADK |
| Open-weight models | GPT-OSS (Apache 2.0) | Model Garden (Llama, Gemma, etc.) |
| Custom model training | No | AutoML + custom |
| MLOps / Pipelines | No | Yes |
| Observability | Evals framework, usage dashboards | Cloud Monitoring |
| Data residency | EU available (10% uplift) | Multi-region |
| Model update speed | Fast | Fast (Gemini); moderate (Model Garden) |
| Best for | Developers building AI-first apps with OpenAI models | Enterprise teams needing multi-model access + GCP integration |

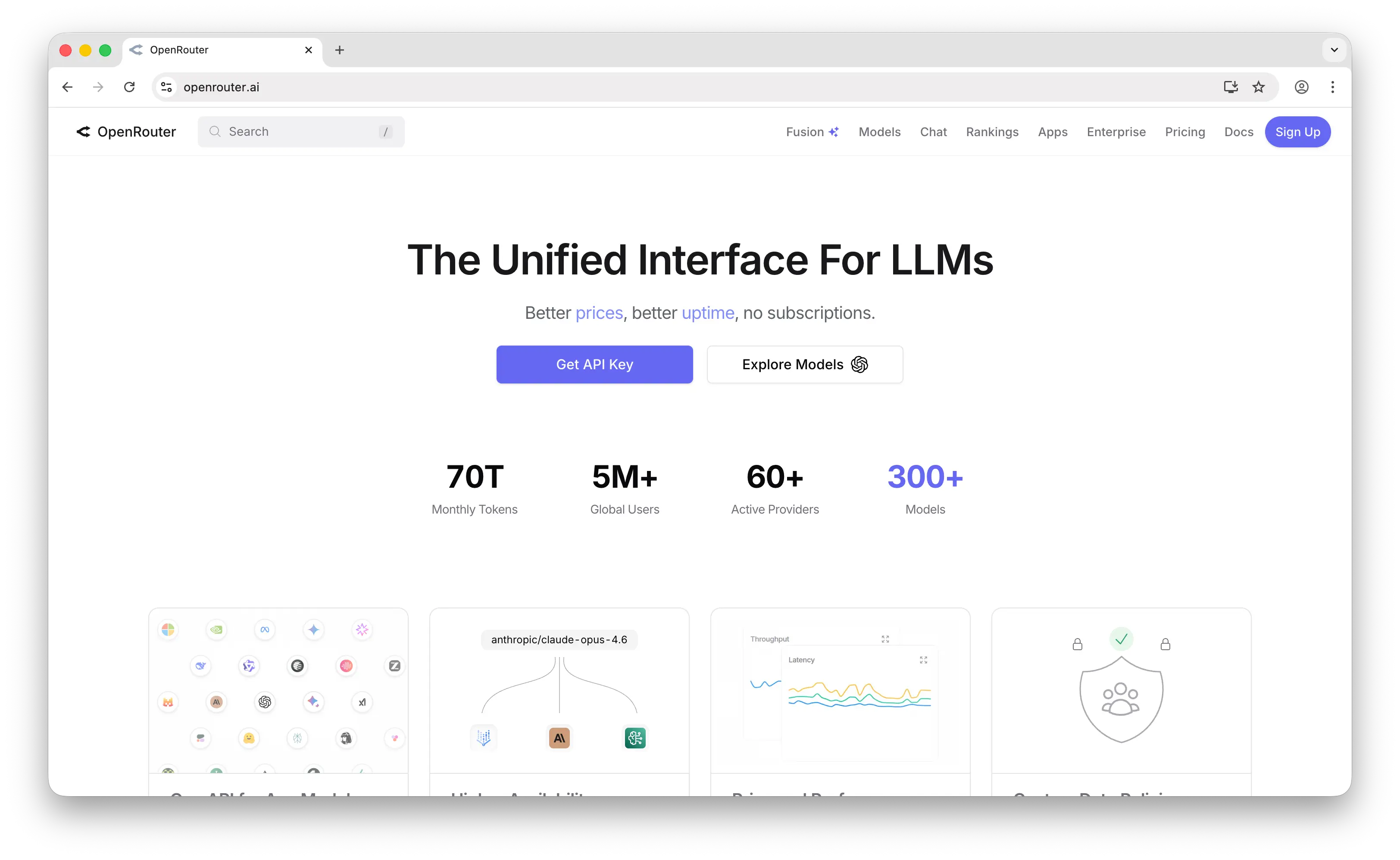

4. OpenRouter

OpenRouter is a unified API gateway that provides access to 300+ models from 60+ providers through a single API key, with automatic fallback routing when providers go down.

What Makes It Different

OpenRouter is the multi-provider model router. Where Vertex AI's Model Garden gives you access to third-party models within the Google Cloud ecosystem (with GCP billing, IAM, and data governance), OpenRouter gives you access to models from every major provider — OpenAI, Anthropic, Google, Meta, Mistral, DeepSeek, and many more — through a single, OpenAI-compatible API endpoint. Change one parameter to switch models. No cloud accounts, no IAM setup, no vendor lock-in.

OpenRouter passes through provider pricing with no markup on inference. The platform charges a 5.5% fee ($0.80 minimum) when you purchase credits. It also supports BYOK (bring your own key) with the first 1M requests/month free and a 5% usage fee after that. Automatic fallback routing means if one provider goes down, your request is transparently routed to another, and you're only billed for the successful run.
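The credit fee is easy to misjudge on small top-ups because of the minimum. The sketch below works through it using only the 5.5% rate and $0.80 minimum quoted above. It assumes the minimum applies per purchase, and `creditPurchaseFee` and `effectiveFeeRate` are illustrative names, not part of any OpenRouter SDK.

```javascript
const FEE_RATE = 0.055; // 5.5% platform fee on credit purchases
const MIN_FEE_USD = 0.8; // minimum fee, assumed here to apply per purchase

// Fee in USD for buying `amountUsd` worth of credits.
function creditPurchaseFee(amountUsd) {
  if (!(amountUsd > 0)) throw new RangeError("amountUsd must be positive");
  return Math.max(amountUsd * FEE_RATE, MIN_FEE_USD);
}

// Fee as a fraction of the purchase, which shows the effect of the minimum.
function effectiveFeeRate(amountUsd) {
  return creditPurchaseFee(amountUsd) / amountUsd;
}
```

Under these assumptions a $10 purchase pays the $0.80 minimum (an effective 8%), while a $100 purchase pays about $5.50. The minimum stops mattering above roughly $14.55 per purchase.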

OpenRouter also offers dynamic variants that give developers fine-grained control: :nitro for throughput-optimized routing, :floor for cost-optimized routing, :exacto for tool-calling reliability, and :free for free-tier models with rate limits. Vertex AI recently introduced Model Optimizer, which automatically routes Gemini queries to the best-fit model (Flash, Pro, etc.) based on a cost/quality preference — a similar concept, but limited to Gemini models only.
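A variant is simply a suffix on the model slug, so it can be applied when the request body is built. The following is a minimal sketch assuming the standard OpenAI-style chat payload (`model` plus `messages`). `withVariant` and `buildChatRequest` are hypothetical helpers, and `example-provider/example-model` is a placeholder slug rather than a real catalog entry.

```javascript
// Variant suffixes named in the paragraph above.
const VARIANTS = new Set(["nitro", "floor", "exacto", "free"]);

// Append a routing variant to a model slug, replacing any existing suffix.
function withVariant(modelId, variant) {
  if (!VARIANTS.has(variant)) throw new Error(`Unknown variant: ${variant}`);
  const base = modelId.split(":")[0];
  return `${base}:${variant}`;
}

// Build an OpenAI-compatible chat request body for the chosen model and variant.
function buildChatRequest(modelId, variant, userMessage) {
  return {
    model: withVariant(modelId, variant),
    messages: [{ role: "user", content: userMessage }],
  };
}
```

For instance, `buildChatRequest("example-provider/example-model", "floor", "Hello")` yields a body whose `model` field is `example-provider/example-model:floor`. Switching to throughput-optimized routing means changing only the variant argument.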

Key Differences from Vertex AI

OpenRouter is purely an API gateway — it does not train, fine-tune, or host models. There's no custom model training, no MLOps, no data labeling, no model monitoring, and no cloud infrastructure integration. It also lacks Vertex AI's enterprise compliance features out of the box, though Enterprise plans offer SLAs, SSO, and regional routing. If you need the full ML platform, OpenRouter won't replace Vertex AI. But if you primarily use Vertex AI to call Gemini and third-party models via API, OpenRouter offers a dramatically simpler and more flexible alternative with far broader model coverage.

Comparison Table

| Feature | OpenRouter | Vertex AI |
|---|---|---|
| Setup complexity | API key only | GCP project + billing + IAM |
| Pricing model | Pay-as-you-go (5.5% fee on credit purchase) | Pay-as-you-go (per token + compute) |
| Markup | No markup on inference (5.5% on credit purchase) | Google Cloud rates |
| Free tier | 25+ free models | $300 credit (90 days); Express Mode |
| Model catalog | 300+ from 60+ providers | Gemini + Model Garden |
| OpenAI models | Yes | No |
| Anthropic models | Yes | Model Garden |
| Google models | Yes | Native |
| Open-source models | Extensive | Model Garden |
| Fallback / routing | Multi-provider automatic | Limited (Model Optimizer, Gemini only) |
| BYOK support | Yes | N/A (direct provider) |
| Image generation | | Imagen |
| Video generation | Experimental | Veo |
| Audio models | | Chirp, Lyria |
| Embeddings | | Yes |
| Fine-tuning | No | Yes |
| Custom model training | No | Yes |
| MLOps / Pipelines | No | Yes |
| Observability | Usage dashboard, per-key tracking | Cloud Monitoring |
| Enterprise SLAs | Enterprise plan (contact sales) | Yes |
| Data residency | Regional routing (Enterprise) | Multi-region |
| SDK compatibility | OpenAI-compatible | Google Cloud SDKs |
| Model update speed | Fast | Fast (Gemini); moderate (Model Garden) |
| Best for | Developers wanting multi-provider access without cloud lock-in | Enterprise teams needing GCP-integrated ML platform |

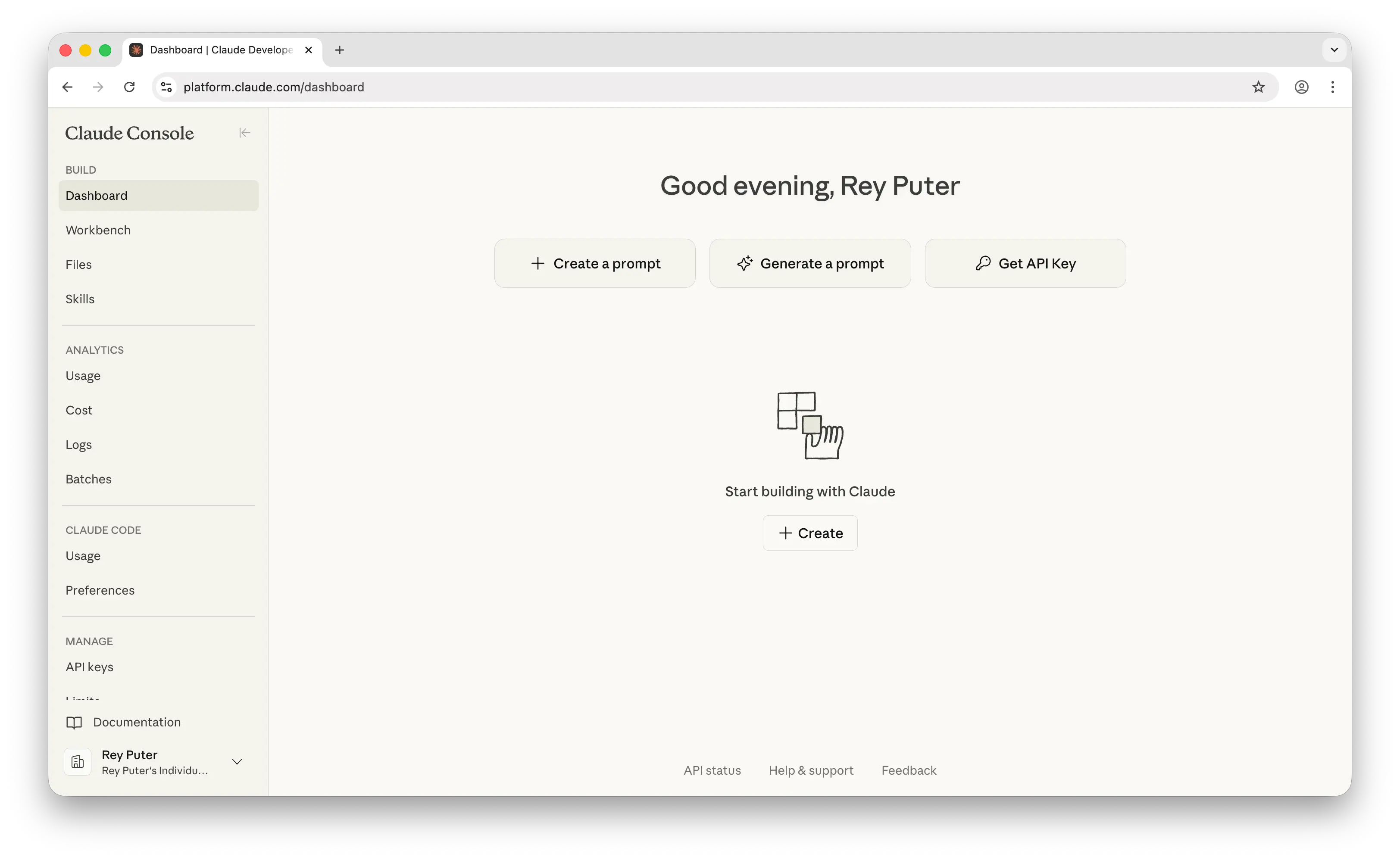

5. Anthropic Console

The Anthropic Console is Anthropic's developer platform for building with Claude models. It provides API access, a prompt engineering Workbench, evaluation tools, and a growing suite of production features including managed agents and a CLI.

What Makes It Different

The Anthropic Console is built around Claude's core capabilities: a 1M token context window (GA on Opus 4.6 and Sonnet 4.6 at standard pricing with no surcharge), extended thinking for step-by-step reasoning, and computer use capabilities. The recently launched Claude Managed Agents (public beta, April 2026) provides a fully managed agent harness with secure sandboxing, built-in tools, and server-sent event streaming — directly competitive with Vertex AI's Agent Engine.

Anthropic's pricing has become significantly more competitive: Opus 4.6 at $5/$25 per million tokens represents a 67% reduction from the Opus 4.1 era ($15/$75). Prompt caching offers 90% off on cache hits, in line with the roughly 90% cached-input discount cited for OpenAI above, and the Batch API provides 50% off for asynchronous workloads.
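The arithmetic behind these figures can be checked with a short sketch. It uses only the prices and the 90% cache-hit discount stated above. `percentReduction` and `inputCostUsd` are illustrative helpers, and the calculation ignores any charge for writing to the cache, which this article does not cover.

```javascript
// Percentage drop from an old price to a new one.
function percentReduction(oldPrice, newPrice) {
  return ((oldPrice - newPrice) / oldPrice) * 100;
}

// Input cost in USD when `cachedTokens` of `totalTokens` are served as cache hits.
// `pricePerMTok` is the standard input price; cache hits are billed at (1 - cacheDiscount).
function inputCostUsd(totalTokens, cachedTokens, pricePerMTok, cacheDiscount = 0.9) {
  const freshTokens = totalTokens - cachedTokens;
  const cachedPrice = pricePerMTok * (1 - cacheDiscount);
  return (freshTokens * pricePerMTok + cachedTokens * cachedPrice) / 1e6;
}
```

Going from $15 to $5 on input, or from $75 to $25 on output, is a drop of about 66.7%, which rounds to the 67% quoted. With Opus 4.6 input at $5 per MTok, a 1M-token prompt in which 800K tokens are cache hits costs roughly $1.40 instead of $5.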

The Workbench is a standout developer tool: it offers prompt generation, prompt improvement, test suite creation, side-by-side output comparison, evaluation grading, and direct code export. The Console also recently added shareable prompts for team collaboration, the ant CLI for terminal-based API interaction, a Usage & Cost Admin API for programmatic spend tracking, and MCP (Model Context Protocol) server support for tool integrations.

Key Differences from Vertex AI

The Anthropic Console is a single-model-family platform — you only get Claude models (Opus, Sonnet, Haiku). If you need Gemini, GPT, or open-source models, you'll need another provider. It also lacks Vertex AI's ML lifecycle tooling: no custom model training, no AutoML, no data labeling, no pipeline orchestration, and no integrated data services. Worth noting: Vertex AI also supports MCP through its Agent Development Kit (ADK) and provides Google-managed MCP servers, so MCP support alone isn't a reason to choose one over the other. However, for teams building Claude-centric applications that value prompt engineering tooling and deep context windows, the Anthropic Console offers a more focused experience than navigating Vertex AI's Model Garden for Claude access.

Comparison Table

| Feature | Anthropic Console | Vertex AI |
|---|---|---|
| Setup complexity | Account + API key | GCP project + billing + IAM |
| Pricing model | Pay-as-you-go (per token) | Pay-as-you-go (per token + compute) |
| Free tier | Small free credits | $300 credit (90 days); Express Mode |
| Claude models | Full (Opus 4.6, Sonnet 4.6, Haiku 4.5) | Model Garden (limited selection) |
| Gemini models | No | Native |
| Context window | 1M tokens (standard pricing) | 1M tokens (Gemini); varies by model |
| Extended thinking | Yes | Yes (Gemini thinking models) |
| Batch processing | 50% discount | Yes |
| Prompt caching | 90% off cache hits | Yes |
| Prompt engineering tools | Workbench (generate, evaluate, improve) | Vertex AI Studio |
| Agent tooling | Managed Agents, Claude Code | Agent Engine, ADK |
| Web search (API) | Yes | Grounding with Search |
| Code execution (API) | Sandboxed | Yes |
| Computer use | Yes | |
| MCP support | Native | Via ADK + managed servers |
| Fine-tuning | No | Yes |
| Custom model training | No | Yes |
| MLOps / Pipelines | No | Yes |
| Observability | Usage & Cost API, console dashboards | Cloud Monitoring |
| Enterprise SLAs | Enterprise plan | Yes |
| Data residency | | Yes |
| Model update speed | Fast | Fast (Gemini); moderate (Model Garden) |
| Best for | Teams building Claude-centric apps with strong prompt tooling | Enterprise teams needing multi-model access + full ML platform |

Which Should You Choose?

Choose Puter.js if you're building a web app and want to add AI features without any backend, API keys, or infrastructure costs. The user-pays model is ideal for indie developers and small teams who don't want to manage billing, and the 400+ model catalog means you're not locked into any single provider.

Choose Google AI Studio if you want access to Gemini models with minimal setup and strong cost controls. It's the easiest on-ramp to Google's AI, with a generous free tier, project-level spend caps, and batch processing discounts. Best for developers and small teams who don't need Vertex AI's enterprise features.

Choose the OpenAI Platform if you're building AI-first applications around OpenAI's model ecosystem. The GPT-5.4 family, Codex models for agentic coding, agent-building tools (Responses API, Agents SDK), and Realtime API for voice make it the most complete single-provider developer platform.

Choose OpenRouter if you need access to models from every major provider through a single API without cloud lock-in. Automatic fallback routing, no markup on inference pricing, OpenAI SDK compatibility, and dynamic routing variants make it the most flexible option for teams that want to compare, switch, or combine models.

Choose the Anthropic Console if Claude is your primary model and you value prompt engineering tooling, a 1M token context window at standard pricing, deep prompt caching discounts, and agentic capabilities like Managed Agents and Claude Code.

Stick with Vertex AI if you need the full Google Cloud ML lifecycle — custom model training, AutoML, ML pipelines, model monitoring, data labeling, and enterprise compliance — integrated with GCP services like BigQuery, Cloud Storage, and VPC.

Conclusion

The best Vertex AI alternatives are Puter.js, Google AI Studio, the OpenAI Platform, OpenRouter, and the Anthropic Console. Each serves a different need: Puter.js eliminates infrastructure entirely for frontend developers, Google AI Studio offers the simplest path to Gemini, the OpenAI Platform provides the deepest single-provider tooling, OpenRouter gives unmatched multi-provider flexibility, and the Anthropic Console delivers focused Claude development tools. The right choice depends on whether you need a full ML platform, a simple model API, or something in between.
