Best AWS Bedrock Alternatives (2026)


AWS Bedrock is Amazon's fully managed service for accessing 100+ foundation models from providers like Anthropic, Meta, Mistral, OpenAI, and others through a single API, with enterprise-grade security and deep AWS ecosystem integration. It's a strong choice for teams already invested in AWS infrastructure.

But Bedrock's enterprise focus comes with overhead: multi-layered pricing, IAM configuration, and SDK setup that can feel heavy if you just want to call a model. Depending on your use case, some alternatives offer simpler setup, lower costs, or broader model access.

In this article, you'll learn about five AWS Bedrock alternatives, how they compare, and which one might be the best fit for your project.

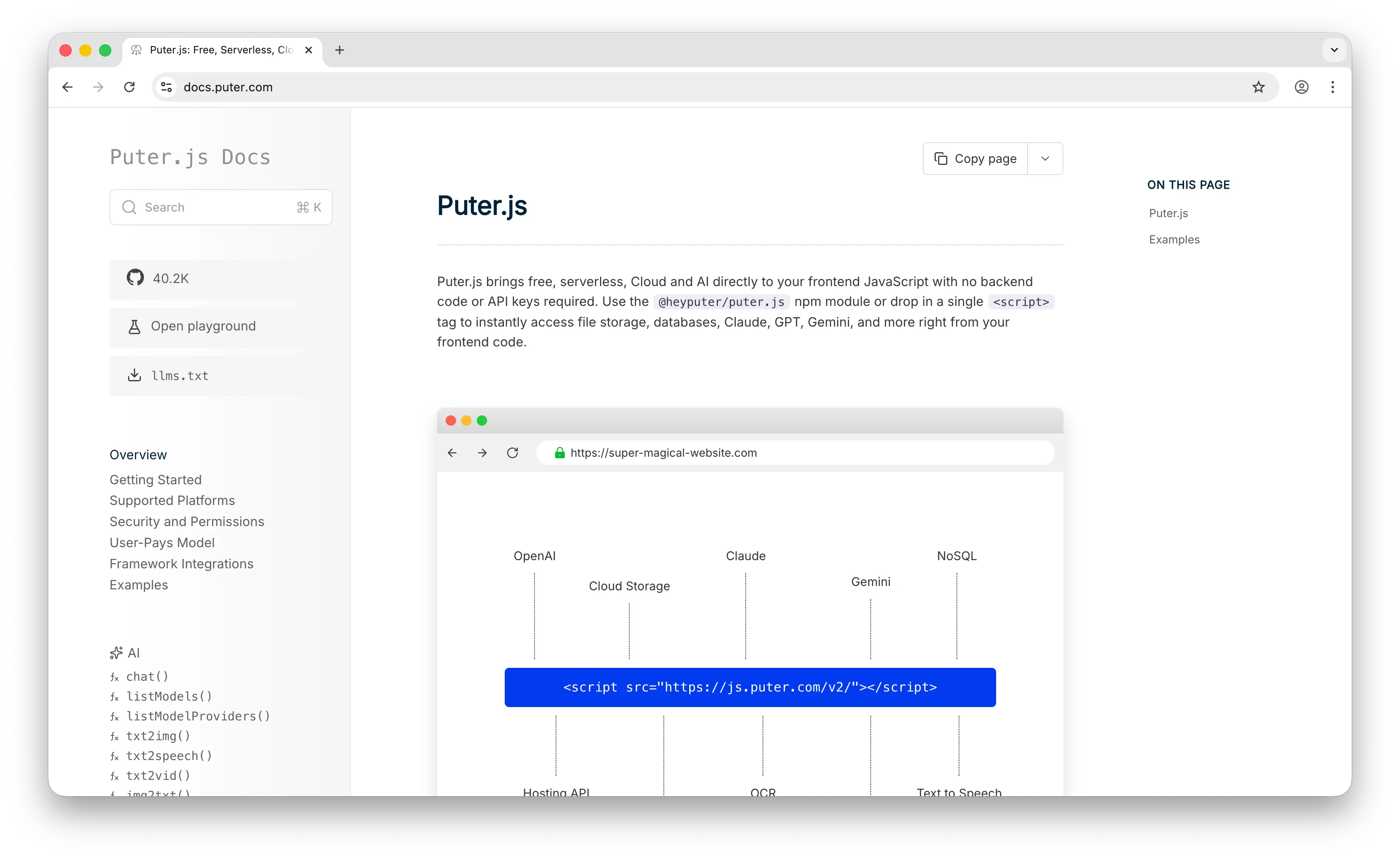

1. Puter.js

Puter.js is a JavaScript library that bundles AI, database, cloud storage, authentication, and more into a single package. Where Bedrock requires an AWS account, IAM credentials, and SDK configuration before you can make your first inference call, Puter.js needs just a single <script> tag. It supports over 400 AI models from providers like OpenAI, Anthropic, Google, Meta, and others, rivaling Bedrock's multi-provider catalog without any of the infrastructure overhead.

What Makes It Different

Puter.js pioneered the User-Pays Model: your app's users cover their own AI usage costs through their Puter accounts. This means developers can add AI features to their apps for free, with no API key, backend, or server-side setup required. This is the polar opposite of Bedrock's model, where the developer absorbs all inference costs.

Puter.js also goes well beyond chat models. It supports image generation, image analysis, video generation, video analysis, OCR, speech-to-text, text-to-speech, and voice changing, capabilities that Bedrock either doesn't support natively or requires separate AWS services for.

Key Differences from AWS Bedrock

Puter.js is primarily designed for frontend web apps. While it works in Node.js, the user-pays model is most natural in a browser context. It does not offer enterprise features like VPC integration, Guardrails, Knowledge Bases, or batch inference, and it doesn't currently support embeddings or fine-tuning.

Comparison Table

| Feature | Puter.js | AWS Bedrock |
|---|---|---|
| API key required | No | Yes (IAM credentials) |
| Pricing model | User-pays (free for devs) | Pay-as-you-go (per token) |
| Markup | At cost | Provider list price (no documented markup) |
| Setup complexity | One script tag | AWS account, IAM, SDK, region config |
| Chat models | Yes (400+ models) | Yes (100+ models) |
| Image generation | Yes | Yes (Stability AI, Nova Canvas) |
| Video generation | Yes | Yes (Nova Reel, Luma AI) |
| Audio (TTS/STT) | Yes | Yes (Nova Sonic, Whisper via Marketplace) |
| Embeddings | No | Yes |
| Fine-tuning | No | Yes (select models) |
| Batch inference | No | Yes (50% discount) |
| Provisioned throughput | No | Yes (guaranteed capacity) |
| Knowledge Bases / RAG | No | Yes |
| Guardrails | No | Yes |
| Agents | No | Yes (AgentCore) |
| VPC / data residency | No | Yes |
| Open-source models | Yes | Yes |
| Closed-source models | Yes | Yes |
| Model update speed | Fast | Moderate |
| Best for | Frontend/web app devs who want zero-cost AI integration | Enterprise teams needing managed infra with full AWS integration |

2. Google AI Studio

Google AI Studio is a free, browser-based development environment for prototyping and building with Google's Gemini models. It provides direct access to the Gemini API, prompt testing, model fine-tuning, and media generation, all in one place.

What Makes It Different

Google AI Studio's biggest draw is its generous free tier. The AI Studio interface itself is completely free, and the underlying Gemini API offers a free tier for several models, making it one of the most cost-effective ways to access frontier AI models. This stands in stark contrast to Bedrock, where every inference call is billed.

The platform is also deeply multimodal, with native access to Gemini models for text, Imagen and Gemini for image generation, Veo for video generation, and Lyria for music generation, as well as text-to-speech and real-time audio via the Live API.

Key Differences from AWS Bedrock

Google AI Studio is limited to Google's own models (Gemini, Imagen, Veo, Gemma, etc.). You cannot access Claude, Llama, Mistral, or other third-party models. For enterprise features like SLAs and VPC integration, Google steers developers toward Vertex AI, its enterprise-grade counterpart.

On the free tier, Google may also use your prompts to improve its products, and free-tier quotas have tightened over time, so production use cases will likely need the paid tier.

Comparison Table

| Feature | Google AI Studio | AWS Bedrock |
|---|---|---|
| Pricing model | Free tier + pay-as-you-go | Pay-as-you-go (per token) |
| Free tier | Yes (free AI Studio + API, with rate limits) | $200 in credits for new accounts |
| Markup | None (direct Google pricing) | Provider list price (no documented markup) |
| Multi-provider models | No (Google models only) | Yes (15+ providers) |
| Chat models | Yes (Gemini family) | Yes (Anthropic, Meta, Mistral, etc.) |
| Image generation | Yes (Imagen, Gemini) | Yes (Stability AI, Nova Canvas) |
| Video generation | Yes (Veo) | Yes (Nova Reel, Luma AI) |
| Audio / TTS | Yes (Gemini TTS, Live API) | Yes (Nova Sonic) |
| Music generation | Yes (Lyria) | No |
| Embeddings | Yes (multimodal embeddings) | Yes |
| Fine-tuning |  | Yes (select models) |
| Batch inference | Yes (50% off) | Yes (50% off) |
| Provisioned throughput | No | Yes (guaranteed capacity) |
| Grounding (web search) | Yes (Google Search, Maps) | Via Agents only |
| Knowledge Bases / RAG | Via Vertex AI | Yes |
| Guardrails | Safety settings per request | Yes (configurable) |
| VPC / data residency | Via Vertex AI | Yes |
| Vibe coding / app builder | Yes (Antigravity) | No |
| Model update speed | Fastest (first-party Gemini) | Moderate |
| Best for | Developers wanting free prototyping and cheap production with Google models | Enterprise teams needing multi-provider access on AWS |

3. OpenRouter

OpenRouter is a unified API gateway that provides access to 300+ AI models from 60+ providers, including Anthropic, OpenAI, Google, DeepSeek, Meta, Mistral, xAI, and more, all through a single, OpenAI-compatible API.

What Makes It Different

OpenRouter's core value proposition is simplicity and breadth. One API key gets you access to every major model, with no AWS account, no IAM configuration, and no SDK lock-in. The API is fully OpenAI-compatible, so most existing code works with just a base URL change.
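
To make the "base URL change" concrete, here is a minimal sketch using only Python's standard library. It builds, but does not send, an OpenAI-style chat completion request for two endpoints: OpenAI's own API and OpenRouter's OpenAI-compatible endpoint (assumed here to be `https://openrouter.ai/api/v1`). The API keys and model IDs are placeholders, and `build_chat_request` is a hypothetical helper, not part of either provider's SDK.

```python
import json
import urllib.request

# Base URLs of two OpenAI-compatible endpoints (OpenRouter URL assumed; verify in its docs).
OPENAI_BASE_URL = "https://api.openai.com/v1"
OPENROUTER_BASE_URL = "https://openrouter.ai/api/v1"


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request (hypothetical helper)."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Same payload shape for both; only the base URL, key, and model ID change.
# Keys and model IDs below are placeholders.
direct = build_chat_request(OPENAI_BASE_URL, "sk-openai-placeholder", "gpt-4o", "Hello")
routed = build_chat_request(OPENROUTER_BASE_URL, "sk-or-placeholder", "openai/gpt-4o", "Hello")

print(routed.full_url)  # prints https://openrouter.ai/api/v1/chat/completions
```

To actually send the request you would pass it to `urllib.request.urlopen`, or point the official `openai` client's `base_url` at OpenRouter; the rest of the calling code stays the same.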

OpenRouter does not mark up per-token pricing. Instead, it applies a 5.5% fee when you purchase credits. It also offers automatic fallback routing when providers go down, free models with no credit card required, and performance-optimized :nitro variants for lower latency.
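
The credit fee is easy to reason about with a little arithmetic. The sketch below assumes the fee is a flat 5.5% of the credit amount with no minimum charge or other adjustments (check OpenRouter's current pricing page before relying on it); `checkout_total` is a hypothetical helper for illustration.

```python
from decimal import Decimal, ROUND_HALF_UP

# Assumption: a flat 5.5% fee on every credit purchase, no minimum fee.
CREDIT_FEE_RATE = Decimal("0.055")


def checkout_total(credits_usd: str) -> Decimal:
    """Amount charged at checkout to receive `credits_usd` worth of credits."""
    credits = Decimal(credits_usd)
    total = credits * (1 + CREDIT_FEE_RATE)
    # Round to whole cents, as a card charge would be.
    return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


for monthly_spend in ("100", "10000"):
    total = checkout_total(monthly_spend)
    fee = total - Decimal(monthly_spend)
    print(f"${monthly_spend} of credits costs ${total} (fee: ${fee})")
```

Because the fee is charged on credits rather than per token, it acts like a uniform 5.5% surcharge on all model usage: $5.50 on $100 of credits, but $550 on $10,000, which is why it matters more for high-volume workloads.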

Key Differences from AWS Bedrock

OpenRouter is a pure routing layer, not an infrastructure platform. It does not offer Knowledge Bases, Agents, Guardrails, fine-tuning, batch inference, or VPC integration. For teams that primarily need multi-model access without the operational overhead of Bedrock, OpenRouter is significantly easier to adopt.

Comparison Table

| Feature | OpenRouter | AWS Bedrock |
|---|---|---|
| Pricing model | Pay-as-you-go (5.5% credit fee) | Pay-as-you-go (per token) |
| Markup | 5.5% on credit purchase | Provider list price (no documented markup) |
| Free tier | 25+ free models (rate-limited) | $200 in credits for new accounts |
| Model catalog | 300+ models, 60+ providers | 100+ models, 15+ providers |
| API compatibility | OpenAI-compatible | AWS SDK, OpenAI-compatible (Mantle) |
| Setup complexity | Sign up, add credits, get API key | AWS account, IAM, SDK, region config |
| Fallback / routing | Yes (automatic) | Cross-region inference |
| Open-source models | Yes (extensive) | Yes |
| Closed-source models | Yes (extensive) | Yes |
| Image generation |  | Yes |
| Embeddings |  | Yes |
| Fine-tuning | No | Yes (select models) |
| Batch inference | No | Yes (50% discount) |
| Provisioned throughput | No | Yes (guaranteed capacity) |
| Knowledge Bases / RAG | No | Yes |
| Guardrails | No | Yes |
| Agents | No | Yes (AgentCore) |
| VPC / data residency | Regional routing (Enterprise) | Yes |
| Observability | Usage dashboard, per-key tracking | CloudWatch, usage API |
| Model update speed | Very fast | Moderate |
| Best for | Teams wanting broad model access with simple routing | Enterprise teams needing managed infra on AWS |

4. OpenAI Platform

OpenAI Platform is OpenAI's direct API and developer platform, providing access to the GPT family, o-series reasoning models, DALL·E, Whisper, TTS, Codex, and more.

What Makes It Different

The OpenAI Platform gives you first-party, day-one access to OpenAI's latest models, while Bedrock typically takes weeks or longer to onboard new models from external providers.

OpenAI has also built a comprehensive agent-building ecosystem. The Responses API provides built-in tools for web search, file search, and computer use. The Agents SDK offers orchestration for multi-step workflows. And Codex provides agentic coding capabilities integrated directly into the platform.

The platform covers a wide range of modalities under a single API: text, image generation, image editing, text-to-speech, speech-to-text (Whisper), video generation (Sora), real-time voice, and embeddings.

Key Differences from AWS Bedrock

The OpenAI Platform is limited to OpenAI's own models. You cannot access Claude, Gemini, Llama, or Mistral through it. It also lacks Bedrock's enterprise infrastructure features like VPC integration, Knowledge Bases, configurable Guardrails, and provisioned throughput.

Comparison Table

| Feature | OpenAI Platform | AWS Bedrock |
|---|---|---|
| Pricing model | Pay-as-you-go (per token) | Pay-as-you-go (per token) |
| Markup | None (direct pricing) | Provider list price (no documented markup) |
| Free tier | Free credits for new accounts | $200 in credits for new accounts |
| Multi-provider models | No (OpenAI models only) | Yes (15+ providers) |
| Chat models | Yes (GPT family, o-series) | Yes (multi-provider) |
| Reasoning models | Yes (o-series) | Yes (via Anthropic, DeepSeek, etc.) |
| Image generation | Yes (DALL·E, GPT Image) | Yes (Stability AI, Nova Canvas) |
| Video generation | Yes (Sora) | Yes (Nova Reel, Luma AI) |
| Audio (TTS/STT) | Yes (TTS, Whisper, Realtime API) | Yes (Nova Sonic) |
| Embeddings | Yes | Yes |
| Fine-tuning | Yes (supervised + reinforcement) | Yes (select models) |
| Batch inference | Yes (50% discount) | Yes (50% discount) |
| Provisioned throughput | No | Yes (guaranteed capacity) |
| Built-in web search | Yes (Responses API) | No |
| Computer use | Yes | Yes (via Claude) |
| Agent framework | Yes (Responses API, Agents SDK) | Yes (AgentCore) |
| Knowledge Bases / RAG | File search (Responses API) | Yes (managed) |
| Guardrails | Moderation API | Yes (configurable) |
| VPC / data residency | No | Yes |
| Model update speed | Fastest (first-party) | Moderate |
| Best for | Teams building primarily with OpenAI models and agents | Enterprise teams needing multi-provider access on AWS |

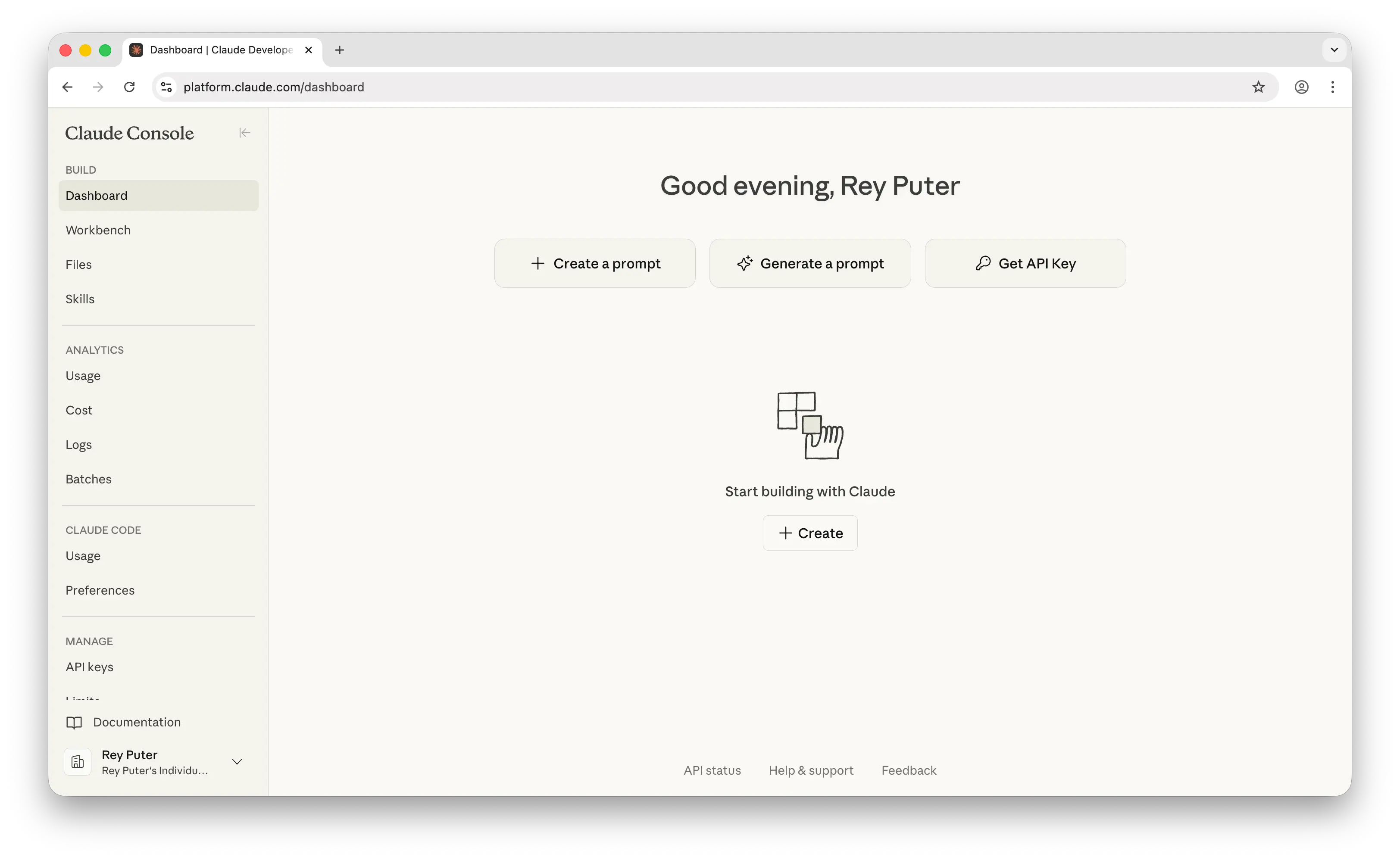

5. Anthropic Console

Anthropic Console is Anthropic's direct API platform for accessing the Claude model family, including Claude Opus, Sonnet, and Haiku.

What Makes It Different

The Anthropic Console provides first-party, direct access to Claude models at Anthropic's own list prices. Going direct also means you get new Claude releases immediately, rather than waiting for Bedrock to onboard them.

Claude excels at coding, agentic tasks, and long-document processing, with extended thinking for complex reasoning and a large context window at standard pricing. The platform also offers prompt caching that can reduce input costs by up to 90% for repeated contexts, and a 50% batch inference discount.
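
To see where the "up to 90%" figure comes from, consider many requests that share one long prompt prefix, such as a large document followed by different questions. The sketch below is a back-of-the-envelope cost model, not Anthropic's billing logic: it assumes cache hits are billed at 10% of the base input price, and it also assumes the first request, which writes the prefix to the cache, is billed at 1.25x the base price. The $3-per-million-token base price and the token counts are hypothetical; check Anthropic's pricing page for real numbers.

```python
# Hypothetical prices and multipliers; check the provider's pricing page.
BASE_INPUT_USD_PER_MTOK = 3.00   # base input price per million tokens (hypothetical)
CACHE_WRITE_MULTIPLIER = 1.25    # assumed surcharge when the prefix is first cached
CACHE_READ_MULTIPLIER = 0.10     # cache hits at 10% of base, i.e. 90% off


def input_cost_usd(prefix_tokens: int, suffix_tokens: int, requests: int, cached: bool) -> float:
    """Input-token cost of `requests` calls that share the same prompt prefix.

    Assumes every call after the first hits the cache (no expiry in between).
    """
    if requests < 1:
        raise ValueError("requests must be at least 1")
    per_token = BASE_INPUT_USD_PER_MTOK / 1_000_000
    if not cached:
        # Every call pays full price for the whole prompt.
        return requests * (prefix_tokens + suffix_tokens) * per_token
    write = prefix_tokens * CACHE_WRITE_MULTIPLIER                  # first call writes the cache
    reads = (requests - 1) * prefix_tokens * CACHE_READ_MULTIPLIER  # later calls read it
    fresh = requests * suffix_tokens                                # uncached tail of each prompt
    return (write + reads + fresh) * per_token


# 50 questions about the same 100k-token document, 500 tokens per question.
without_cache = input_cost_usd(100_000, 500, 50, cached=False)
with_cache = input_cost_usd(100_000, 500, 50, cached=True)
print(f"without caching: ${without_cache:.2f}, with caching: ${with_cache:.2f}")
```

Under these assumptions the cached run costs about $1.92 versus about $15.08 uncached, a saving of roughly 87%. The saving climbs toward, but never quite reaches, 90% as more requests share the prefix, because the one-off write surcharge is spread over more cache hits while the question tokens are always billed in full.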

Key Differences from AWS Bedrock

The Anthropic Console is limited to Claude models only. There's no access to GPT, Gemini, Llama, Mistral, or any other model family. It also lacks Bedrock's managed services like Knowledge Bases, Guardrails, and managed RAG pipelines. Anthropic does offer tool use and computer use capabilities via the API, but the orchestration layer is left to the developer.
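
To illustrate what "left to the developer" means in practice, here is a minimal sketch of the loop you would write yourself around Claude's tool use: call the model, run any tool it requests, send the result back, and repeat until it stops asking for tools. The message shapes (`tool_use` and `tool_result` content blocks, a `stop_reason` of `"tool_use"`) follow the Messages API as we understand it, but `fake_call_model` is a hypothetical offline stand-in for the real API call and `get_weather` is a hypothetical tool, so treat this as a sketch rather than production code.

```python
from typing import Any, Callable

Message = dict[str, Any]


def get_weather(city: str) -> str:
    """Hypothetical local tool the model is allowed to call."""
    return f"Sunny in {city}"


TOOLS: dict[str, Callable[..., str]] = {"get_weather": get_weather}


def fake_call_model(messages: list[Message]) -> dict[str, Any]:
    """Offline stand-in for a Messages API call (hypothetical).

    Requests one tool call, then answers once a tool_result comes back.
    """
    last = messages[-1]["content"]
    if isinstance(last, list) and last and last[0].get("type") == "tool_result":
        return {
            "stop_reason": "end_turn",
            "content": [{"type": "text", "text": f"Forecast: {last[0]['content']}"}],
        }
    return {
        "stop_reason": "tool_use",
        "content": [{"type": "tool_use", "id": "toolu_01", "name": "get_weather",
                     "input": {"city": "Paris"}}],
    }


def run_agent(prompt: str,
              call_model: Callable[[list[Message]], dict[str, Any]] = fake_call_model,
              max_turns: int = 5) -> str:
    """Developer-owned orchestration: model, then tools, then model, until no tool is requested."""
    messages: list[Message] = [{"role": "user", "content": prompt}]
    for _ in range(max_turns):
        response = call_model(messages)
        # Keep the assistant turn (including its tool_use blocks) in the history.
        messages.append({"role": "assistant", "content": response["content"]})
        if response["stop_reason"] != "tool_use":
            return "".join(b["text"] for b in response["content"] if b["type"] == "text")
        # Run each requested tool locally and send the results back as a user turn.
        results = [
            {"type": "tool_result", "tool_use_id": block["id"],
             "content": TOOLS[block["name"]](**block["input"])}
            for block in response["content"] if block["type"] == "tool_use"
        ]
        messages.append({"role": "user", "content": results})
    raise RuntimeError("agent did not finish within max_turns")


print(run_agent("What's the weather in Paris?"))  # prints Forecast: Sunny in Paris
```

In this setup, concerns such as retries, tool errors, turn limits, and persisting conversation history are all yours to handle.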

Comparison Table

| Feature | Anthropic Console | AWS Bedrock |
|---|---|---|
| Pricing model | Pay-as-you-go (per token) | Pay-as-you-go (per token) |
| Markup | None (direct pricing) | Provider list price (no documented markup) |
| Free tier | Small credits for new accounts | $200 in credits for new accounts |
| Multi-provider models | No (Claude only) | Yes (15+ providers) |
| Chat models | Yes (Opus, Sonnet, Haiku) | Yes (multi-provider) |
| Context window | 1M tokens (standard pricing) | Varies by model |
| Extended thinking | Yes | Yes (via Claude on Bedrock) |
| Computer use | Yes | Yes (via Claude on Bedrock) |
| Image generation | No | Yes |
| Video generation | No | Yes |
| Audio (TTS/STT) | No | Yes |
| Embeddings | No | Yes |
| Fine-tuning | No | Yes (select models) |
| Batch inference | Yes (50% discount) | Yes (50% discount) |
| Provisioned throughput | No | Yes (guaranteed capacity) |
| Prompt caching | Yes (90% off cache hits) | Yes (varies by model) |
| Knowledge Bases / RAG | No | Yes |
| Guardrails | Built-in safety (constitutional AI) | Yes (configurable) |
| Agents | Tool use + computer use API | Yes (AgentCore) |
| VPC / data residency | Inference geo routing | Yes |
| Usage / cost API | Yes (granular Admin API) | CloudWatch, usage dashboard |
| Fast mode |  |  |
| Model update speed | Fastest (first-party) | Moderate |
| Best for | Teams building primarily with Claude for coding and agents | Enterprise teams needing multi-provider access on AWS |

Which Should You Choose?

Choose Puter.js if you're building a frontend web app and want to add AI features without any backend, API keys, or usage costs. The user-pays model is ideal for developers who don't want to manage infrastructure or worry about scaling bills. The trade-off: no enterprise features, no embeddings, no fine-tuning, and your users need a Puter account to cover their own usage.

Choose Google AI Studio if you want some of the cheapest production pricing in the industry and are comfortable building exclusively with Google's Gemini models. The lightweight model tiers are unmatched for high-volume workloads, and the free tier is useful for prototyping. The trade-off: no third-party models, free tier quotas can change, and enterprise features require migrating to Vertex AI.

Choose OpenRouter if you need access to the broadest model catalog (300+ models from 60+ providers) through a single, OpenAI-compatible API. The automatic fallback routing, free model tier, and near-zero setup make it the simplest multi-provider gateway available. The trade-off: no fine-tuning, no batch inference, no managed RAG or agents, and the 5.5% credit fee adds up at scale.

Choose OpenAI Platform if you're building primarily with GPT models, o-series reasoning models, or the Codex agentic coding tools and want first-party, day-one access to the latest releases. The Responses API and Agents SDK provide a tightly integrated agent-building experience. The trade-off: you're locked into OpenAI models only, there's no VPC integration, and you'll need a separate solution if you ever want Claude, Gemini, or open-source models.

Choose Anthropic Console if Claude is your primary model and you want the best possible pricing, deepest prompt caching discounts, and fastest access to new Claude releases. The large context window at standard pricing and batch discount make it exceptionally cost-effective for coding agents and long-document workflows. The trade-off: Claude models only, no image/video/audio generation, no managed RAG, and the orchestration layer for agents is left to you.

Stick with AWS Bedrock if you need multi-provider model access with enterprise-grade infrastructure: VPC integration, data residency controls, Knowledge Bases for managed RAG, configurable Guardrails, AgentCore for agent deployment, provisioned throughput for guaranteed capacity and latency SLAs, and deep integration with the broader AWS ecosystem (S3, Lambda, CloudWatch, IAM). None of the five alternatives above offer provisioned throughput with committed capacity guarantees. Bedrock is the right choice for organizations already invested in AWS that need compliance, governance, and operational maturity at scale.

Conclusion

The best AWS Bedrock alternatives are Puter.js, Google AI Studio, OpenRouter, OpenAI Platform, and Anthropic Console. Each takes a different approach: Puter.js eliminates developer costs entirely, Google AI Studio offers the best free tier and cheapest production pricing, OpenRouter provides the broadest multi-provider access, OpenAI Platform delivers the most integrated first-party experience, and Anthropic Console offers the deepest discounts for Claude-heavy workloads. The right choice depends on whether you prioritize cost, model variety, enterprise features, or simplicity.
